Short version: a context store is a replicated, pre-indexed data layer that lets AI agents search enterprise data without hitting source APIs every time the model wants to know something.

Most teams don't build one. They plug the agent into Salesforce and Zendesk, watch a clean demo, ship it, and then production shows up. That's usually when somebody starts asking what a context store is.

TL;DR

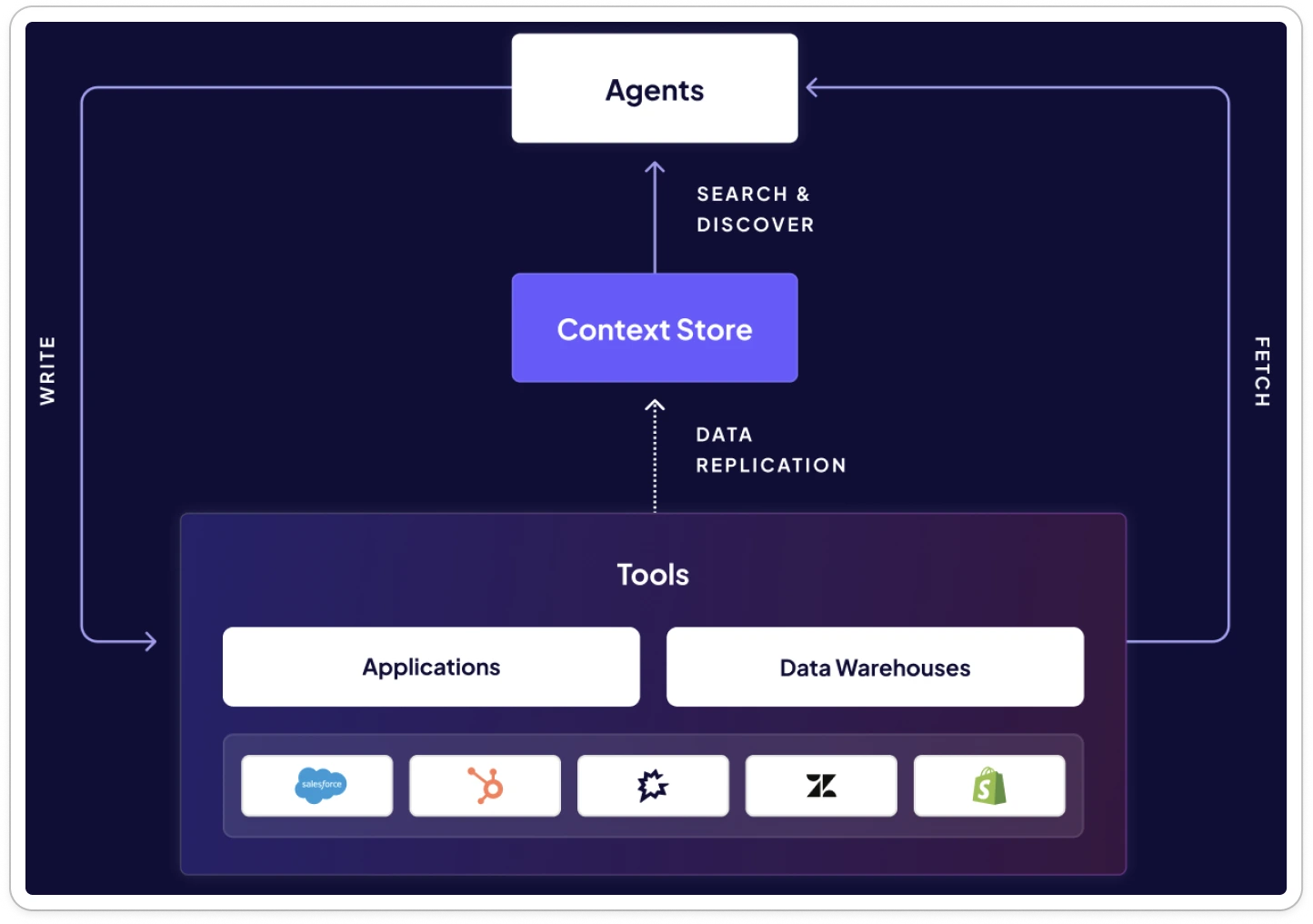

- It's a replicated, indexed, curated copy of your enterprise data. Agents search it. They don't call source APIs to read.

- Direct API access is fine for a demo and falls apart at real scale. Latency stacks. Rate limits hit. The context window fills up before the agent says anything useful.

- Built specifically for agent workflows. Cross-source search that lets the agent resolve entities across systems. Curated fields. Natural language search over structured records. A cache won't do this. A vector DB won't either.

- The right architecture pairs the store (for discovery and search) with direct API access (for fetch and write). One layer knows. The other layer acts.

What Are the Most Important Properties of a Context Store?

Wire the agent straight to Salesforce, Zendesk, and Slack and you've signed up to handle pagination, rate limits, auth, and response parsing on every single call. A context store takes that work off the table. It replicates a curated slice of source data into managed storage. The agent searches that storage in milliseconds.

Three properties make this work. None of them are negotiable.

Proactive Data Replication

The store pulls the data before the agent asks for it. Connectors extract and sync on a schedule (hourly is the typical knob), so the data is sitting there waiting when the query lands.

That decouples agent performance from source API performance. Vendor having a bad day? Rate-limiting? Down? Doesn't matter. The agent's search never touches their API during a query.
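A minimal sketch of that decoupling, assuming an in-memory replica and a hypothetical `pull` callable standing in for a source connector (neither name comes from any real SDK). The scheduled sync may fail, but the search path only ever reads the last good copy.

```python
class SourceDown(Exception):
    """Raised by the hypothetical source client when the vendor API is unavailable."""


class Replica:
    """In-memory stand-in for a replicated store (illustrative only)."""

    def __init__(self):
        self.records = []

    def sync(self, pull):
        """Run on a schedule. Keep the last good copy if the source fails."""
        try:
            self.records = list(pull())
            return True
        except SourceDown:
            return False

    def search(self, term):
        """Query path: reads only the local copy, never the source API."""
        term = term.lower()
        return [r for r in self.records if term in r["name"].lower()]


def healthy_pull():
    return [{"id": "c1", "name": "Acme Corp"}, {"id": "c2", "name": "Globex"}]


def failing_pull():
    raise SourceDown("vendor API is rate-limiting")


replica = Replica()
first_sync_ok = replica.sync(healthy_pull)    # scheduled sync succeeds
second_sync_ok = replica.sync(failing_pull)   # next sync fails; old copy is kept
hits = replica.search("acme")                 # still answered from the replica
```

The trade-off is staleness: after a failed sync the replica serves the previous snapshot, which is why freshness-sensitive actions still go to the source.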

Pre-Indexed for Agent Query Patterns

A query that spans three SaaS tools shouldn't have to fan out into three different API calls in three different query languages. Replicated data gets indexed for how agents actually search: natural language, entity lookup, joins across systems.

Ingestion converts raw API responses into something agents can use. Fields that matter for search (customer names, deal sizes, ticket metadata, contact details) get indexed selectively. Nobody dumps the whole database into the index. Query time is pure retrieval. The heavy lifting happens in the background where the user can't feel it.
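To make selective indexing concrete, here is a rough sketch that builds a tiny inverted index over a curated field list at ingestion time. The field names and the `INDEXED_FIELDS` tuple are illustrative assumptions, not a real schema, and a production index would do far more than split on whitespace.

```python
from collections import defaultdict

# Assumption: these are the fields curated for search; everything else is skipped.
INDEXED_FIELDS = ("customer_name", "ticket_subject")


def build_index(records):
    """Map each lowercase token in a curated field to the IDs of records containing it."""
    index = defaultdict(set)
    for record in records:
        for field in INDEXED_FIELDS:
            for token in str(record.get(field, "")).lower().split():
                index[token].add(record["id"])
    return index


def search(index, query):
    """Return IDs of records that contain every token in the query."""
    tokens = query.lower().split()
    if not tokens:
        return set()
    matches = [index.get(token, set()) for token in tokens]
    return set.intersection(*matches)


records = [
    {"id": "t1", "customer_name": "Acme Corp", "ticket_subject": "Billing error",
     "raw_payload": "large blob that is never indexed"},
    {"id": "t2", "customer_name": "Globex", "ticket_subject": "Login error",
     "raw_payload": "another blob"},
]
index = build_index(records)   # the heavy lifting, done once in the background
```

Here `search(index, "acme billing")` touches only the prebuilt index, and a term that appears only in `raw_payload` finds nothing because that field was never indexed.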

Sub-Second Agent-Optimized Retrieval

"Find all customers closing this month with deal sizes greater than $5,000."

Context store: under a second.

Without one: a sequence of paginated API calls that can run minutes long. Forty seconds in, the user already closed the tab.

This is not the same as caching, and the distinction matters. A cache stores raw API responses so repeat calls hit faster. It speeds up things the agent already knows how to look up. A context store does something different. It indexes data from many sources into one searchable layer so the agent can answer questions that cross systems, match entities, or need natural language interpretation. Cache = known lookups, faster. Context store = unknown lookups, possible.
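The difference is easy to see in a toy example. The sketch below uses made-up records and hypothetical helper names: the cache is keyed by the exact request, while the store is one collection searchable by field across sources.

```python
# A cache is keyed by the exact request, so it only helps on repeats.
cache = {
    "GET /contacts/42": {"id": 42, "email": "ana@example.com", "source": "crm"},
}


def cached_lookup(request):
    """Return the stored response for an identical request, else None (a miss)."""
    return cache.get(request)


# A store holds records from several sources in one searchable collection.
store = [
    {"source": "crm", "id": 42, "email": "ana@example.com"},
    {"source": "billing", "id": "cus_9", "email": "ana@example.com"},
    {"source": "support", "id": 7731, "email": "bo@example.com"},
]


def store_search(**filters):
    """Return every record, from any source, whose fields match all filters."""
    return [r for r in store if all(r.get(k) == v for k, v in filters.items())]


repeat_hit = cached_lookup("GET /contacts/42")                       # known lookup
new_question = cached_lookup("GET /contacts?email=ana@example.com")  # never asked before
cross_source = store_search(email="ana@example.com")                 # spans two systems
```

The cache misses on the question it has not seen, while the store answers it with records from both the CRM and billing.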

Why Do AI Agents Need a Context Store?

Three forces push teams here, and once you've felt one, you usually feel all three: API scaling limits, data discovery, and cross-system entity resolution. Each one compounds the others.

Direct API Access Does Not Scale

For known queries against known endpoints, Model Context Protocol (MCP) servers and tool calling work fine. Fetch a Salesforce contact by ID. Update a Zendesk ticket. No problem.

The pattern starts cracking the moment the agent has to discover data across systems, search large record sets, or join sources together. Every API call piles on latency. A Microsoft architecture comparison clocks MCP overhead at 100–300ms per tool invocation compared to direct REST. Each call burns tokens. Each call risks a rate limit. Ten sequential tool calls add up to one to three seconds of latency from MCP overhead alone, and the model hasn't done any of its actual reasoning yet.
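The arithmetic behind that range is simple multiplication, shown here as a small sketch. It assumes the calls run strictly one after another and uses only the 100–300ms per-call figure quoted above.

```python
def sequential_overhead_ms(calls, per_call_ms):
    """Total added latency when tool calls run one after another."""
    return calls * per_call_ms


low = sequential_overhead_ms(10, 100)    # best case from the cited range
high = sequential_overhead_ms(10, 300)   # worst case from the cited range
print(f"{low / 1000:.1f}s to {high / 1000:.1f}s of overhead")
```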

The math compounds with every source you add. Most agent projects start by wiring up a few tools and letting the agent fetch what it needs on the fly. The demo works. Team ships. Everybody high-fives.

Then production scale arrives. Airbyte's CEO Michel Tricot put it directly: "That confidence doesn't survive contact with production scale." Complex natural language queries fan out into paginated calls and large-dataset filtering. Context window growth follows. Rate-limit errors follow. Customer trust follows them out the door.

The bigger the scale, the worse the math. A Cloudflare example shows that without response filtering, one large API can chew through 1.17 million tokens over MCP. Even with server-side filtering, the Microsoft comparison cited above has MCP running 15–25% slower than REST because of JSON-RPC overhead, while serving 50–80% fewer LLM tokens. Those savings help. They don't change the underlying math for production agents handling unpredictable questions from a lot of different customers. If you can't predict the query, you can't shrink the call chain.

The Discovery Problem

This is the part nobody talks about until they get burned by it.

Agents on direct API access can only retrieve data they already know exists. Exact IDs. Specific endpoints. Precise query parameters. Source APIs were designed for human developers reading docs, not autonomous agents poking around for what's available. They give you ID-based retrieval. They don't give you discovery.

Try this prompt: "Find every customer who had a failed charge last week and also opened a support ticket."

The agent has to query the billing API for failed charges (assuming it knows /charges exists), pull customer IDs out (assuming it understands the schema), query the support API with those IDs (assuming it knows the ticket query format), and reconcile timestamps across two systems with two different time formats. Each step assumes knowledge the agent doesn't actually have through API discovery.

As Airbyte's analysis of RAG in agentic AI puts it: "What the agent actually needs is a unified layer that resolves and aligns entities so it can search across everything at once."

So the agent stalls. Not because the data is gone. Because it has nowhere to start. A context store flips the model. It replicates, indexes, and makes source data searchable up front, so when the question hits, the agent searches an existing index in under a second instead of probing endpoints. Discovery stops being the bottleneck. Every new source you connect makes the agent smarter instead of slower.
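Once both sources are replicated, the failed-charge prompt above reduces to a local filter and a set intersection. The sketch below is illustrative only: the record shapes, the field names, and the choice to join on email are assumptions, and the two timestamp formats (epoch seconds and ISO 8601) stand in for the mismatch described earlier.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical replicated records. Billing uses epoch seconds, support uses ISO 8601.
charges = [
    {"customer_email": "ana@example.com", "status": "failed", "created": 1717400000},
    {"customer_email": "bo@example.com", "status": "succeeded", "created": 1717400500},
    {"customer_email": "cy@example.com", "status": "failed", "created": 1717401000},
]
tickets = [
    {"requester_email": "ana@example.com", "created_at": "2024-06-04T09:30:00+00:00"},
    {"requester_email": "bo@example.com", "created_at": "2024-06-04T10:00:00+00:00"},
]


def to_utc(value):
    """Normalize either timestamp format to an aware UTC datetime."""
    if isinstance(value, (int, float)):
        return datetime.fromtimestamp(value, tz=timezone.utc)
    return datetime.fromisoformat(value).astimezone(timezone.utc)


def failed_charge_and_ticket(now, days=7):
    """Emails with a failed charge and a ticket inside the lookback window."""
    since = now - timedelta(days=days)
    failed = {c["customer_email"] for c in charges
              if c["status"] == "failed" and to_utc(c["created"]) >= since}
    opened = {t["requester_email"] for t in tickets
              if to_utc(t["created_at"]) >= since}
    return sorted(failed & opened)


result = failed_charge_and_ticket(datetime(2024, 6, 7, tzinfo=timezone.utc))
```

No endpoint probing and no pagination happen at query time. The agent's remaining job is deciding that email is the right join key.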

Entity Resolution Across Sources

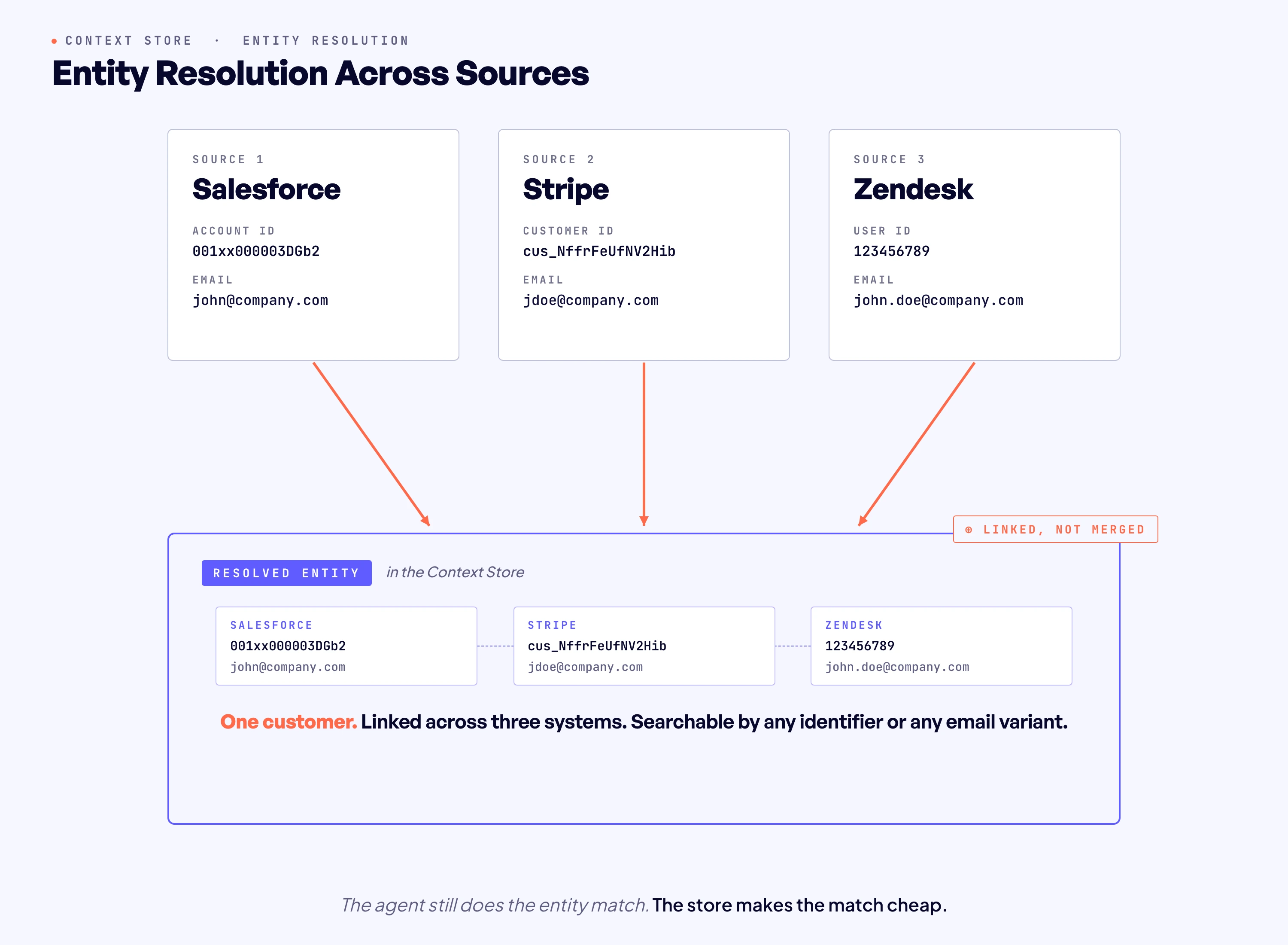

The same customer is a different person in every system you check.

Three IDs. Three email variants. One actual human.

Direct API access returns each system's native record with zero cross-system linking. An agent hitting these systems independently has to do the matching itself, in real time, while the user waits. Three sequential lookups. Three pagination loops. Three different schemas. Resolution math running in the context window before the agent has answered a thing.

A context store changes what that work costs. Each connected source gets its own pre-replicated, indexed copy that the agent can search in milliseconds. The agent still does the entity match. That's reasoning work, not replication work. But now it does it against fast, indexed stores instead of three live APIs. Search by email across CRM, billing, and support, scan the candidate records each store returns, stitch the picture together without burning a tool call per pagination page.

Without this layer, every cross-system question is one the agent either gets wrong, gets slowly, or gives up on.
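As a rough illustration of the matching step, the sketch below groups candidate records from three per-source searches by a normalized email. The records are invented, and dropping the `+tag` suffix is a heuristic assumption that will not hold for every mail provider.

```python
def normalize_email(email):
    """Lowercase, trim, and drop any +tag so common variants compare equal."""
    local, _, domain = email.strip().lower().partition("@")
    return f"{local.split('+')[0]}@{domain}"


# Hypothetical results returned by searching each source's store.
candidates = [
    {"source": "crm", "id": "003A1", "email": "Ana.Silva@Example.com"},
    {"source": "billing", "id": "cus_77", "email": "ana.silva+billing@example.com"},
    {"source": "support", "id": "9912", "email": " ana.silva@example.com "},
    {"source": "support", "id": "9950", "email": "bo@example.com"},
]


def group_by_person(records):
    """Group candidate records by normalized email."""
    groups = {}
    for record in records:
        groups.setdefault(normalize_email(record["email"]), []).append(record)
    return groups


people = group_by_person(candidates)
ana_ids = [(r["source"], r["id"]) for r in people["ana.silva@example.com"]]
```

Three IDs and three email variants collapse into one group, and the whole match runs over search results already in hand.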

How Does a Context Store Differ from a Vector Database, Data Warehouse, or RAG Pipeline?

This is where we see the most expensive mistakes. Teams reach for a vector DB or a warehouse, call it the context layer, and don't realize the gap until the agent's been in production a few weeks.

These tools aren't competitors. They're complementary. The trouble is when one gets cast in a role it was never built for.

How Does a Context Store Work Architecturally?

Three layers. Each one assumes the one below it.

Replication Layer

Bottom layer. Connectors pull data from source systems on a schedule, the same plumbing data teams have used for ETL for years. The difference is the destination. The data lands in an agent-optimized index, not a warehouse.

This layer ingests raw, validates, normalizes (dedup, format alignment), and outputs a curated dataset agents can actually use. Not every field. Not every source. Only what matters for agent search ends up in the store. The rest stays where it was, reachable through direct API calls when an agent specifically needs it.
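The validate, normalize, and dedup steps might look something like the sketch below. The `CURATED_FIELDS` list, the validity rule, and the "newest version wins" policy are all assumptions chosen for illustration.

```python
from datetime import datetime, timezone

# Assumption: only these fields are kept for agent search.
CURATED_FIELDS = ("id", "name", "email", "updated_at")


def normalize(raw):
    """Validate one raw record and align its formats. Returns None if invalid."""
    if not raw.get("id") or not raw.get("email"):
        return None
    record = {field: raw.get(field) for field in CURATED_FIELDS}
    record["email"] = record["email"].strip().lower()
    record["updated_at"] = datetime.fromisoformat(raw["updated_at"]).astimezone(timezone.utc)
    return record


def curate(raw_records):
    """Drop invalid rows and keep only the newest version of each ID."""
    latest = {}
    for raw in raw_records:
        record = normalize(raw)
        if record is None:
            continue
        current = latest.get(record["id"])
        if current is None or record["updated_at"] > current["updated_at"]:
            latest[record["id"]] = record
    return list(latest.values())


raw = [
    {"id": "c1", "name": "Acme", "email": " Ops@Acme.com",
     "updated_at": "2024-06-01T10:00:00+00:00", "audit_log": "long history"},
    {"id": "c1", "name": "Acme Corp", "email": "ops@acme.com",
     "updated_at": "2024-06-02T10:00:00+00:00"},
    {"id": None, "name": "broken row", "email": "x@example.com",
     "updated_at": "2024-06-02T10:00:00+00:00"},
]
curated = curate(raw)
```

The uncurated `audit_log` field never reaches the store, the duplicate collapses to its newest version, and the row without an ID is dropped.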

Index Layer

The middle layer turns replicated data into something queryable. Field selection is curated, not exhaustive. The fields and entities most useful for cross-source search (customer names, deal sizes, contact details, ticket status, account IDs) get indexed. Bulk attachments, raw audit logs, and config tables stay in the source system where they belong.

What the index doesn't do matters just as much. It doesn't decide whether "revenue" in your CRM means the same thing as "revenue" in your ERP. It doesn't merge three customer records into one. It doesn't tag fields with business semantics. Those are reasoning calls, and they belong to the agent. The index layer's job is making that reasoning cheap. Structured search, filter operators, sorting, pagination, results in milliseconds. The agent uses the index to ask better questions of cleaner data, not to skip the thinking.
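A toy version of structured search with filter operators, sorting, and pagination is sketched below. The operator names and the `(field, operator, target)` filter shape are assumptions for illustration and do not describe any particular product's query model.

```python
from datetime import date

# Hypothetical indexed deal records.
deals = [
    {"customer": "Acme", "amount": 12000, "close_date": date(2024, 6, 14)},
    {"customer": "Globex", "amount": 4000, "close_date": date(2024, 6, 20)},
    {"customer": "Initech", "amount": 8000, "close_date": date(2024, 6, 28)},
    {"customer": "Umbrella", "amount": 30000, "close_date": date(2024, 7, 3)},
]

OPERATORS = {
    "gt": lambda value, target: value > target,
    "gte": lambda value, target: value >= target,
    "lte": lambda value, target: value <= target,
    "eq": lambda value, target: value == target,
}


def search(records, filters, sort_by, descending=False, limit=10, offset=0):
    """Apply (field, operator, target) filters, then sort and slice one page."""
    matched = [r for r in records
               if all(OPERATORS[op](r[field], target) for field, op, target in filters)]
    matched.sort(key=lambda r: r[sort_by], reverse=descending)
    return matched[offset:offset + limit]


# "Customers closing this month with deal sizes greater than $5,000", for June 2024.
closing_in_june = search(
    deals,
    filters=[("amount", "gt", 5000),
             ("close_date", "gte", date(2024, 6, 1)),
             ("close_date", "lte", date(2024, 6, 30))],
    sort_by="amount",
    descending=True,
)
```

Turning the natural language question into those three filters is still the agent's reasoning step. The index only makes executing them cheap.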

Query Layer

Top layer. No pagination. No rate limits. The agent calls a search operation and gets structured results back from every connected source. The query layer takes natural language in, picks a retrieval pattern (semantic search, exact match, graph traversal, some combination), and returns hits in milliseconds.

Around the store, the full agent data path has three parts: replication for discovery and alignment (the store itself), fetch for timely action (direct connectors pulling the latest state right before an agent acts), and write-back for execution (update the ticket, send the message, create the record).

The store handles "knowing." Direct API access handles "doing." That split is the actual product insight, and most teams who skip the context store layer skip it because they haven't thought about that split yet.

For most workflows, data the store replicates inside the hour is plenty fresh for search and discovery. True freshness only matters at the moment of action, and at that moment the agent fetches from the source system directly before it writes.
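The discover, then fetch, then write sequence can be sketched with an in-memory stand-in for the source system. Everything here is hypothetical (`FakeSource`, `discover`, `close_open_tickets`). It only shows why the fetch before the write matters when the replica lags.

```python
class FakeSource:
    """Stand-in for a live source API (hypothetical, in-memory)."""

    def __init__(self, tickets):
        self.tickets = tickets

    def fetch(self, ticket_id):
        return dict(self.tickets[ticket_id])

    def write(self, ticket_id, **changes):
        self.tickets[ticket_id].update(changes)


def discover(replica, **filters):
    """Search the replicated copy. It may lag the source by up to one sync interval."""
    return [t for t in replica if all(t.get(k) == v for k, v in filters.items())]


def close_open_tickets(replica, source, customer):
    """Discover candidates in the replica, confirm state at the source, then write."""
    closed = []
    for hit in discover(replica, customer=customer, status="open"):
        fresh = source.fetch(hit["id"])      # current state right before acting
        if fresh["status"] == "open":        # skip anything that changed since sync
            source.write(hit["id"], status="closed")
            closed.append(hit["id"])
    return closed


source = FakeSource({
    "t1": {"id": "t1", "customer": "Acme", "status": "open"},
    "t2": {"id": "t2", "customer": "Acme", "status": "solved"},  # changed after last sync
})
stale_replica = [
    {"id": "t1", "customer": "Acme", "status": "open"},
    {"id": "t2", "customer": "Acme", "status": "open"},
]
closed_ids = close_open_tickets(stale_replica, source, "Acme")
```

The replica still lists `t2` as open, but the fetch sees that it was solved after the last sync, so only `t1` is written.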

How Does Airbyte's Context Store Work?

Most teams hit the same wall. Demo worked. Integrations looked clean. Production showed up, the questions got harder, the datasets got bigger, the API calls started stacking up. That's the moment the missing layer goes from theoretical to obvious.

Airbyte Agents was built for that moment. At the center is the Context Store: a managed, searchable replica of the entities agents actually need. Customer records. Deals. Tickets. Contacts. Raw attachments and audit logs and config data don't get replicated. They stay in the source system, where they belong, accessible through direct API access if and when a task needs them.

Every connected source gets its own isolated store, scoped to the organization. The store refreshes hourly or daily depending on the Airbyte plan, so the agent works from a consistent, governed snapshot instead of racing against live API state.

Each connected source surfaces through an Airbyte agent connector, and each connector exposes three operations: discover, fetch, write.

Discover runs against the pre-indexed Context Store replica and returns in under a second. That's how the agent filters and searches over large record sets without ever calling the source API. The Context Store is search only.

Fetch and write go straight to the source. Fetch pulls the current state of a specific record right before an action that depends on freshness. Write commits the change back. Writes never touch the Context Store, by design. They route through the source API every time.

The agent picks the right operation for the task. Teams building on the SDK can call context_store_search directly for granular control over filters, sorting, and pagination.

So: Context Store owns the "knowing" problem. Direct connectors own the "doing" problem. Neither tries to do both, and that boundary is the whole point.

The amount of work this takes off the engineering team is real. Auth, ingestion-time schema validation, query-time permission enforcement, all built in. The connector infrastructure underneath has been running since 2020, 1.2 million pipelines a day, thousands of companies.

That same foundation now powers 50+ agent connectors shaped specifically for how agents discover, fetch, and write. The team building the agent doesn't end up secretly building a mini integration platform underneath it.

For setup, status monitoring, plan-specific refresh cadence, and the full SDK query model, see the Context Store docs.

Why Does the Context Store Matter for Production Agents?

The gap between demo agents and production agents isn't a model gap. It's a data-access gap.

Demo agents work because they query small, known datasets through a handful of API calls. Production agents break because they get unpredictable questions across many systems holding millions of records. Rate limits. Latency stacking. Context bloat. Missed entity matches. All of those failure modes lead back to the same missing piece. A context store fills it. It hands the agent a pre-built, cross-source searchable layer it can match entities against in milliseconds, regardless of how many sources sit behind it.

Airbyte's launch benchmarks, comparing Airbyte Agent MCP to native vendor MCPs, showed agents using the Context Store made 40% fewer tool calls and used up to 80% fewer tokens overall. Per source: 90% on Zendesk. 80% on Gong. 75% on Linear. 16% on Salesforce.

Airbyte Context Store is the substrate for production agents. A unified, permission-aware layer fed by 600+ replication connectors and acted on through 50+ Agent Connectors handling real-time fetch and write into source systems. Read-only agents can describe the work. Agents that can write can actually do the work.

Whether the team builds with the Agent SDK, queries through the Agent MCP, or composes flows in Automations, every agent runs off the same picture of the business with the same freshness and the same permissions.

Get a demo to see how Airbyte Agents gives your agents fast, governed search across enterprise data.

Frequently Asked Questions

How is a context store different from a cache?

A cache returns the same raw API response faster on a repeat call. It can't answer a question the agent hasn't already asked, and it can't combine data from multiple sources into one answer. A context store does both, because it replicates and indexes data from every connected source into a searchable layer before the agent sends a single query.

Does a context store replace MCP servers or direct API access?

No. The Context Store handles search and nothing else, by product design. MCP servers and agent connectors do the rest: fetching fresh state right before an action, and committing writes back through direct API calls. Two different jobs, two different runtimes.

How fresh is the data in a context store?

Airbyte Context Store refreshes hourly or daily, depending on the plan. For anything that needs absolute current state (live ticket status, current inventory, etc.), the agent fetches directly from the source through agent connectors instead of relying on the store.

What data goes into a context store?

Not all of it. Airbyte picks the slice that's relevant to search: customer records, deal information, ticket metadata, contact details, project attributes. Raw file attachments, historical audit logs, and config data usually stay in the source system, reached through direct API access when something specifically asks for them.

How long does the Context Store take to populate?

First indexing depends on data volume and source API rate limits, anywhere from minutes to days. Agents can query partially-populated entities the whole time, so the team isn't blocked from building while it runs. After the initial pass, scheduled refreshes are routine, hourly to daily depending on the plan.

Can I build my own context store?

Yes. The shape: replicate data from each source into a search-optimized store, build entity resolution, build a query interface on top. Underneath that you're managing connectors per source, an indexing pipeline, cross-system entity matching, and scheduled replication to keep freshness honest. Airbyte ships all of it as managed infrastructure so you don't end up maintaining each piece yourself.

Try Airbyte Agents

We're building the future of agent data infrastructure. Be amongst the first to explore our new platform and get access to our latest features.
