The General Data Protection Regulation (GDPR) is the toughest privacy and security law in the world. It imposes obligations onto organizations that target or collect data related to people in the EU. The GDPR has resulted in harsh fines against those who violate its privacy and security standards, with penalties reaching into the tens of millions of euros. According to the European Commission, Pseudonymisation is the standard requirement for data used in statistical production, as stated in the following text:

Whenever Administrative data are used in statistical production Pseudonymisation is the standard requirement for dataEUR-LEX , REGULATION (EU) 2016/679, 2016). The information, which allows Re-identification of persons from pseudonymised data, should be kept separately from the use of the data for statistical purposes. Examples of personally identifiable information (PII) according to the European commission are:

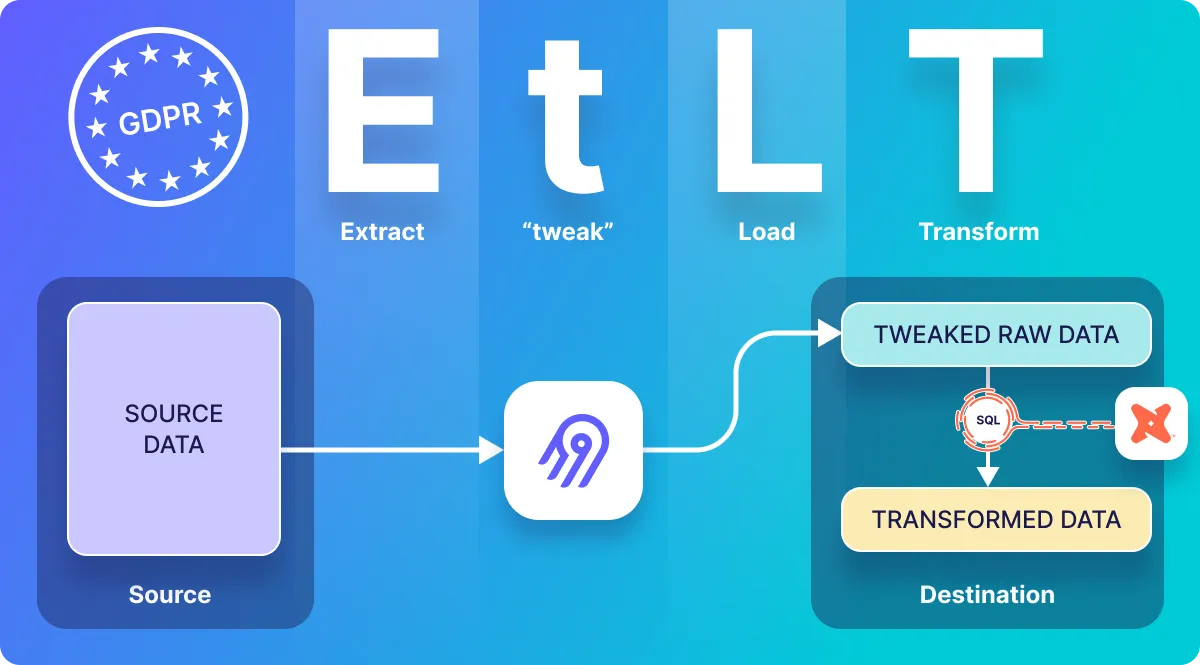

Name Address ID card/passport number Internet Protocol (IP) address Etc. Airbyte is an ELT tool – in which raw data is often stored untouched in the destination as demonstrated in the following image:

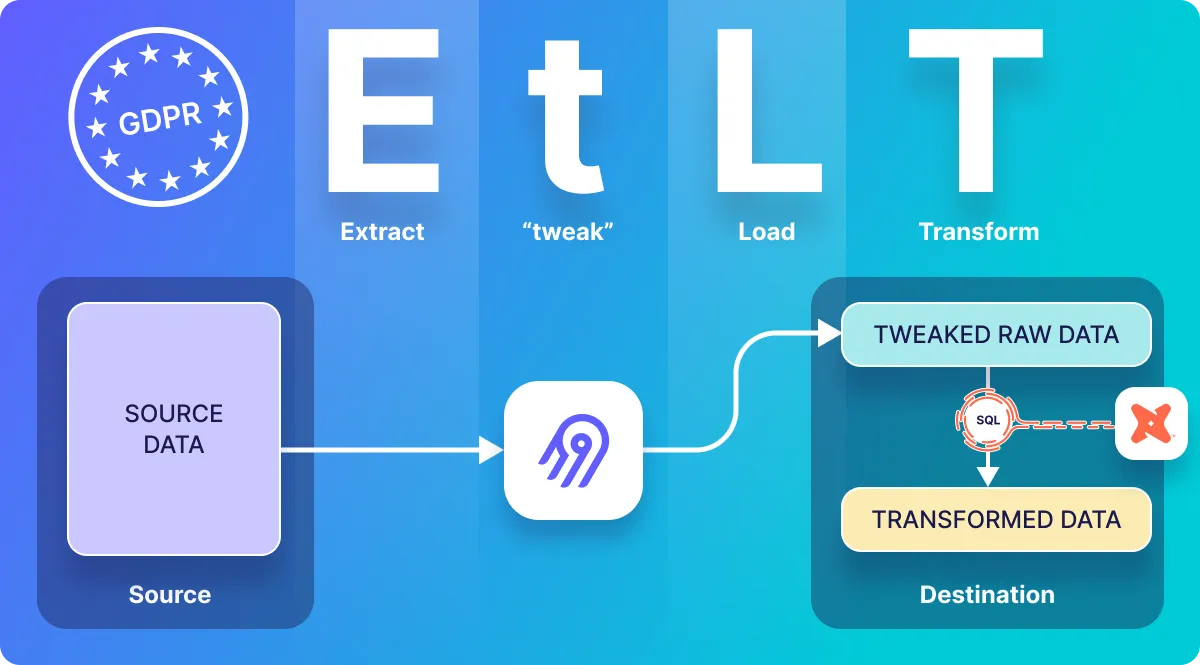

ELT data integration However, being GDPR compliant may require obfuscation or removal of some data before it gets loaded into a data warehouse. This can be achieved with an EtLT (Extract, “tweak”, Load, Transform) approach to data integration – in which a very light “tweak” (small “t”) transformation is done on the data before it reaches the destination, as demonstrated in the following image:

EtLT data integration Airbyte has recently introduced a low-code connector framework , which enables you to build source connectors for REST APIs by modifying boilerplate YAML files – and in this tutorial you will learn how to incorporate “tweak” transformation functionality into a low-code connector configuration to do the following:

Pseudonymise sensitive fields Remove sensitive fields Remove sensitive records that should not be sent to the destination Limitations:

This is not a comprehensive overview of GDPR or GDPR compliance tools. This is not a tutorial about how to create a low-code connector – there are other articles available for that. “Tweak” functionality is not yet generically applicable to pre-defined/pre-existing connectors – it is limited to connectors that you create with the low-code framework. This tutorial uses the Python interpreter to validate the connector – it does not take you through the steps to fully dockerize your source connector. You are about to learn how to use Airbyte as a tool to improve GDPR compliance. Let’s get started!

Initial setup Copying and pasting from the code presented in this article will provide you with a running example that can then be extended with “tweak” transform functionality.

Test out the PokeAPI To keep this tutorial simple, I make use of PokeAPI as a data source – the low-code connector presented in this article is a low-code version of the connector presented in the Python CDK Speedrun .

Before getting into the Airbyte connector definition, you may first wish to explore the PokeAPI API. For example, you can see the JSON for the ditto Pokemon by using cURL to send an HTTP request to PokeAPI, along with jq for parsing json responses as follows:

Which responds with much more information about the ditto Pokemon than can fit on a page. This response can be filtered down by using jq to display just a few of the attributes that are of interest. For example, you could use the following command to display only the types , name , and abilities that are returned from the above API call:

The response looks as follows:

Create a simple low-code connector In this section, I present the code that you can cut and paste so that you can have a running low-code connector that pulls data from the PokeAPI. This will be used as a basis for demonstrating GDPR-related functionality that is available when creating a low-code connector. The instructions presented below follow the same steps as the low-code getting started documentation .

Generate a source connector project:

Run the generator to create scaffolding for a new low-code (YAML) connector:

Select the Configuration Based Source as shown below:

Select a name for your connector. I have chosen gdpr-demo . Go to the newly created directory and setup your environment:

Modify source_gdpr_demon/spec.yaml which defines the user inputs required by the connector, so that it looks as follows:

The file secrets/config.json is used to specify the user inputs that are passed to the connector – which is required during low-code development since you are not (during development) making use of the full Airbyte UI for accepting inputs. Copy the following into that file:

Create a low-code connector to pull information about a Pokemon from the PokeAPI by copying the following YAML into source_gdpr_demo/gdpr_demo.yaml so that it looks as follows:

Copy this Pokemon schema into source_gdpr_demon/schemas/my_pokemon_example.json .

Modify integration_tests/configured_catalog.json to look as follows:

Test the new connector After the above has been implemented, you should be able to execute the read command to receive information about the “ditto” Pokemon as follows:

The above should respond with information about the “ditto” Pokemon embedded inside of a record object. To view just the same fields as you viewed when calling the API directly from cURL earlier in this tutorial, the response can be filtered using jq as follows:

Which should respond with the following:

From the above response, you can see that the name field looks as follows:

Airbyte transformations The steps presented so far are designed to get you to a point where you can test out the transformation functionality that is available in Airbyte’s low code connector development kit . Now that you have a simple functioning low-code connector, you are ready to test out different ways of tweaking your data before it is sent to the destination.

Pseudonymisation of a field Let’s assume that the Pokemon name is considered personally identifiable information and should not be copied into an Airbyte destination. You can pseudonymise the name by using an AddField transformation in the connector’s YAML specification (in my case located in source_gdpr_demo/gdpr_demo.yaml) that passes the name through a hash filter (which was recently added to the low-code framework) and that is called inside a Jinja template .

Add the following transformation to the connector:

The entire specification, which includes the transformation is shown below:

The connector can be tested as before:

And the response with the name hashed, looks as follows:

From the above response, you can see that the name is now a hash rather than ditto :

This is because the AddFields transformation has replaced the name field with the md5 hash of ditto . If you want to confirm that this is indeed an md5 hash of ditto , you can use a command line tool to calculate the hash of “ditto” as follows:

Which responds with 13d9173fd8c031b139be36976e39614a as expected.

However, this highlights a potential weakness in using a hash for pseudonymization – hashing is consistent for a given input, and therefore the same hash algorithm applied to the same input data will produce the same result. This means that if the name “ditto” has been hashed on other databases, or in other companies, or by an attacker, it can be cross correlated with the hash that you have just calculated. Because of this, it is often desirable to add a salt to the creation of the hash, so that the calculated hash will be consistent for every appearance of ditto within your system, but so that this hash cannot be easily cross-correlated to hashes that have been created by other systems.

The secrets/config.json file that you created above defines a field called my_secret_salt which can be passed into the hash function to provide the salt so that hashes created by your system do not match hashes created in other systems. Modify the transform in the connector YAML to include a salt as follows:

You will see that the name that will be sent to the destination system is different than without the salt. In my case, the calculated hash now looks as follows:

Removal of a field In some cases it may be preferable to to completely drop a field from the data before it is sent to the destination. This can be achieved with the RemoveFields transformation . For example, imagine that all of the URLs within the abilities sub-object are considered sensitive information that should be removed. This achieved by adding the following YAML to the connector definition:

The full connector YAML is given below:

Test the connector as before:

Airbyte has removed the URLs from the abilities (but not from the types ), and the response should look as follows:

Removing fields that match a glob pattern Imagine that regardless of which level a name appears, it should be removed from the data. This can be achieved with the following transformation, which recurses into all sub-objects searching for a field called name :

Or perhaps you wish to recursively remove every field that starts with the letters g-e-n. – i.e. generation-i , generation-ii , generation-iii , etc. This can accomplished with a wildcard: “gen*”, as shown below:

Dropping a sensitive record Entirely dropping a record if it meets some criteria may be useful for GDPR compliance.

In order to leverage the low-code connector that you just created, this section presents a slightly contrived example, which treats each Pokemon ability as if it were a unique record. This is accomplished by setting the field_pointer to [abilities] .This tells Airbyte to return each object within that array as a record.

The low-code YAML that treats each ability as if it is a separate record is given below and can be copied into source_gdpr_demo/gdpr_demo.yaml :

Execute the following (without jq to make it more concise and clear that multiple records are returned):

Which will respond with the following, that includes 2 records – one for each ability:

You can add a transform to filter records that meet a particular criteria. Let’s imagine that any ability with a value of imposter should not be sent to the destination. This can be accomplished by modifying the record_selector in the low-code YAML in source_gdpr_demo/gdpr_demo.yaml to look as follows:

And running the connector again:

Which should respond with the following:

Which confirms that the record corresponding to the imposter ability has been removed from the results, and is not sent to the destination.

Conclusion GDPR compliance is becoming increasingly important, and may require modifying data before it gets stored in a destination system. This tutorial has demonstrated several examples of how you can improve GDPR compliance of custom connectors that you develop using Airbyte’s low-code connector framework. This included pseudonymisation (hashing) of sensitive fields, removal of sensitive fields, and removal of sensitive records.

If you are looking to dive deeper into Airbyte, you may be interested in other Airbyte tutorials , or in Airbyte’s blog . You may also consider joining the conversation on our community Slack Channel , participating in discussions on Airbyte’s discourse , or signing up for our newsletter .