Collectively, organizations use thousands of platforms, databases, file systems, and other data silos to run their businesses. Every silo contains a partial view into the business, making it nearly impossible to make data-driven decisions without integrating the data sources into a single view. Given the breadth of possible integrations today and in the future, this presents a monumental engineering challenge.

Airbyte was created to solve this problem. Our founding thesis is that the only way to fully solve data movement is via an extensible, decentralized, open source approach. Therefore, we see our role as that of enabling the building and maintenance of thousands of data connectors with minimal effort. To facilitate creating and maintaining connectors, we have created both a Connector Development Kit (CDK), so that developers write less code for each connector, and a suite of Connector Acceptance Tests (CATs). Connector Acceptance Tests are the focus of this article. CATs are black box, platform-agnostic test suites that validate that a connector “behaves correctly” and follows best practices.

The ideas in this article can be summarized as follows:

- Airbyte wants to minimize the effort to build & maintain thousands of robust, high quality data connectors.
- Building robust software requires automated testing techniques like black box testing: before shipping something to the user, we must verify it behaves correctly under both “happy” and adverse conditions.
- For Airbyte connectors to behave correctly, they must follow the Airbyte Specification: a set of common rules and behaviors which allow any pair of connectors to “talk to each other” via Airbyte.
- Since Airbyte’s goal is enabling thousands of data connectors, we can significantly reduce the developer effort required for testing by providing a set of prebuilt test suites (CATs) which can be used by every connector, regardless of the data silo it connects to or its implementation language.
- In our experience, CATs have provided us with a lot of quality and velocity advantages. We’ll discuss those and the lessons learned.
- While CATs provide immense value, they are not comprehensive. We discuss their limitations and the need for additional testing to verify connector quality.

Defining correct behavior: implementing the Airbyte specification

The Airbyte Specification describes the rules and behaviors which allow any pair of data connectors to “talk to each other” via Airbyte. As a quick recap, there are two types of connectors in Airbyte:

- Source connectors: They can extract data from an underlying API or database.
- Destination connectors: They can write data to an underlying API or database.

For simplicity, we’ll only discuss source connectors for the rest of this article. But the same ideas and lessons apply to destination connectors as well.

Any source connector that implements the Specification correctly can work with any destination connector which implements the Specification correctly. This is super valuable as it means a connector can be developed without any knowledge of which other connectors it will be used in conjunction with. As long as everyone plays by the Specification rules, all is well.

At a high level, the Specification requires a source connector to be able to do four main things through a CLI: describe the required user inputs, verify the user inputs, describe the output schema, and export the source data.
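To make the four operations concrete, here is a minimal sketch of a toy source exposing them through a command line. Everything in it is illustrative: the `run` and `main` functions, the `--streams` flag, the sample data, and the message shapes are assumptions made for this example, not Airbyte's actual protocol or CLI.

```python
import argparse
import json

# Hypothetical toy source; message shapes are illustrative only.
SPEC = {
    "type": "object",
    "required": ["api_key"],
    "properties": {"api_key": {"type": "string", "title": "API Key"}},
}
STREAMS = {
    "campaigns": {
        "schema": {"campaign_id": "integer", "campaign_name": "string"},
        "rows": [{"campaign_id": 1, "campaign_name": "Spring sale"}],
    }
}


def run(command, config=None, selected=None):
    """Return the messages the toy connector emits for one operation."""
    config = config or {}
    if command == "spec":  # describe the required user inputs
        return [{"type": "SPEC", "spec": SPEC}]
    if command == "check":  # verify the user inputs
        ok = bool(config.get("api_key"))
        message = "" if ok else "A valid api_key is required."
        return [{"type": "CONNECTION_STATUS", "ok": ok, "message": message}]
    if command == "discover":  # describe the output schema
        schemas = {name: s["schema"] for name, s in STREAMS.items()}
        return [{"type": "CATALOG", "streams": schemas}]
    if command == "read":  # export the source data for the selected tables
        return [
            {"type": "RECORD", "stream": name, "data": row}
            for name in (selected or [])
            for row in STREAMS[name]["rows"]
        ]
    raise ValueError(f"unknown command: {command}")


def main(argv=None):
    parser = argparse.ArgumentParser()
    parser.add_argument("command", choices=["spec", "check", "discover", "read"])
    parser.add_argument("--config", help="path to a JSON file with user inputs")
    parser.add_argument("--streams", nargs="*", default=[])
    args = parser.parse_args(argv)
    config = {}
    if args.config:
        with open(args.config) as f:
            config = json.load(f)
    for message in run(args.command, config, args.streams):
        print(json.dumps(message))  # one JSON document per line
```

Calling `main(["spec"])` prints the toy specification as a single JSON line. Because every operation emits plain JSON, a test harness can drive the program without knowing anything about how it is implemented.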

Describe the required user inputs

For example, the Mailchimp connector would declare it needs a valid Mailchimp API key to run. This description of inputs is known as the Connector’s Specification.

A connector which correctly implements this operation then should behave as follows:

- If a user asks the data connector for its specification, it should return a valid JSON description of the possible input parameters.
- The returned JSON should use only data types allowed in Airbyte’s type system.

Verify the user inputs

If I input a valid Mailchimp API key, the connector should tell me that it can use that API key to function correctly. Otherwise, it should give me a comprehensible error message that says I need to provide a valid API key before I can proceed. This verification of user inputs is known as the “Connection Check operation”.

Some correct behaviors one would expect then are: if I provide an incorrect set of user inputs to a connector (e.g., an invalid API key, or no API key at all), it should not allow me to perform any operations until I’ve provided a correct set of inputs. And if I provide a correct set of inputs, the check connection operation should succeed. In addition, the connector should be able to use these inputs to read data from the underlying source.
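These expectations can be phrased as a black-box check that works against any connector. The sketch below assumes a hypothetical `check` callable that takes a config dict and returns a `(succeeded, message)` pair; that signature, the `verify_check_behavior` helper, and the `toy_check` stand-in are invented for illustration.

```python
def verify_check_behavior(check, valid_config, invalid_configs):
    """Return a list of problems found in a connector's connection check.

    `check` is assumed to take a config dict and return a tuple of
    (succeeded: bool, message: str).
    """
    failures = []
    ok, _ = check(valid_config)
    if not ok:
        failures.append("valid config was rejected")
    for bad in invalid_configs:
        ok, message = check(bad)
        if ok:
            failures.append(f"invalid config accepted: {bad!r}")
        elif not message.strip():
            failures.append(f"rejection without an error message: {bad!r}")
    return failures


def toy_check(config):
    # Stand-in for a real connector; the accepted key is made up.
    if config.get("api_key") == "valid-key":
        return True, ""
    return False, "Please provide a valid API key."
```

For example, `verify_check_behavior(toy_check, {"api_key": "valid-key"}, [{}, {"api_key": "nope"}])` returns an empty list, meaning no problems were found.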

Describe the output schema

For example, the Mailchimp API might output a table called “campaigns” which contains two columns, “campaign_id”, an integer, and “campaign_name”, a string. This is helpful when writing data to a typed database like Snowflake or Postgres which needs to know the type of each column in each table. This operation is called “schema discovery”.

Export the source data

When given a valid user configuration and a selection of which tables to export, the connector should stream the source data. This is called the read operation.

Correct behaviors then include: when exporting data, the connector should only output data from the tables the user asked for. Also, all the records output should have the same data types described in the schema discovery phase.
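Both read-time behaviors can be checked mechanically. The following is a minimal sketch, assuming records arrive as dicts with `stream` and `data` keys and that schemas map column names to simple type names; these shapes, the `PYTHON_TYPES` table, and the `verify_read` helper are assumptions for this example rather than Airbyte's real formats.

```python
# Illustrative mapping from schema type names to Python types.
PYTHON_TYPES = {"integer": int, "number": (int, float), "string": str, "boolean": bool}


def _type_matches(value, declared):
    if isinstance(value, bool):  # bool is a subclass of int in Python
        return declared == "boolean"
    return isinstance(value, PYTHON_TYPES[declared])


def verify_read(records, schemas, requested):
    """Return problems found in exported records.

    Assumes null values are acceptable for any column.
    """
    problems = []
    for record in records:
        stream, data = record["stream"], record["data"]
        if stream not in requested:
            problems.append(f"unrequested table: {stream}")
            continue
        columns = schemas.get(stream, {})
        for column, value in data.items():
            if column not in columns:
                problems.append(f"{stream}.{column} is not in the schema")
            elif value is not None and not _type_matches(value, columns[column]):
                problems.append(f"{stream}.{column} should be {columns[column]}")
    return problems
```

With the “campaigns” schema from the earlier example, a record whose `campaign_id` is the string `"1"` would be reported, as would any record from a table the user did not select.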

Beyond correctness: testing best practices

The above test cases are meant to guarantee correctness, but we don’t have to constrain ourselves to just that. We can even test for general best practices and good patterns. For example, getting the connector’s specification, running connection checks, or performing schema discovery are all user-facing operations. When they run, a user is typically waiting at the keyboard or UI for their results. So they need to be as fast as possible. While that’s not a requirement for “correctness” per se, we can still say that we’d like every connector to run these operations in under 5 seconds, unless there is a strong reason it cannot.
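A time budget like this is simple to express as a reusable check. The sketch below uses a hypothetical `timed` helper with a configurable limit; it measures how long an operation took and reports whether it stayed within budget, but it does not interrupt an operation that runs over.

```python
import time


def timed(operation, limit_seconds=5.0):
    """Run `operation` and report (result, elapsed_seconds, within_limit)."""
    start = time.perf_counter()
    result = operation()
    elapsed = time.perf_counter() - start
    return result, elapsed, elapsed <= limit_seconds
```

A connector with a strong reason to be slower could be given a larger `limit_seconds` instead of being exempted from the check entirely.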

One way to verify these behaviors and best practices would be to tell every connector developer to implement them as part of unit & integration testing. But that would be incredibly inefficient for many reasons. First, it’s a drag on velocity. Second, it would result in lower quality tests, because it’s unlikely that every connector developer will have thought of every edge case. Finally, it does not snowball lessons from one connector into all the others: if tomorrow we discovered a new test case all connectors must pass, there is no easy way to propagate that test case to all connectors.

In short, implementing test cases individually for each connector would be an extremely low leverage way to reach thousands of supported connectors in the Airbyte ecosystem. To solve the problems above, we’ve developed Connector Acceptance Tests (CATs).

How to write CATs

In short, CATs are a set of test suites which are:

- Modular: you can select which tests you want to run, and how many times with which inputs.
- Language agnostic: they work regardless of the connector’s implementation language.
- Silo agnostic: it doesn’t matter what data silo (DB, API, etc.) the connector is talking to.
- Free of dependencies apart from Docker.
- Set up with a single YAML file.

So let’s say you just wrote an Airbyte connector and want to verify it works reasonably well. What does running CATs actually look like, and what guarantees does it give you? First, you need to add a YAML file. This YAML file is autogenerated for the developer when they use the Connector Development Kit (CDK) code generator. The developer only needs to fill in the pregenerated YAML file and provide some configuration files. Here’s an example YAML file from the Square source connector, annotated with comments to make it easier to read.

Not counting the comments, that is 26 lines of YAML, which is fairly short.

To run the tests, the developer just runs `docker run airbyte/source-acceptance-test --acceptance-test-config .` from the connector module’s root directory, mounting the directory that contains the YAML configuration file. For example, if a connector lives in `~/code/airbyte/connectors/salesforce`, they would run the command from that directory, assuming that’s where the YAML lives (which is the convention for all Airbyte connectors).

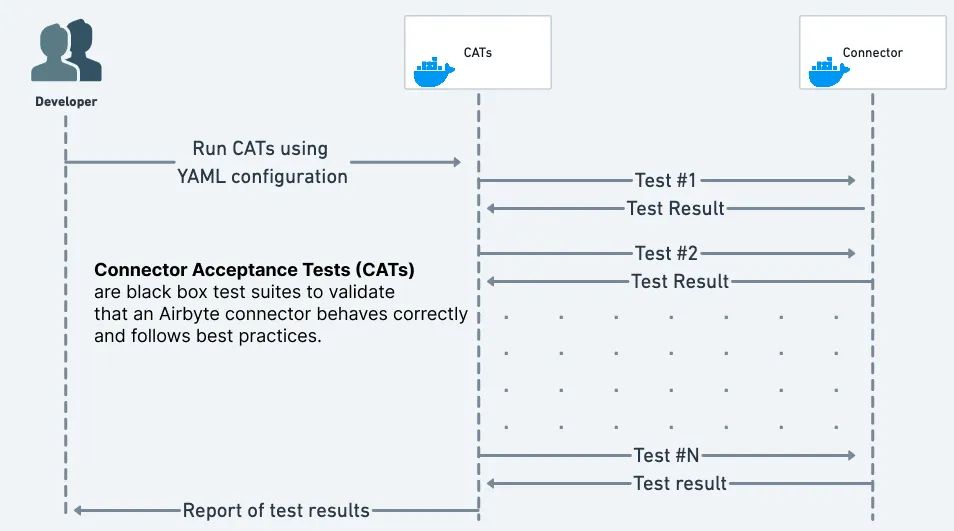

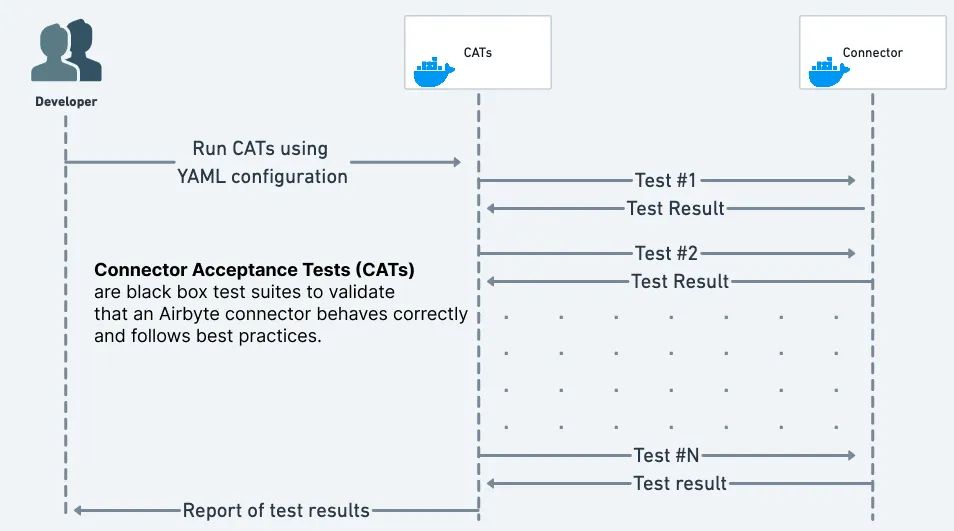

The sequence diagram below visually illustrates what happens when you run the command above. In a nutshell, the CATs look at the configuration YAML provided by the developer, run the relevant tests against the connector image, then output a test report to the developer:

If some tests fail, the appropriate CAT will display an error message explaining what went wrong to tell the developer what they need to fix.

Here are some of the assertions that CATs run for sources, along with some of the additional verifications the developer can configure:

- All output from the connector is valid JSON.
- The connector’s specification and discovered schema only use data types allowed by the Airbyte type system.
- Inputs described in the connector’s specification all contain “human readable” descriptions and titles for the input parameters.
- The Spec, connection check, and schema discovery operations run within a “reasonable” time (e.g., 5 seconds). The developer can also set custom values for what a “reasonable” runtime is.
- The connection check operation succeeds when provided valid configs, and fails with a useful error message when the provided configuration is invalid.
- The connector exports records only from the tables requested by the user.
- All exported records match the schema described by the schema discovery phase.
- When running in incremental export mode, only new records are exported.
- When running in full refresh mode, the results of two full refresh exports are identical.

Additionally, for all data export operations, the developer can optionally verify that:

- A specific group of records is present in the output of the connector’s data export.
  - They can exclude “volatile” fields (fields whose value changes every time the connector runs) such as “the timestamp of when the record was read” from the comparison.
  - They can specify whether those records are the only set of records output from the connector.
  - They can specify whether those records must be read in the same order specified in the test configuration, or whether order is unimportant.
  - They can also specify whether those records could contain additional fields/columns not specified in the test configuration.
- Every column in the output records contains at least one non-null value. This is helpful to verify that the declared schema is correct, and that the sandbox test account being used for CATs accurately reflects a real world customer production account.

As you can see, this is a huge amount of assurance for the developer to get out of the box, all for the small price of 26 lines of YAML!
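The optional record checks listed above could be sketched roughly as follows. The function names and keyword arguments (`ignore_fields`, `exact`, `ordered`, `allow_extra_fields`) are hypothetical and only mirror the options described in the text; they are not the actual CAT configuration keys.

```python
def _strip(record, ignore_fields):
    return {k: v for k, v in record.items() if k not in ignore_fields}


def _matches(actual, expected, allow_extra_fields):
    if allow_extra_fields:
        return all(k in actual and actual[k] == v for k, v in expected.items())
    return actual == expected


def records_present(actual, expected, ignore_fields=(), exact=False,
                    ordered=False, allow_extra_fields=False):
    """Check that every expected record appears in the actual output."""
    actual = [_strip(r, ignore_fields) for r in actual]
    expected = [_strip(r, ignore_fields) for r in expected]
    if exact and len(actual) != len(expected):
        return False
    if ordered:
        # Expected records must appear in the output in the given order.
        remaining = iter(actual)
        return all(
            any(_matches(a, e, allow_extra_fields) for a in remaining)
            for e in expected
        )
    # Greedy matching: enough for a sketch, though it can miss a valid
    # pairing when allow_extra_fields makes several matches possible.
    pool = list(actual)
    for e in expected:
        hit = next((a for a in pool if _matches(a, e, allow_extra_fields)), None)
        if hit is None:
            return False
        pool.remove(hit)
    return True


def empty_columns(records, columns):
    """Return the columns that never hold a non-null value."""
    return [c for c in columns if all(r.get(c) is None for r in records)]
```

Excluding a volatile field such as a read timestamp is then just a matter of passing its name in `ignore_fields`, so two otherwise identical records compare as equal.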

The limitations of CATs

While CATs have been extremely valuable, they also have limitations.

No replacement for unit and integration tests

For example, if a connector has a bug which causes it to skip every other page in a paginated REST API response, then that will be hard for CATs to catch. In addition, if a connector’s output must satisfy a connector-specific property (e.g., the sum of two columns must always add up to 42), then that would be hard to express to CATs. Black box testing is not enough to test connector-specific behavior, and thus unit tests and integration tests are still required.
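The pagination case shows why: every record a buggy connector emits can still be valid JSON that matches the schema, so only a test that knows what the source contains will notice the gap. Here is a minimal sketch of such a unit test, assuming a hypothetical `get_page` callable that returns a list of records for a page number and an empty list when there are no more pages.

```python
def fetch_all(get_page):
    """Collect records from a paginated API, one page at a time."""
    records, page = [], 1
    while True:
        batch = get_page(page)
        if not batch:
            return records
        records.extend(batch)
        page += 1  # a buggy `page += 2` here would skip every other page


def test_fetch_all_reads_every_page():
    pages = {1: [{"id": 1}], 2: [{"id": 2}], 3: [{"id": 3}]}
    result = fetch_all(lambda n: pages.get(n, []))
    assert [r["id"] for r in result] == [1, 2, 3]
```

With the buggy increment, the same test would see only ids 1 and 3 and fail, which is exactly the signal a black box suite cannot give without knowing the expected contents.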

No support for custom multi-stage testing operations

Let’s say you want to write a test for the Google Sheets connector which:

1. Writes some values to a Google Sheet.
2. Runs the connector to export those values.
3. Deletes some values from the Google Sheet.
4. Runs the connector again to verify it correctly read the data without the deleted values.

There is currently no way of expressing that kind of test with CATs.
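Written by hand, a multi-stage test like this might look like the sketch below. To keep it self-contained it uses an in-memory `FakeSheet` and an `export` function standing in for the real Google Sheet and the connector's read operation; both are invented for illustration.

```python
class FakeSheet:
    """In-memory stand-in for a spreadsheet."""

    def __init__(self):
        self.cells = {}

    def write(self, cell, value):
        self.cells[cell] = value

    def delete(self, cell):
        del self.cells[cell]


def export(sheet):
    """Stand-in for running the connector's read operation."""
    return [{"cell": c, "value": v} for c, v in sorted(sheet.cells.items())]


def test_deleted_values_are_not_exported():
    sheet = FakeSheet()
    sheet.write("A1", "alpha")  # stage 1: write some values
    sheet.write("A2", "beta")
    first = export(sheet)  # stage 2: run the connector
    assert [r["value"] for r in first] == ["alpha", "beta"]
    sheet.delete("A2")  # stage 3: delete some values
    second = export(sheet)  # stage 4: run again and compare
    assert [r["value"] for r in second] == ["alpha"]
```

The test interleaves changes to the source with connector runs, which is the part a single static test configuration cannot describe.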

Propagating new test requirements can be a large effort

If tomorrow we required that all user-facing operations run in under 1 second, then most connectors would fail that test, and their builds would be broken. We would then need to spend a non-trivial amount of time improving the performance of those operations in each connector until they pass those tests and their builds return to green.

We typically deal with this limitation in two ways. First, we try to make improvements in the upstream shared tooling (i.e., the Airbyte Connector Development Kit) on which most connectors are built. If we can find a way to make this code change once, then a connector developer just needs to upgrade to the latest CDK version to pick up the improved functionality. Second, we make new test cases opt-in for a grace period so that developers can catch up to the new requirements, then make them opt-out, and finally remove the ability to opt out altogether.
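The staged rollout can be modeled as a small decision rule. This is only a sketch of the idea: the `Rollout` enum and `should_run` function are hypothetical names, and the connector's setting is represented as `True` (opted in), `False` (opted out), or `None` (unset).

```python
from enum import Enum


class Rollout(Enum):
    OPT_IN = 1      # grace period: runs only for connectors that ask for it
    OPT_OUT = 2     # runs by default, connectors may still skip it
    MANDATORY = 3   # runs for every connector


def should_run(stage, connector_setting=None):
    """Decide whether a new test case runs for a given connector."""
    if stage is Rollout.OPT_IN:
        return connector_setting is True
    if stage is Rollout.OPT_OUT:
        return connector_setting is not False
    return True
```

Moving a test case from one stage to the next changes which connectors it runs against without requiring any change to the connectors themselves.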

Conclusion

Overall, we love CATs and have come to see them as an essential aspect of connector development, as they allow us to black box test all available connectors while developing, fixing, or refactoring them. They have allowed us to develop with very high leverage and quality because they:

- Require trivial effort to set up and work with any connector.
- Provide a great deal of assurance that the connector is well behaved.
- Make it easy to propagate new learnings across connectors. For example, when we realized that we should ensure user-facing operations run in only a few seconds, we just made one code change in CATs, ran it against all connectors, observed which ones failed, then fixed them. However, this property of CATs does have a downside which we describe in the limitations section.

Overall, CATs are a great example of Airbyte’s values as an engineering and product organization because they allow us to develop high quality products in a high leverage way while providing a great developer experience. We’re excited to continue expanding CATs with new features and test cases, as well as creating new tools that make Airbyte the world’s best platform for developing connectors.

If any of this sounds interesting to you, we’re a very open platform and would love to hear your thoughts! Visit our GitHub repository or Slack channel to request features and improvements or pick our brains about what you’re up to. And of course, if this sounds exciting enough that you’d be interested in working with us, we’re hiring!