As a data engineer, I always feel less confident about the quality of data I handle than the quality of code I write. Code, at least, I can run it interactively and write tests before deploying to production. Data, I most often have to wait for it to flow through the system and be used to encounter data quality issues. And it's not only the errors that are raised. It's also this feeling that there are more unknown data quality issues than code bugs waiting to be discovered. But, is data quality a more complex problem to solve than code quality?

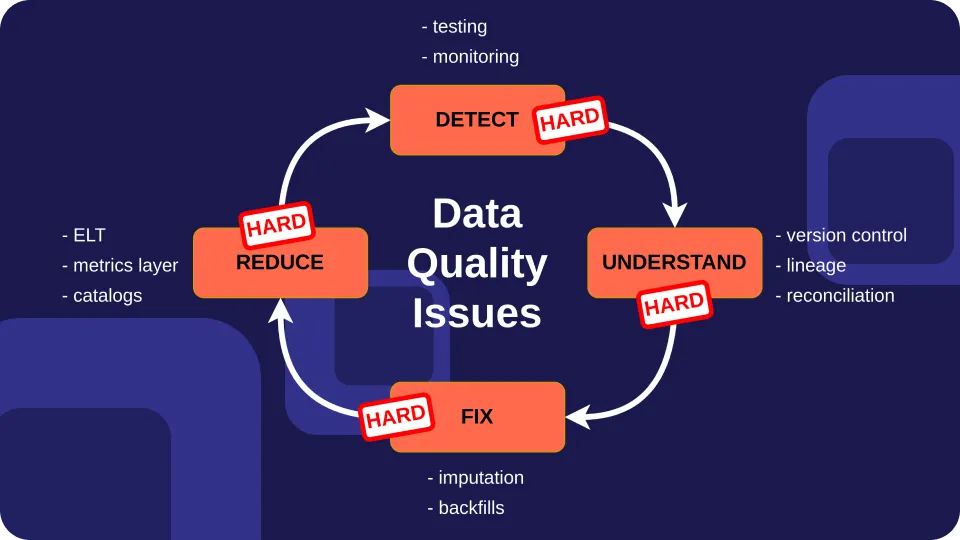

Code quality is the process of ensuring code meets expectations. Likewise, data quality is the process of ensuring data meets expectations . In this article, I want to abstract away from expectations (known as data quality dimensions when talking about data) as these will likely be different based on your usage. Instead, I discuss the different steps to handle data quality issues: detecting, understanding, fixing, and reducing quality issues . Then I argue why I find each one of these steps harder to implement when applied to data than when applied to code.

Why is detecting data quality issues hard? One agreed principle is that the number of data quality issues you encounter will be proportional to the size of your code base and data. Most data repositories will be several orders of magnitude bigger than any code repository. On top of that, you can deploy code up to a few times per day, but data can get updated in milliseconds . With more data changes, there is also a higher chance that you will experience a data quality issue.

There are three main ways to detect a data quality issue:

A business user reports an issue. A data test fails. Data monitoring raises an alert. First, your data needs a communication channel for users to report data issues. Don't underestimate this, or you will end up with more and more SQL code that patches data issues that should be fixed at the source. Then you can start adding tests to catch known data issues and data monitoring to get ahead of unknown data issues.

Testing data vs. testing code Data testing is the process of comparing a data asset to a set of expectations. Data expectations are hard coded as rules and run on production data . On the one hand, code tests run as part of your CI pipeline before updating your production code. It's common among software engineering teams to stage code, test, and validate it before releasing it to production. On the other hand, most data teams today focus their efforts on periodically testing data at rest on databases and warehouses. To have good test coverage in a codebase, software engineering teams may attempt to have a test suite that executes all lines of code, the myth of 100% test code coverage . What does it mean to have good test coverage of your data?

The number of data tests you can potentially write is proportional to the number of columns. You are not expected to write tests for each individual row, but instead, have a few tests for each column. For example, tests can check for missing values, that values fall within a range, or that the column values in one table match the column values in another table. You can use open-source testing libraries like Great Expectations , Soda , or dbt tests to test data.

One way to start your data quality journey is to identify the data models that are more used downstream. Of course, core models are likely to be the ones that will have a higher impact on the perception of users on the quality of your data. However, the ratio at which datasets change makes writing multiple tests for each column impractical, and many teams resort to data monitoring instead.

Monitoring data vs. monitoring code Data monitoring, also known as data observability, is the process of continuously collecting metrics about your data. For example, for each dataset, you can collect data about the number of rows, columns, and values of each. You can also collect metadata about the dataset, such as when it was last updated.

Metrics can be used to automatically create expectations from past data and help you surface unknown data quality issues. For example, if a table historically adds between five and ten thousand new rows every day, and suddenly one day it gets only a hundred new rows, monitoring will raise an alert.

The biggest issue many teams face with monitoring is alert fatigue, as false positives pile up. This issue is shared among cloud monitoring tools used by software engineering teams. On the one hand, cloud monitoring tools like Datadog mostly resort to collecting low-dimensional time-series data such as CPU consumption and API response times. On the other hand, data quality monitoring tools collect multivariate data between columns and tables. As a result, raising alerts from statistically analyzing multiple variables is harder than raising alerts from observing one metric in a time series.

Why is understanding data quality issues hard? When you get alerted by a data issue, your data may have already changed. You may need to check out a previous version of the data to debug the error. Any serious software development team version controls its codebase. Unfortunately, we cannot say the same for data repositories. Version controlling data assets is still a nice to have and hard to do . Data warehouses such as Snowflake and data lakes such as Databricks provide time-travel capabilities. Checking out a previous version of a single dataset is easy; the harder part remains consistently reverting all your affected data and code. With other data versioning tools like lakeFS that provide atomic rollbacks, you can only version data lakes.

Once you have found the data that raised the error, you need to find the source of the error. Unfortunately, navigating through data dependencies is far from trivial. OpenLineage provides a standard for data lineage collection but requires configuring a client for each component of your data stack, if they provide an integration.

After you have found the source of the error, you may want to understand when it was introduced and compare the data to a previous version. To compute differences between data sets, you need to resort to a data reconciliation tool like data-diff (recently open-sourced by Datafold) which integrates with a dozen data storage systems.

Compare all these to version controlling code, exploring code dependencies and visualizing code differences directly through your code editor with git. To me this area of data quality will benefit from consolidation.

Why is fixing data quality issues hard? What do you do after debugging a data quality issue? If the error is created by an intermediary model, then you can fix the code. Otherwise, data quality issues may originate in external systems. This introduces another challenge, data quality issues cannot always be fixed immediately, and in some cases, you won't even get to know the truth . However, even when your code depends on other libraries, most often you have access to the source code to patch it and submit a pull request.

When you catch a code error, you may halt the program and send an error code to the user. You may collect the stack trace and store it somewhere, but most often, you won't be required to re-execute the code that failed . For example, if someone visits your e-commerce website and tries to order an item and the payment fails, you send an error message to the user. When you fix the issue, you may email the user at best, but you won't have to reprocess all failed payments.

When detecting a data quality error, you may quarantine this data. Then, you can try to fix the data manually, use data imputation techniques or modify the code that generated the data error. Then you have to reprocess data from the source to update all downstream dependencies. Being able to reprocess old data (known as backfilling) without side effects depends highly on your availability as a data engineer to make your data processing code functional and idempotent.

"Backfilling is one topic that really shows the difference between a data engineer and a great data engineer. Probably because backfilling requires experience and patience. It's easy to run a pipeline, but when your pipeline should recompute or reingest data from the last 4 years, the stress it'll put on your system will be heavy." — Christophe Blefari on his Data Newsletter. Reducing data quality issues After going through the detect, understand, and fix loop a few times, you may ask yourself how you improve your data architecture to avoid going through this process so often. You can try to detect data quality issues coming from an external source as soon as they are ingested. However, most of your issues will likely be caused by failing systems and erroneous logic.

To improve code quality, you may refactor code to reduce the number of duplicated logic spread across the codebase. Generally, less code also means fewer tests to write and less overhead to navigate the code base. We could argue similarly that the number of data quality issues is proportional to the size of your data . But isn't more data always better?

You may not want to reduce the overall data you are ingesting, but you can reduce the number of intermediary data models you create . One current trend, the data mesh organization, advocates for decentralizing data ownership and democratizing data usage within organizations. This is easier said than done and is more an organizational change than a technological one. Data catalogs like Castor can help make data discoverable across the organization to avoid creating yet another dataset with the same information.

Two other trends to reduce the number of intermediary models are the switch from ETL to ELT and the metrics layer. On the one hand, ELT pipelines move transformations to the right to happen in your data warehouse or lake after you have stored the raw data. In the past, an ETL pipeline was sometimes created to serve a single dashboard (the extreme opposite). On the other hand, the metrics layer moves transformations to the left from BI tools to the data warehouse . But the metrics layer, is only one way to abstract transformation logic. The semantics layer (a broader concept) remains full of open questions.

“A core motivation for a semantic layer is to provide a place to define concepts, incl. (but not limited to) metrics, in a way that does not require you to repeat yourself in the definitions. Modelling semantically involves defining the right building blocks that allow you to define higher-level concepts based on them.” — From Down the semantic rabbit hole by JP Monteiro. Amid evolving trends like the shift from ETL to ELT and the emergence of the metrics layer, ETL testing stands as a critical process ensuring the integrity and accuracy of data transformations, regardless of the chosen approach or architectural nuances.

Here is one last unpopular advice to reduce the volume of intermediary models: ingest and transform data less frequently . You may think that more data, more dashboards, and fresher data are always better, but be aware that all of these bring their own problems, such as increasing alert fatigue when running more data ingestion and processing jobs.

Do you need to ingest data every minute if you only consume data daily? Recently, Benn Stancil coined the term reverse orchestration, where the frequency of ETL/ELT jobs is inferred back from the consumption of the data and SLAs.

“A frequently-updating data ingestion task, for example, might periodically fail and self-correct, all within the bounds of a latency SLA. Alerting people about the failed task teaches us to ignore these warnings, and divorces system problems and internal failures from actual problems that affect whether or not data can be trusted.” —From Down with the DAG by Benn Stencil Making data quality an easier problem to solve As we have seen, data changes faster than code and contains more dependencies, making it easier to introduce new quality issues. You can take some steps to reduce the impact of data quality issues, such as adding data testing and monitoring. Sometimes, this is all you can do to handle data quality issues coming from external sources.

But what can you do to reduce the number of quality issues that come from your logic? First, you can educate data producers to reduce the number of intermediary data models, starting with loading raw data to a central repository and doing most of the transformations there. While this will reduce the number of models, it's not a silver bullet as it will also increase the dependencies between your pipelines and requires a set of best practices.

The data quality ecosystem is less mature than code quality. For example, debugging data quality issues remain the step where data engineers spend the most time. Data engineers need to juggle between different tools to version control data, navigate data dependencies and compare datasets; while you can do all this through your code editor when debugging code.

Finally, data organizations store data over different systems (apps, databases, data lakes, data warehouses) while most code is stored on the same code hosting system (Github). This makes it harder for data quality tools (testing, monitoring, lineage, catalogs) to support integrations for all your systems. To simplify integrating all layers of your data stack, we need more data standards for all three core data areas: data storage, data movement, and data transformation.

At Airbyte, we are working on solving the long tail of integrations problem by providing open-source connectors for moving data . When it comes to data storage systems, open data lake table formats (Delta Lake, Iceberg, Hudi) have the potential to simplify integrations between the different layers of your data stack but still need wider adoption. We are still missing a standard for data transformation. Today, there is little transformation logic shared between companies and this is where most of the data quality issues generate from.

Discuss the article on LinkedIn .