Note: this article is part of a series of article about Airbyte's Postgres connector: Postgres Replication Performance Benchmark: Airbyte vs. Fivetran and Top lessons learned when upgrading Postgres to read from very large tables .

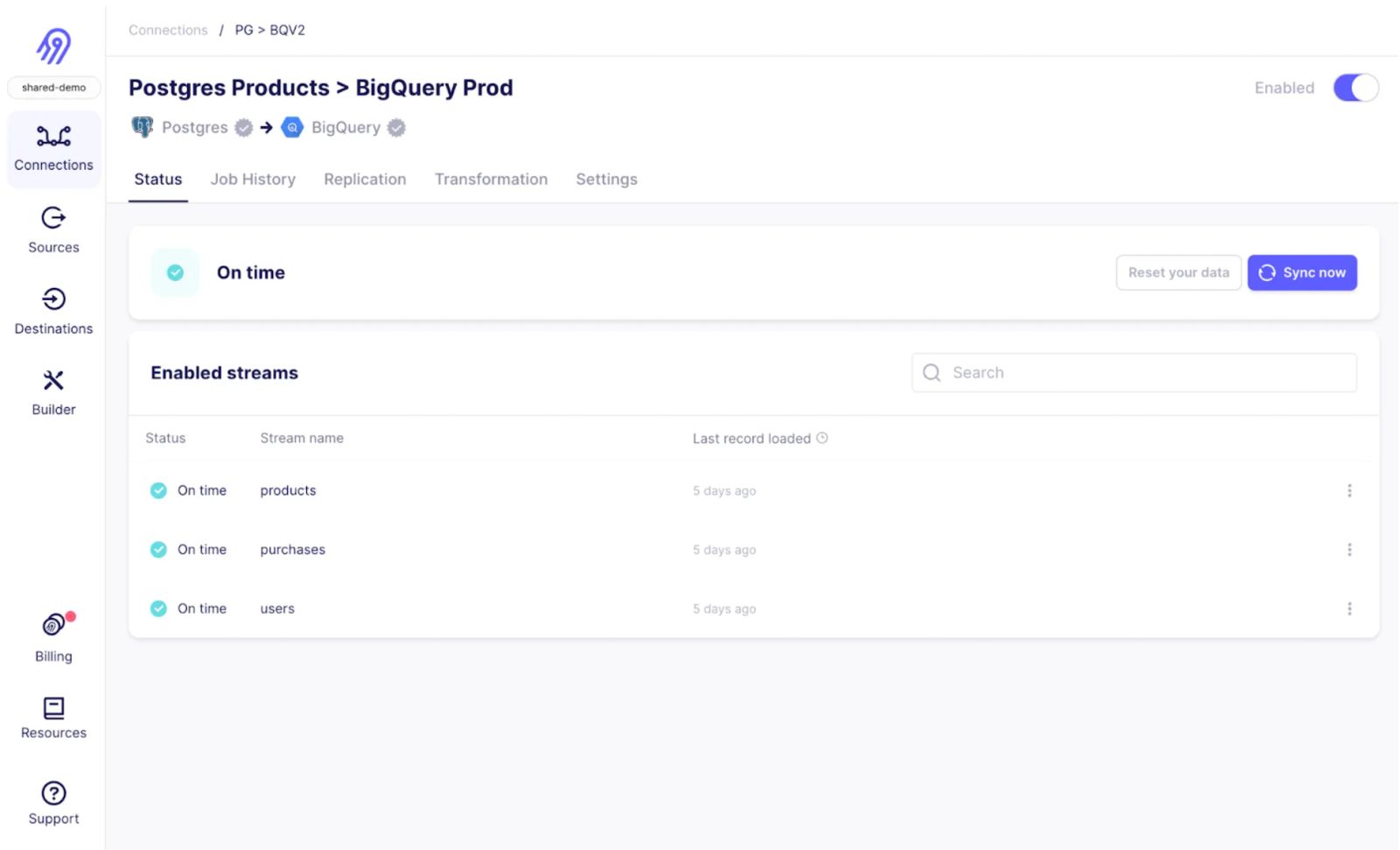

fWe at Airbyte continue to pride ourselves at being the only tool you ever need to move data. As part of that, we are committed to continuously improving the connectors you rely on most. We're excited to share with you a host of improvements to our Postgres database source connector. Here's a digest of how the experience replicating data from Postgres has improved in the last few months:

1. Reliable Large Table Initial Snapshots : Our connector can now replicate datasets of any size. We’ve reduced the reading of data into smaller sub-queries and improved syncs to be resumable after any failure, ensuring that syncs will always pick up where they left off should issues arise.

2. Easy to Use Incremental Updates using xmin : While CDC continues to be the most reliable method of incrementally updating your largest Postgres tables (500 GB+), configuring the WAL log is time consuming and error prone. We can now automatically detect incremental updates to your tables using the xmin system column, which reads data in the natural order of the database for better performance.

3. Unprecedented Throughput : Our Postgres Source connector now boasts throughput up to 11 MB per second (twice higher than most ELT companies), enabling speedier reads of terabytes of data, and improved support for frequently updated tables quickly increasing WAL log size.

Snapshot Terabytes of Data at a Time As you know, the initial backfill of massive Postgres databases is often the most time consuming and data intensive task completed by Airbyte. We want to ensure that this step of your journey is as easy as possible, and resilient against typical interruptions that can interfere with any long running job. As Rodi details in his engineering deep dive , we’ve introduced the following features to ensure that initial Postgres snapshots are always resumable:

Checkpointing for all initial snapshots in any Postgres database : If a sync is ever to be interrupted, Airbyte can resume extracting data where it left off and not have to go back to the beginning. This is accomplished by leveraging how Postgres files are structured under the hood, and a few hidden columns, such as Current Tuple ID (ctid) .Chunking database reads : We now break down table reads into a number of smaller sub-queries which can run independently, called chunks. This reduces the number of failures that arise from network issues or strain on your database.With this, Postgres syncs will always progress towards completion, regardless of any hurdles they may encounter.

Production Ready Incremental Updates with CDC or xmin Following a large initial snapshot, Airbyte keeps your data fresh by incrementally updating the data in your destination data warehouse. Our support for CDC, available since general availability of our Postgres connector , continues to benefit from upgrades behind the scenes - from now using the above method for initial database snapshots, to using more reliable cursors, and more.

As you know, configuring CDC for Postgres can be error prone due to complexities around the WAL log. We previously allowed for users to define their own cursor, such as updated_at or created_at, for incrementally replicating updates to the destination. As Rodi details in his engineering deep dive , this approach required us to rearrange data to detect updates, which is inefficient and fails for tables of medium sizes.

To provide you with an easy to use Postgres connector while ensuring a reliable experience, we’ve introduced a new option for replicating updates using the xmin system column . Xmin replication enables syncing new or updated rows without explicitly choosing a cursor field, and is available in all Postgres databases. Xmin is now the recommended solution for replicating Postgres tables less than 500 GB in size where you don’t need to capture deletes.

Explore New Airbyte Functionality Our improved Postgres connector now provides you with a more flexible and reliable experience replicating data from Postgres databases. But in parallel, the team has iterated on many other table stakes features in the past few months:

Automatically propagating new schemas without data resets Selecting columns to exclude prior to replicating Improved documentation for hosted versions of Postgres, such as Cloud SQL for Postgres If you are interested in getting a deeper dive of how these features work under the hood, check out Rodi’s engineering blog post here . To get started, use the latest version of Airbyte’s source-postgres .

Stay tuned for more information on these releases in the coming months. As always, you can consult our public roadmap for more detail on what’s coming next! To learn, checkout this amazing article about Postgres Replication .