In our relentless pursuit to offer robust and speedy data replication, we are thrilled to announce significant upgrades to, and the certification of, our MongoDB source connector. The revamped connector now offers a Change Data Capture (CDC) mode, much faster replication, and faster schema discovery, ensuring a seamless data synchronization experience.

Supercharged Sync Speed: The enhanced MongoDB connector boosts replication speed to over 10 MB per second, so your data migrations complete swiftly, reducing downtime and making data available to your analytics sooner.

Support for large collections: The connector can now replicate collections of any size (1 TB+). We’ve broken the reading of data into smaller sub-queries and made syncs resumable after any failure, so that syncs pick up where they left off should issues arise.

Change Data Capture (CDC): The latest iteration introduces a robust CDC mode, allowing for incremental syncs with minimal performance impact on your source database. By capturing and replicating data changes promptly, the CDC mode keeps your replicated data current, enhancing your data-driven decision-making process.

Performant schema discovery: Schema discovery is about understanding the structure of your data (its fields and how they are nested), then mapping these to types for smooth replication with Airbyte. Our updated MongoDB connector makes this process simpler and faster.

Deep Dive into our Sync Speed Improvements

NOTE: At the end of the article, we’ve shared more details on the dataset and connector versions we used to benchmark the following improvements.

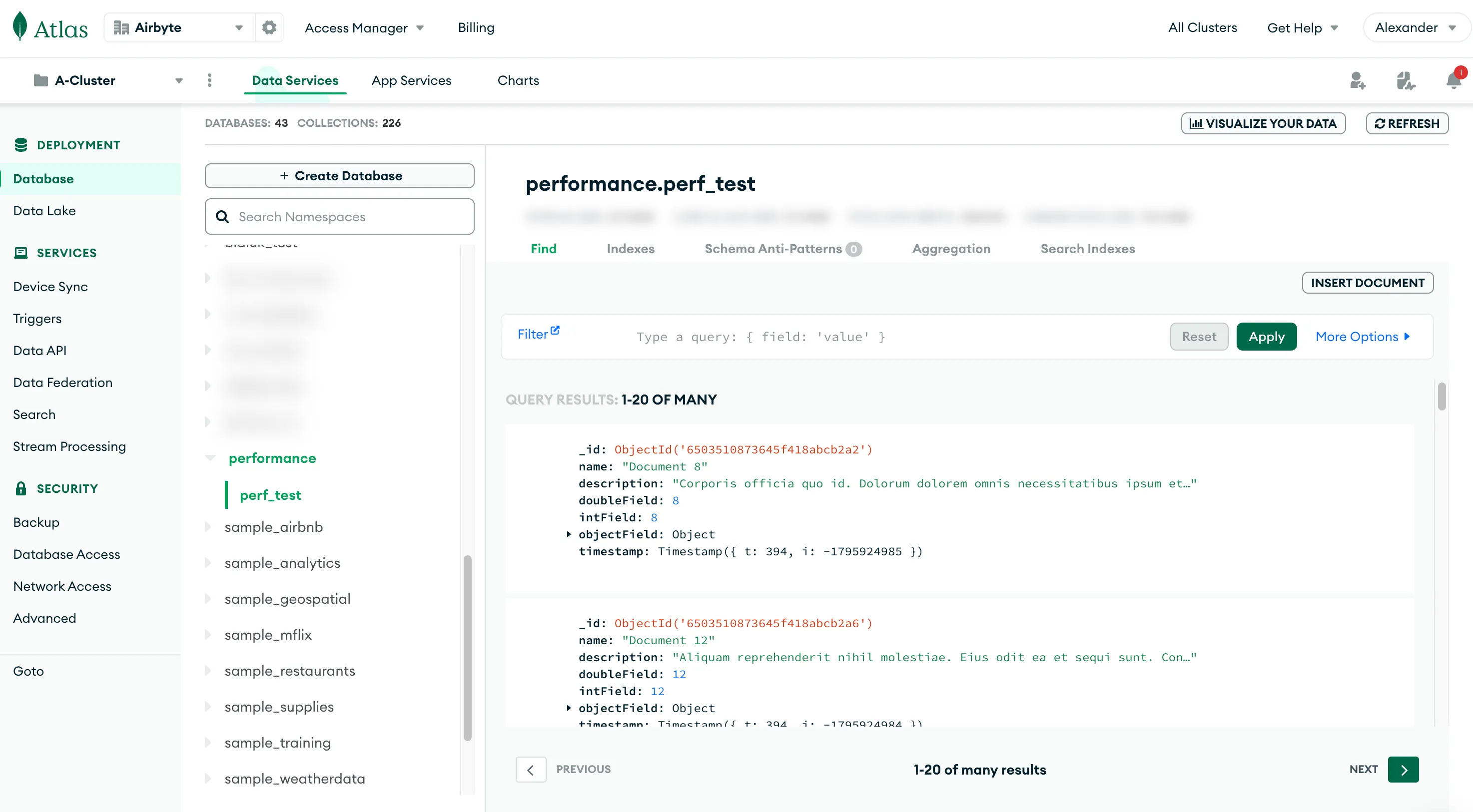

Replicating a 36 million document dataset from MongoDB to Snowflake with the new version of our MongoDB source connector resulted in an effective replication rate just above 10 MB/s. We moved over 77 GB in just over 2 hours. This is a significant throughput improvement relative to the previous version of the connector. The main factors contributing to the increased speed are:

We’ve improved the query used during the initial snapshot to use a predictable cursor (_id).

We now defer the deserialization of a document, which saves time and improves throughput. The previous connector used a “Document” object, which deserializes all of the data up front even if it is never accessed, e.g. after selecting a subset of fields to be synced. We switched to “BsonDocument”, which deserializes each field only when it is read from the object.

Easily Sync Terabyte-Sized Collections

As you may be aware, the initial backfill of substantial collections is often the most time-intensive and data-heavy task undertaken by Airbyte. Our aim is to simplify this phase of your journey, making it resilient against the common disruptions that could impede any long-running job. Much like the enhancements made for Postgres and MySQL, we've rolled out the following features to ensure that initial MongoDB snapshots are consistently resumable:

Checkpointing for all initial snapshots in any MongoDB database: If a sync is interrupted, Airbyte can now pick up right where it left off, eliminating the need to start from scratch. This is achieved by harnessing the underlying file structure of MongoDB.

Chunking database reads: Collection reads are now segmented into smaller, independent sub-queries, referred to as chunks. This approach minimizes the likelihood of failures stemming from network glitches or database stress.

With these improvements, MongoDB syncs are designed to advance steadily towards completion, irrespective of any challenges that may arise. Combined with the above speed improvements, Airbyte is better suited than ever to sync any collection in your database.
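To make the interplay of chunking and checkpointing concrete, here is a minimal sketch in Python. It is not the connector's implementation: the collection is simulated with an in-memory list, and the names `read_chunk`, `snapshot`, and the `checkpoint` dictionary are hypothetical. It assumes documents are read in ascending `_id` order and that the last `_id` seen is persisted after every chunk.

```python
from typing import Any, Callable, Dict, List, Optional

Document = Dict[str, Any]


def read_chunk(collection: List[Document], last_id: Optional[int],
               chunk_size: int) -> List[Document]:
    """Return up to chunk_size documents with _id greater than last_id, in _id order.

    Stand-in (an assumption) for a driver query along the lines of
    find({"_id": {"$gt": last_id}}).sort("_id", 1).limit(chunk_size).
    """
    ordered = sorted(collection, key=lambda doc: doc["_id"])
    if last_id is not None:
        ordered = [doc for doc in ordered if doc["_id"] > last_id]
    return ordered[:chunk_size]


def snapshot(collection: List[Document], checkpoint: Dict[str, Any],
             chunk_size: int, emit: Callable[[Document], None],
             fail_after_chunks: Optional[int] = None) -> None:
    """Copy the collection chunk by chunk, saving the last _id after each chunk.

    fail_after_chunks only exists to simulate an interruption in this sketch.
    """
    chunks_done = 0
    while True:
        if fail_after_chunks is not None and chunks_done >= fail_after_chunks:
            raise ConnectionError("simulated network failure")
        chunk = read_chunk(collection, checkpoint.get("last_id"), chunk_size)
        if not chunk:
            checkpoint["done"] = True
            return
        for doc in chunk:
            emit(doc)
        # Persisting the position here is what makes the snapshot resumable.
        checkpoint["last_id"] = chunk[-1]["_id"]
        chunks_done += 1


# Usage: the first attempt fails after two chunks, the second one resumes.
source = [{"_id": i, "value": i * i} for i in range(10)]
synced: List[Document] = []
state: Dict[str, Any] = {}
try:
    snapshot(source, state, chunk_size=3, emit=synced.append, fail_after_chunks=2)
except ConnectionError:
    pass  # state["last_id"] now points at the last fully emitted chunk
snapshot(source, state, chunk_size=3, emit=synced.append)
```

Because the checkpoint only advances once a whole chunk has been emitted, the retry re-reads nothing that was already delivered and skips nothing that was not.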

Production Ready Incremental Updates with CDC

Upon completion of the initial load, Airbyte transitions to a CDC-based mode for executing incremental syncs. CDC brings a wealth of advantages over alternative sync methods, notably its capacity to track modifications to both data and database schema, all while exerting minimal extra load on the database itself.

As with other databases, we built logic into the MongoDB connector to automatically switch away from checkpointing by primary key after the initial snapshot. The primary key load is useful only for the initial sync. After that point, we need to transition to another method that syncs only the data that has changed. Airbyte taps into the change streams feature of a MongoDB replica set to incrementally capture inserts, updates, and deletes via a replication plugin. The use of change streams is part of what makes incremental syncs significantly faster than with the previous MongoDB connector, as events are now pushed to Airbyte.

The transition between the initial snapshot and the following incremental syncs is facilitated using the resume token from the Oplog.
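The sketch below illustrates the idea of resuming from a saved token and applying change events to a replica. It is a simplified simulation, not the connector's code: the event dictionaries only mimic the general shape of MongoDB change events (`operationType`, `documentKey`, `fullDocument`, `updateDescription`), resume tokens are modelled as plain integers even though real tokens are opaque values handled by the server, and only top-level fields are updated. The function names and the `state` dictionary are hypothetical.

```python
from typing import Any, Dict, Iterable

Event = Dict[str, Any]
Replica = Dict[Any, Dict[str, Any]]


def apply_change(replica: Replica, event: Event) -> None:
    """Apply one insert, replace, update, or delete event to the replica."""
    op = event["operationType"]
    key = event["documentKey"]["_id"]
    if op in ("insert", "replace"):
        replica[key] = dict(event["fullDocument"])
    elif op == "update":
        doc = replica.setdefault(key, {"_id": key})
        changes = event["updateDescription"]
        doc.update(changes.get("updatedFields", {}))
        for field in changes.get("removedFields", []):
            doc.pop(field, None)
    elif op == "delete":
        replica.pop(key, None)
    # Any other event type is ignored in this sketch.


def incremental_sync(replica: Replica, stream: Iterable[Event],
                     state: Dict[str, Any]) -> int:
    """Apply every event after state["resume_token"] and advance the token.

    With a real change stream the server skips already-seen events when the
    saved token is passed back; the integer comparison below only simulates that.
    """
    applied = 0
    last = state.get("resume_token")
    for event in stream:
        if last is not None and event["_id"] <= last:
            continue
        apply_change(replica, event)
        last = event["_id"]
        state["resume_token"] = last
        applied += 1
    return applied


# Usage: the snapshot left one document and a token; two syncs follow.
replica: Replica = {1: {"_id": 1, "name": "a"}}
state = {"resume_token": 0}
events = [
    {"_id": 1, "operationType": "insert", "documentKey": {"_id": 2},
     "fullDocument": {"_id": 2, "name": "b"}},
    {"_id": 2, "operationType": "update", "documentKey": {"_id": 1},
     "updateDescription": {"updatedFields": {"name": "A"}, "removedFields": []}},
    {"_id": 3, "operationType": "delete", "documentKey": {"_id": 2}},
]
incremental_sync(replica, events[:2], state)  # applies events 1 and 2
incremental_sync(replica, events, state)      # applies only event 3
```

Storing the token only after an event has been applied means a crash between syncs replays at most the events that were not yet recorded, which is the property the handover from snapshot to CDC relies on.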

A potential avenue for enhancement lies in capturing changes from the change stream during the initial sync. This would avert a data re-sync in cases where the initial sync of a notably large MongoDB dataset takes long enough that the changes made in the meantime exceed the size limit of the Oplog configured in your MongoDB instance.

Looking Forward: Mixed Collections & Faster Connectors

We’ve also improved the performance of schema discovery: we automatically sample a configurable number of records, which are then used to determine the schema of your data and which fields are available to sync with Airbyte. This approach scales particularly well to large collections, but can at times be unpredictable for collections whose documents contain many mixed, ever-changing fields. This remains an area we intend to improve in future releases of this connector.
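As a rough illustration of sampling-based schema discovery, the following Python sketch infers field paths and their observed types from a random sample of documents. It is an assumption-laden simplification rather than the connector's algorithm: the function names, the type labels, and the seeded sampling are all hypothetical, and the documents are plain dictionaries instead of a live collection.

```python
import random
from typing import Any, Dict, List, Set

Schema = Dict[str, Set[str]]


def type_name(value: Any) -> str:
    """Map a Python value to a coarse type label (labels are illustrative)."""
    if value is None:
        return "null"
    if isinstance(value, bool):  # checked before int, since bool is an int subclass
        return "boolean"
    if isinstance(value, (int, float)):
        return "number"
    if isinstance(value, str):
        return "string"
    if isinstance(value, list):
        return "array"
    if isinstance(value, dict):
        return "object"
    return type(value).__name__


def collect_fields(doc: Dict[str, Any], schema: Schema, prefix: str = "") -> None:
    """Record every field path in doc, descending into nested objects."""
    for name, value in doc.items():
        path = f"{prefix}{name}"
        schema.setdefault(path, set()).add(type_name(value))
        if isinstance(value, dict):
            collect_fields(value, schema, prefix=f"{path}.")


def discover_schema(docs: List[Dict[str, Any]], sample_size: int,
                    seed: int = 0) -> Schema:
    """Infer field paths and observed types from at most sample_size documents."""
    rng = random.Random(seed)
    sample = docs if len(docs) <= sample_size else rng.sample(docs, sample_size)
    schema: Schema = {}
    for doc in sample:
        collect_fields(doc, schema)
    return schema


# Usage: two documents with different fields yield a merged schema.
docs = [
    {"_id": 1, "name": "a", "address": {"city": "x"}},
    {"_id": 2, "name": None, "tags": ["t"]},
]
schema = discover_schema(docs, sample_size=10)
```

The sketch also shows why mixed collections are hard: a field that appears only in documents outside the sample never makes it into the schema, so a smaller `sample_size` trades accuracy for speed.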

Looking forward, we recognize the potential of combining the significant performance gains outlined above with improved support for mixed collections. One opportunity would be for us to offer the option to replicate all of the fields of your collection to a single ‘data’ blob in the destination, and skip schema discovery entirely. On the speed front, replicating multiple data streams simultaneously is another huge opportunity for further performance improvements.

In parallel with these MongoDB improvements, the team has iterated on many other table-stakes features in the past few months:

Automatically propagating new schemas without data resets

Selecting columns to exclude prior to replicating

Typing & deduping to more reliably load data into your destinations

To stay tuned for future updates on this topic, you can, as always, consult our public roadmap for more detail on what’s coming next. Our ambition is to make Airbyte’s MongoDB source connector the best in the industry. To get started with our MongoDB connector, check out our documentation to learn more.

Appendix: Reference Dataset

The benchmarking was conducted with the latest versions of the MongoDB source and Snowflake destination connectors, which you can find in the changelogs in our documentation. At the time of writing, the newest MongoDB connector version was 1.0.1, and the Snowflake connector was on version 3.2.3.

To generate the dataset, you can run this script with the following command from within the Airbyte repository:

./gradlew :airbyte-integrations:connectors:source-mongodb-v2:generateTestData -PconnectionString={your connection string} -PdatabaseName={your database} -PcollectionName={your collection} -Pusername=airbyte_user -PnumberOfDocuments=36025630

This command will prompt you for the corresponding instance password. You can select any number of documents depending on the size of the test you are looking to run.
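When picking a value for numberOfDocuments, a back-of-the-envelope estimate can help. The sketch below scales the benchmark figures quoted in this article (36,025,630 documents, roughly 77 GB, roughly 10 MB/s) linearly. It assumes decimal units, that the generated documents have the same average size as in our run, and that throughput stays constant, so treat the results as rough guidance only; the function name `estimate` is hypothetical.

```python
from typing import Tuple

BENCHMARK_DOCUMENTS = 36_025_630       # -PnumberOfDocuments used for the benchmark
BENCHMARK_BYTES = 77 * 1000**3         # "over 77 GB", taken as 77 decimal gigabytes
REPLICATION_BYTES_PER_SECOND = 10 * 1000**2  # "just above 10 MB/s", taken as 10 MB/s


def estimate(number_of_documents: int) -> Tuple[float, float]:
    """Return (dataset size in GB, expected sync time in hours) for a test run."""
    average_document_bytes = BENCHMARK_BYTES / BENCHMARK_DOCUMENTS
    size_bytes = number_of_documents * average_document_bytes
    seconds = size_bytes / REPLICATION_BYTES_PER_SECOND
    return size_bytes / 1000**3, seconds / 3600


# Usage: the full benchmark and a smaller one-million-document test.
full_gb, full_hours = estimate(36_025_630)    # about 77 GB and about 2.1 hours
small_gb, small_hours = estimate(1_000_000)   # a bit over 2 GB, a few minutes
```

The full-size estimate of about 2.1 hours agrees with the "just over 2 hours" observed in the benchmark, which is a useful sanity check on the quoted rate.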
