Data Deduplication: Maximizing Storage Efficiency and Data Integrity

Data deduplication eliminates redundant data, conserving storage and streamlining operations. For modern data teams, duplicate data creates performance bottlenecks, generates compliance challenges, and transforms straightforward business intelligence into unreliable analytics that undermine strategic decision-making.

This data reduction technique addresses the exponential growth of data by identifying and removing duplicate copies within datasets. It reduces storage costs, optimizes data transfers, enhances data quality, and speeds up analytics and processing—making it essential for data-driven organizations navigating an increasingly competitive landscape.

In this article, we'll explain the need for data deduplication, the processes involved, different methods used, and the benefits it provides.

What Is Data Duplication and Why Does It Occur?

Data duplication, or data redundancy, refers to multiple copies of data within a database, system, or organization. This redundancy can occur for various reasons, including human error, using legacy systems, poorly managed data integration and data transfer tasks, and inconsistent data standards.

Organizations struggle with data quality issues, with duplicate data being a primary contributor to operational inefficiencies. The challenge becomes particularly acute in enterprise environments where data originates from multiple sources, each with different naming conventions, formatting standards, and data entry practices.

What Problems Does Duplicate Data Create?

Duplicate data can lead to several challenges that impact both technical operations and business outcomes:

- Inaccurate Information: Duplicate data can lead to inconsistencies and inaccuracies in databases. It becomes challenging to determine which copy of the data is the correct or most up-to-date version.

- Data Quality: Data quality is compromised, leading to errors in reports and analytics. It can erode trust in the data and affect decision-making.

- Increased Storage Costs: Storing redundant data consumes additional storage resources, which can be expensive. This is especially problematic in organizations dealing with large datasets where storage costs can scale unpredictably.

- Wasted Time and Resources: Identifying and resolving duplicates and ensuring data integrity can be time-consuming and resource-intensive, with investigation teams spending valuable time validating and cross-referencing data.

- Confusion and Miscommunication: Redundant data can cause confusion as multiple users may refer to different copies of the same data. This leads to miscommunication and errors, particularly when customer service representatives access incomplete customer records.

- Compliance and Security Risks: Duplicate data can increase the risk of data breaches and compliance violations. Inaccurate or inconsistent data can lead to regulatory issues and data privacy concerns, especially under regulations like GDPR where organizations must demonstrate complete control over personal data.

- Complex Data Analysis: Analyzing data with duplicates can be more complicated, as it may require deduplication efforts before meaningful insights can be drawn. Machine learning models trained on datasets containing duplicates can suffer from reduced accuracy and biased results.

How Does Duplicate Data Impact Business Operations?

The business impact extends beyond technical considerations to customer relationship management (CRM), where duplicate records create fragmented customer experiences and inconsistent service delivery. Sales teams whose CRM systems contain duplicate records must add manual duplicate checks to their standard processes, and they risk damaging customer relationships when representatives engage prospects without complete context.

To prevent these issues from occurring, data teams use data deduplication. By eliminating duplicate data, organizations can optimize storage resources and improve overall efficiency.

What Are the Fundamental Principles of Data Deduplication?

Data deduplication is a process used to reduce data redundancy by identifying and eliminating duplicate copies of data within a dataset or storage system. It is commonly used in data storage and backup systems to optimize storage capacity and improve data management.

Data deduplication works by identifying and eliminating duplicate data through techniques such as hashing, indexing, and comparison, so that a storage or backup system keeps only one copy of each unique piece of data.

Modern deduplication systems have evolved beyond simple duplicate elimination to incorporate sophisticated algorithms that can handle complex data relationships and variations. Advanced implementations utilize multiple matching techniques simultaneously, including exact matching for strong identifiers like email addresses and customer IDs, alongside fuzzy matching algorithms that can identify variations in names, addresses, and other textual data.
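
To make the combination of exact and fuzzy matching concrete, here is a minimal sketch using only Python's standard library. The record fields (`name`, `email`), the `0.85` threshold, and the helper names are assumptions chosen for illustration, not part of any particular product.

```python
from difflib import SequenceMatcher


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences vanish."""
    return " ".join(text.lower().split())


def is_duplicate(a: dict, b: dict, threshold: float = 0.85) -> bool:
    """Exact match on email (a strong identifier), otherwise fuzzy match on name."""
    email_a, email_b = normalize(a["email"]), normalize(b["email"])
    if email_a and email_a == email_b:
        return True
    similarity = SequenceMatcher(None, normalize(a["name"]), normalize(b["name"])).ratio()
    return similarity >= threshold


# Hypothetical sample records.
records = [
    {"name": "Jonathan Smith", "email": "jon.smith@example.com"},
    {"name": "Jon Smith", "email": "JON.SMITH@example.com "},
    {"name": "Jonathon Smith", "email": ""},
    {"name": "Maria Garcia", "email": "maria@example.com"},
]

unique = []
for record in records:
    # Keep a record only if it matches nothing already retained.
    if not any(is_duplicate(record, kept) for kept in unique):
        unique.append(record)

print([r["name"] for r in unique])
```

Matching on name similarity alone can merge two different people who share a name, so real pipelines usually combine several fields and route borderline scores to manual review.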

How Does the Data Deduplication Process Work?

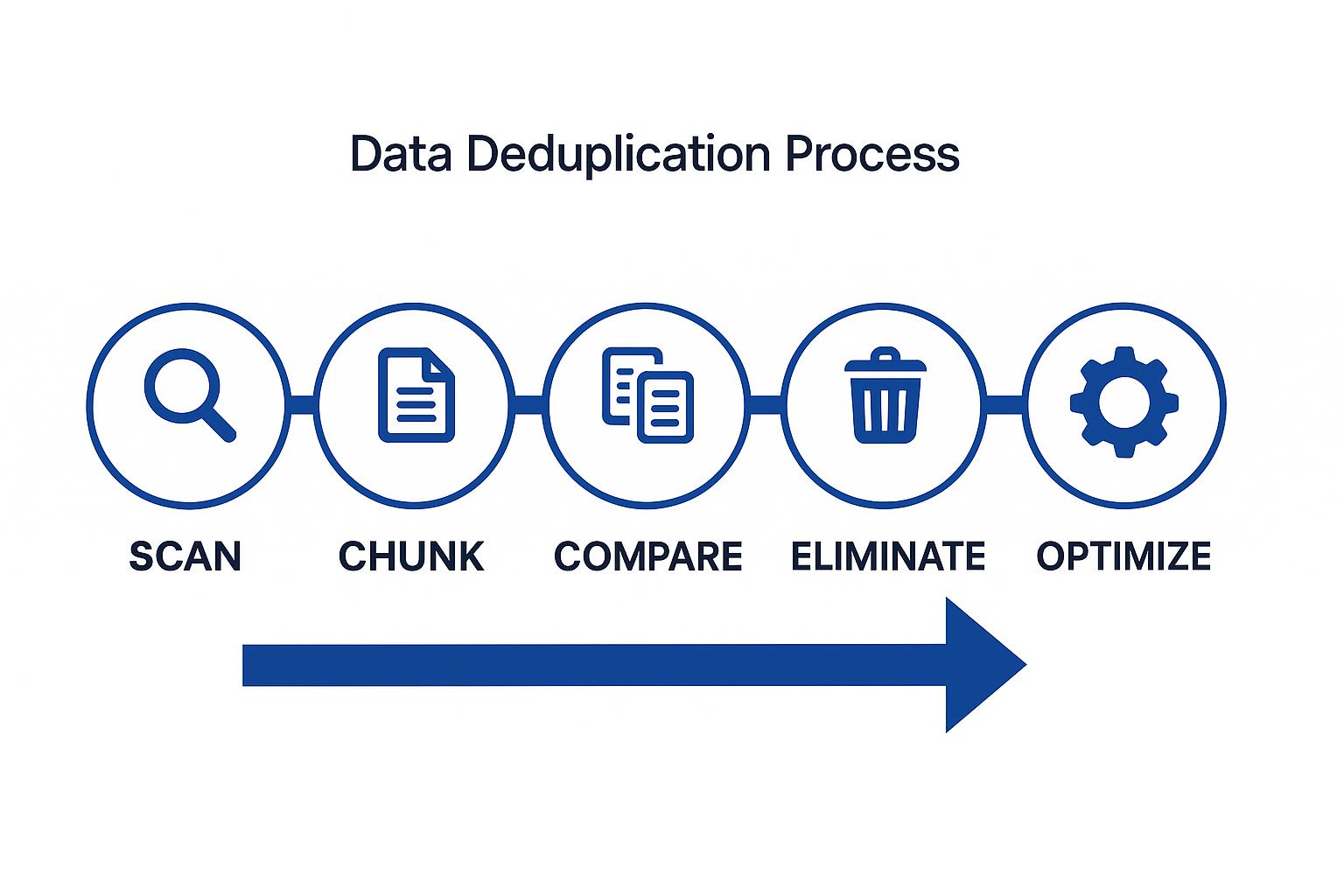

The deduplication process follows a systematic approach that ensures accurate identification and elimination of redundant data:

- Identification: The process begins by scanning the dataset to detect duplicates using sophisticated pattern recognition and algorithmic analysis.

- Data Chunking: The dataset is divided into fixed or variable-sized data chunks. Each chunk is hashed to generate an identifier (often referred to as a hash or fingerprint) that is, for practical purposes, unique to its content.

- Comparison: The system compares these identifiers using advanced algorithms that can detect both exact matches and near-duplicates with high precision. Modern systems employ machine learning models that can recognize complex patterns and variations.

- Elimination: Once duplicated data is identified, copies are eliminated through intelligent consolidation processes. Only one copy of each unique data chunk is retained, and pointers reference the retained copy while preserving data lineage.

- Indexing: An index is maintained to track which data chunks are retained and how they are linked to the original data, ensuring complete traceability and audit capabilities.

- Optimization: Periodically, the deduplication software may optimize storage by re-evaluating the dataset and removing newly identified duplicates, with some systems providing real-time continuous optimization.
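
The chunking, comparison, elimination, and indexing steps above can be sketched in a few lines. This is a toy in-memory model, assuming fixed-size chunks and SHA-256 fingerprints; the `ChunkStore` class and its method names are hypothetical.

```python
import hashlib


class ChunkStore:
    """Toy block-level deduplicating store using fixed-size chunks."""

    def __init__(self, chunk_size: int = 4):
        self.chunk_size = chunk_size
        self.chunks = {}   # fingerprint -> the single retained copy of a chunk
        self.index = {}    # object name -> ordered list of fingerprints (pointers)

    def write(self, name: str, data: bytes) -> None:
        fingerprints = []
        for start in range(0, len(data), self.chunk_size):
            chunk = data[start:start + self.chunk_size]
            fingerprint = hashlib.sha256(chunk).hexdigest()
            # Store the chunk only if this fingerprint has not been seen before.
            self.chunks.setdefault(fingerprint, chunk)
            fingerprints.append(fingerprint)
        self.index[name] = fingerprints

    def read(self, name: str) -> bytes:
        """Rebuild the original data by following the pointers in the index."""
        return b"".join(self.chunks[fp] for fp in self.index[name])


store = ChunkStore(chunk_size=4)
store.write("a.bin", b"AAAABBBBCCCC")
store.write("b.bin", b"AAAABBBBDDDD")

print(len(store.chunks))  # 4 unique chunks retained instead of 6 written
```

Both objects can still be read back in full because the index records which retained chunks make up each one.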

How Does Deduplication Compare to Compression?

While data deduplication and data compression share the goal of reducing storage space requirements, they are distinct processes with different mechanisms and applications.

Deduplication focuses on identifying and eliminating redundant copies of identical data chunks within or across datasets. Compression encodes redundant patterns within individual files to reduce their size.

Combining the two techniques—typically deduplication first, compression second—can yield even greater space savings.
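
A small sketch of that ordering, assuming SHA-256 fingerprints for deduplication and `zlib` for compression. The sample chunks are made up for illustration.

```python
import hashlib
import zlib

chunk_a = b"customer_id,name,email\n" * 100
chunk_b = b"order_id,total,currency\n" * 100
stream = [chunk_a, chunk_b, chunk_a, chunk_a]  # chunk_a appears three times

# Step 1: deduplication keeps one copy of each identical chunk.
unique_chunks = {hashlib.sha256(c).hexdigest(): c for c in stream}

# Step 2: compression shrinks the repeated patterns inside each remaining chunk.
compressed = {fp: zlib.compress(c) for fp, c in unique_chunks.items()}

raw_size = sum(len(c) for c in stream)
dedup_size = sum(len(c) for c in unique_chunks.values())
final_size = sum(len(c) for c in compressed.values())

print(raw_size, dedup_size, final_size)
```

Deduplicating first means the compressor only runs on data that will actually be stored.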

What Are the Different Types of Data Deduplication?

Deduplication can be implemented at different levels, depending on the granularity of the data chunks and the specific requirements of the storage environment.

File-Level Deduplication

File-level deduplication (single-instance storage) removes duplicate files by comparing entire files as single units using cryptographic hashes. It works well for document management, email archiving, and backups where whole files are repeatedly copied.

- Pros: Low overhead, simple to implement.

- Cons: Cannot detect partial duplicates—any single-byte difference means no match.
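
As an illustration, the sketch below groups files by a whole-file SHA-256 digest. The file names are hypothetical, and the third file differs from the others by a single byte to show the limitation noted above.

```python
import hashlib
import tempfile
from pathlib import Path


def file_digest(path: Path) -> str:
    """SHA-256 of the whole file, read in blocks to bound memory use."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for block in iter(lambda: handle.read(65536), b""):
            digest.update(block)
    return digest.hexdigest()


def find_duplicate_files(root: Path) -> dict:
    """Map each digest to the files sharing it, keeping only groups with copies."""
    groups = {}
    for path in sorted(root.rglob("*")):
        if path.is_file():
            groups.setdefault(file_digest(path), []).append(path.name)
    return {d: names for d, names in groups.items() if len(names) > 1}


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "report.txt").write_bytes(b"quarterly numbers")
    (root / "report_copy.txt").write_bytes(b"quarterly numbers")
    (root / "report_v2.txt").write_bytes(b"quarterly numbers!")  # one extra byte
    duplicates = find_duplicate_files(root)

print(duplicates)
```

Only the two identical files are grouped; the near-identical third file is treated as entirely new data.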

Block-Level Deduplication

Block-level deduplication divides data into fixed or variable-sized blocks (chunks) and stores only unique blocks, pointing all duplicates to the retained block.

- Pros: Finds partial duplicates and shared content across files, delivering higher savings.

- Cons: More metadata, higher processing overhead; effectiveness depends on block size and chunking algorithm.
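
The sketch below shows one reason the chunking algorithm matters: prepending a single byte shifts every fixed-size block, while content-defined (variable-sized) chunking realigns after the edit. It is a simplified illustration. A production system would typically use a true rolling hash (for example Rabin fingerprints), whereas this version simply re-hashes a small window with `zlib.crc32`. The window and divisor values are arbitrary assumptions.

```python
import hashlib
import zlib


def fixed_chunks(data: bytes, size: int = 64) -> list:
    return [data[i:i + size] for i in range(0, len(data), size)]


def content_defined_chunks(data: bytes, window: int = 16, divisor: int = 64) -> list:
    """Cut wherever a hash of the trailing `window` bytes hits a target value."""
    chunks, start = [], 0
    for end in range(window, len(data) + 1):
        # The boundary decision depends only on nearby content, not on the offset.
        if zlib.crc32(data[end - window:end]) % divisor == 0:
            chunks.append(data[start:end])
            start = end
    if start < len(data):
        chunks.append(data[start:])
    return chunks


def shared(a: list, b: list) -> int:
    """Number of distinct chunks that appear in both chunk lists."""
    return len(set(a) & set(b))


# Deterministic pseudo-random payload, then the same payload with one byte prepended.
original = b"".join(hashlib.sha256(str(i).encode()).digest() for i in range(64))
edited = b"X" + original

fixed_shared = shared(fixed_chunks(original), fixed_chunks(edited))
cdc_shared = shared(content_defined_chunks(original), content_defined_chunks(edited))

print(fixed_shared, cdc_shared)
```

With fixed-size blocks the edited copy shares essentially nothing with the original, while the content-defined version keeps most of its chunks in common.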

Byte-Level Deduplication

Byte-level deduplication detects duplicate sequences of bytes within files or blocks, offering the greatest granularity and theoretical savings.

- Pros: Maximal space reduction.

- Cons: Extremely compute-intensive and memory-hungry; practical only for specialized workloads.
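
One closely related technique is delta encoding, where new data is stored as copy instructions against a reference plus the literal bytes that differ. The sketch below uses `difflib.SequenceMatcher` from the standard library as a stand-in for a real byte-level matcher. It is meant to show the idea, not the performance characteristics of a production system.

```python
from difflib import SequenceMatcher


def make_delta(reference: bytes, target: bytes) -> list:
    """Describe `target` as copies from `reference` plus literal inserted bytes."""
    ops = []
    matcher = SequenceMatcher(None, reference, target, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            ops.append(("copy", i1, i2))            # reuse bytes already stored
        elif tag in ("replace", "insert"):
            ops.append(("insert", target[j1:j2]))   # only these bytes are new
        # "delete" needs no instruction: those reference bytes are just skipped.
    return ops


def apply_delta(reference: bytes, ops: list) -> bytes:
    out = bytearray()
    for op in ops:
        if op[0] == "copy":
            out += reference[op[1]:op[2]]
        else:
            out += op[1]
    return bytes(out)


reference = b"The quick brown fox jumps over the lazy dog"
target = b"The quick brown cat jumps over the lazy dog"

delta = make_delta(reference, target)
literal_bytes = sum(len(op[1]) for op in delta if op[0] == "insert")

print(literal_bytes, len(target))
```

Finding the matching byte runs is the expensive part, which is consistent with the compute and memory cost noted above.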

What Methods Determine When Deduplication Occurs?

The timing of deduplication operations can significantly impact system performance and storage efficiency, leading to two primary approaches.

Inline Deduplication

Deduplication happens in real time as data is written. Only unique chunks are ever stored, saving space immediately but adding processing overhead to write operations.

The write-path overhead matters most during peak load, when ingest throughput is already under pressure.

Post-Process Deduplication

Data is written in full, then scanned and deduplicated later (often during off-peak hours). Write performance is unaffected, but temporary extra storage is required until the job runs.

Organizations typically choose this method when write performance is critical and additional storage capacity is available for temporary redundancy.
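
The trade-off between the two timings can be sketched with two toy stores. The class names and the `peak_stored` counter are hypothetical, and the "chunks" are tiny byte strings chosen for readability.

```python
import hashlib


def fingerprint(chunk: bytes) -> str:
    return hashlib.sha256(chunk).hexdigest()


class InlineStore:
    """Deduplicates on the write path: duplicates never reach storage."""

    def __init__(self):
        self.chunks = {}
        self.peak_stored = 0

    def write(self, chunk: bytes) -> None:
        self.chunks.setdefault(fingerprint(chunk), chunk)  # hashing cost paid per write
        self.peak_stored = max(self.peak_stored, len(self.chunks))


class PostProcessStore:
    """Appends everything first; a later job removes the duplicates."""

    def __init__(self):
        self.staged = []
        self.chunks = {}
        self.peak_stored = 0

    def write(self, chunk: bytes) -> None:
        self.staged.append(chunk)  # fast path: no hashing during the write
        self.peak_stored = max(self.peak_stored, len(self.staged) + len(self.chunks))

    def run_dedup_job(self) -> None:
        for chunk in self.staged:
            self.chunks.setdefault(fingerprint(chunk), chunk)
        self.staged.clear()


incoming = [b"alpha", b"beta", b"alpha", b"alpha", b"beta"]
inline, post = InlineStore(), PostProcessStore()
for chunk in incoming:
    inline.write(chunk)
    post.write(chunk)
post.run_dedup_job()  # e.g. scheduled for off-peak hours

print(inline.peak_stored, post.peak_stored)
```

Both stores end up holding the same two unique chunks, but the post-process store temporarily held all five.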

What Benefits Does Data Deduplication Provide?

Data deduplication offers numerous advantages that extend beyond simple storage savings to impact overall system performance and business operations.

Cost Savings and Efficient Storage Utilization

High storage reduction ratios are common in backup scenarios, where successive backups contain largely the same data, and they lower hardware, power, and cloud costs. Organizations can achieve significant cost savings by reducing their physical storage requirements and associated infrastructure costs.
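
Storage reduction is usually reported either as a ratio (logical data divided by data actually stored) or as a percentage saved. The helper names and the figures below are hypothetical, chosen only to show how the two numbers relate.

```python
def dedup_ratio(logical_bytes: int, stored_bytes: int) -> float:
    """Logical data protected divided by physical data actually stored."""
    return logical_bytes / stored_bytes


def space_savings(logical_bytes: int, stored_bytes: int) -> float:
    """Fraction of capacity avoided, equal to 1 - 1/ratio."""
    return 1 - stored_bytes / logical_bytes


# Hypothetical figures: ten nightly 1 TB backups that reduce to 2 TB on disk.
logical = 10 * 10**12
stored = 2 * 10**12

ratio = dedup_ratio(logical, stored)
savings = space_savings(logical, stored)

print(f"{ratio:.0f}:1 ratio, {savings:.0%} less capacity")
```

Because savings equal `1 - 1/ratio`, the returns diminish: moving from 5:1 to 10:1 only raises savings from 80% to 90%.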

Faster Backup and Recovery Processes

Less data to move means shorter backup windows, lower bandwidth use, and quicker restores. This improvement directly impacts business continuity and disaster recovery capabilities.

Enhanced Data Integrity and Reduced Redundancy

A single authoritative copy improves governance, data quality, and compliance efforts. Organizations benefit from simplified data management and reduced risk of inconsistencies across multiple data copies.

Optimized Bandwidth Usage

Eliminating duplicates decreases network traffic, benefiting distributed or cloud environments. This optimization is particularly valuable for organizations with multiple locations or hybrid cloud architectures.

Efficient Data Retention and Archiving

Long-term retention becomes affordable; archived data—often highly redundant—shrinks dramatically. Organizations can extend their data retention periods without proportional increases in storage costs.

Support for Scalability and Long-Term Growth

Organizations can scale data volumes without linearly scaling storage budgets. This scalability enables sustainable growth and supports expanding data analytics initiatives.

How Do AI-Driven Systems Enhance Data Deduplication?

Modern deduplication technologies leverage artificial intelligence and machine learning to improve accuracy and efficiency beyond traditional rule-based approaches.

How Do AI-Powered Deduplication Systems Work?

Machine-learning models now automate duplicate detection and continually improve accuracy, spotting complex or fuzzy matches that traditional rule-based systems miss. Solutions like Deduplix, Tilores, and WinPure exemplify this evolution.

These systems can recognize patterns and relationships that would be impossible to detect through manual rules or simple algorithmic approaches.

What Advanced Technologies Enable Modern Deduplication?

Several cutting-edge technologies are transforming how organizations approach data deduplication:

- GPU Acceleration: Massive, parallel duplicate matching capabilities that can process large datasets efficiently.

- Localized Algorithms: Language-aware algorithms designed for global datasets with diverse linguistic patterns and cultural variations.

- Real-Time Processing: In-memory processing capabilities for high-throughput environments that require immediate deduplication.

What Modern Tools and Platforms Are Available?

The deduplication landscape includes several specialized platforms that address different organizational needs:

- Deduplix provides AI-driven, high-speed, in-memory deduplication capabilities for organizations processing large volumes of data.

- Tilores offers an identity-resolution approach that connects and retains rather than deletes, preserving valuable data relationships.

- WinPure delivers visual, rule-based matching accessible to non-technical users who need to implement deduplication without extensive technical expertise.

How Do Modern Deduplication Systems Address Security Challenges?

Traditional encryption clashes with deduplication because identical plaintext encrypted with different keys produces different ciphertext. Modern systems employ advanced encryption techniques to resolve this challenge:

- Convergent Encryption: Key derived from file content so identical files encrypt identically, enabling deduplication while maintaining security.

- Message-Locked Encryption: Generalizes convergent encryption by deriving the key from the message itself; server-aided variants add a key server with rate limiting to resist brute-force guessing of predictable content.
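
The core idea of convergent encryption can be shown with the standard library alone. The cipher below is a deliberately simple toy (SHA-256 used as a keystream generator) so that the example stays self-contained. It is an assumption made for illustration and is NOT secure; a real system would use a vetted cipher such as AES from a maintained cryptography library.

```python
import hashlib


def derive_key(plaintext: bytes) -> bytes:
    """Convergent key: a hash of the content itself."""
    return hashlib.sha256(plaintext).digest()


def keystream_xor(key: bytes, data: bytes) -> bytes:
    """Toy stream cipher for illustration only. Do not use for real data."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        block = hashlib.sha256(key + offset.to_bytes(8, "big")).digest()
        out.extend(b ^ k for b, k in zip(data[offset:offset + 32], block))
    return bytes(out)


def convergent_encrypt(plaintext: bytes) -> tuple:
    key = derive_key(plaintext)
    return key, keystream_xor(key, plaintext)


def convergent_decrypt(key: bytes, ciphertext: bytes) -> bytes:
    return keystream_xor(key, ciphertext)  # XOR with the same keystream reverses it


# Two users independently upload the same file.
key_1, ct_1 = convergent_encrypt(b"shared onboarding handbook v3")
key_2, ct_2 = convergent_encrypt(b"shared onboarding handbook v3")
_, ct_other = convergent_encrypt(b"a different document")

print(ct_1 == ct_2, ct_1 == ct_other)
```

Identical files produce identical ciphertext, so the storage layer can deduplicate without seeing plaintext. The same property lets anyone who can guess a file's content confirm that it is stored, which is the weakness server-aided schemes try to limit.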

What Governance Frameworks Support Deduplication?

Strong data ownership, stewardship roles, retention schedules, access controls, and audit trails are essential to maintain compliance and accountability after duplicates are consolidated.

Organizations must establish clear protocols for data lineage tracking and maintain comprehensive documentation of deduplication decisions and processes.

How Do Compliance Requirements Impact Deduplication?

Regulatory compliance adds layers of complexity to deduplication implementations:

- HIPAA Compliance: Safeguards for protected health information must be maintained throughout the deduplication lifecycle, with particular attention to audit trails and access controls.

- GDPR and CCPA Requirements: Right to erasure, consent, and portability must be preserved even after records are merged, requiring sophisticated tracking and reversal capabilities.
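
One way to keep merged records traceable is to retain the identifiers of every source record behind each consolidated ("golden") record, so an erasure request can be propagated back to the systems of origin. The sketch below assumes email as the match key; the `GoldenRecord` class and the field names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class GoldenRecord:
    email: str
    attributes: dict = field(default_factory=dict)
    sources: dict = field(default_factory=dict)  # source record id -> original payload


def merge(records: list) -> dict:
    """Consolidate records by normalized email while keeping their lineage."""
    golden = {}
    for rec in records:
        key = rec["email"].strip().lower()
        entry = golden.setdefault(key, GoldenRecord(email=key))
        entry.sources[rec["id"]] = rec
        entry.attributes.update(
            {k: v for k, v in rec.items() if k not in ("id", "email") and v}
        )
    return golden


def erase(golden: dict, email: str) -> list:
    """Drop the merged record and return the source ids to delete upstream."""
    removed = golden.pop(email.strip().lower(), None)
    return sorted(removed.sources) if removed else []


records = [
    {"id": "crm-17", "email": "Ana@Example.com", "phone": "555-0100"},
    {"id": "shop-42", "email": "ana@example.com ", "city": "Lisbon"},
    {"id": "crm-18", "email": "bo@example.com", "city": "Oslo"},
]

golden = merge(records)
merged_attributes = dict(golden["ana@example.com"].attributes)
to_delete = erase(golden, "ANA@example.com")

print(merged_attributes, to_delete)
```

Keeping the original payloads also makes it possible to split a record again if a merge turns out to be wrong.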

What Challenges and Limitations Should You Consider?

While data deduplication provides significant benefits, organizations must carefully evaluate potential drawbacks and implementation challenges.

Overhead Costs and Increased Processing Time

Inline processing, AI models, and metadata management can slow ingest operations and fragment data, impacting I/O performance. Organizations must balance deduplication benefits against performance requirements.

Resource-intensive deduplication processes may require additional computing capacity and can create bottlenecks during peak data processing periods.

The Risk of Data Loss

Hash collisions, interrupted processes, or corrupted source blocks can propagate errors throughout the system. Rigorous backup and validation strategies are mandatory to prevent data loss.
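
A common safeguard against hash collisions is to compare the actual bytes whenever a fingerprint matches, before discarding the incoming chunk. The sketch below forces a collision with a deliberately weak fingerprint (the first byte only) to show the check firing. The `VerifiedStore` class is hypothetical.

```python
import hashlib


class VerifiedStore:
    """Chunk store that verifies content before treating a chunk as a duplicate."""

    def __init__(self, hash_fn):
        self.hash_fn = hash_fn
        self.chunks = {}

    def put(self, chunk: bytes) -> str:
        fp = self.hash_fn(chunk)
        existing = self.chunks.get(fp)
        if existing is None:
            self.chunks[fp] = chunk
        elif existing != chunk:
            # Same fingerprint, different bytes: dropping the chunk would lose data.
            raise ValueError(f"fingerprint collision on {fp}")
        return fp


# A strong hash behaves as expected for identical content.
strong = VerifiedStore(lambda c: hashlib.sha256(c).hexdigest())
strong.put(b"apple")
strong.put(b"apple")

# A deliberately weak fingerprint demonstrates the safeguard.
weak = VerifiedStore(lambda c: c[:1].hex())
weak.put(b"apple")
try:
    weak.put(b"avocado")
    collision_detected = False
except ValueError:
    collision_detected = True

print(collision_detected)
```

The byte comparison costs an extra read on every match, which is part of the processing overhead discussed earlier.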

Organizations must implement comprehensive testing and recovery procedures to ensure data integrity throughout the deduplication process.

Choosing the Right Deduplication Strategy

Selecting file-, block-, or byte-level approaches—and deciding between inline vs. post-process—depends on workload, performance, and compliance needs.

The optimal strategy varies based on data characteristics, system architecture, and business requirements. Organizations benefit from thorough analysis before implementation.

How Does Airbyte Support Data Deduplication?

Airbyte provides comprehensive deduplication capabilities integrated into its data integration platform, supporting organizations with over 600 connectors and enterprise-grade features.

Advanced Data Transformation and Processing

Built-in transformations (ELT or ETL) remove duplicates during extraction or loading, with support for custom matching logic and ML models. These capabilities enable organizations to implement deduplication as part of their standard data pipeline processes.

Comprehensive Pipeline Management

Configurable pipelines and incremental extraction reduce duplicate introduction and ensure consistent deduplication across sources and destinations. This approach prevents duplicates at the source rather than requiring cleanup after integration.
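
A generic way to deduplicate incrementally synced data is to keep, for each primary key, only the record with the latest cursor value. The sketch below illustrates that pattern in plain Python. It is not Airbyte's implementation, and the `id` and `updated_at` field names are assumptions.

```python
def dedupe_latest(records: list, primary_key: str = "id", cursor: str = "updated_at") -> list:
    """Keep one record per primary key, preferring the highest cursor value."""
    latest = {}
    for record in records:
        key = record[primary_key]
        if key not in latest or record[cursor] >= latest[key][cursor]:
            latest[key] = record
    return list(latest.values())


# Two sync batches; ISO 8601 timestamps in the same zone compare correctly as strings.
batch_1 = [
    {"id": 1, "status": "trial", "updated_at": "2024-01-01T09:00:00Z"},
    {"id": 2, "status": "active", "updated_at": "2024-01-01T09:05:00Z"},
]
batch_2 = [
    {"id": 1, "status": "active", "updated_at": "2024-01-02T10:00:00Z"},
    {"id": 1, "status": "active", "updated_at": "2024-01-02T10:00:00Z"},  # re-delivered
]

final = dedupe_latest(batch_1 + batch_2)

print(final)
```

Because re-delivered rows collapse onto the same key, replaying a batch after a failed sync does not create new duplicates.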

Enterprise-Grade Monitoring and Observability

Metrics and logging integrations track deduplication performance and data quality in real time. Organizations can monitor the effectiveness of their deduplication strategies and make adjustments based on performance data.

Scalable Integration Architecture

Cloud-native scaling and an extensive connector library enable high-volume, deduplicated data movement to warehouses and lakes. This architecture supports growing data volumes while maintaining deduplication efficiency.

Conclusion

Data deduplication is foundational to modern data management, enabling organizations to reduce costs, improve performance, and enhance data quality. By understanding its principles, selecting the right techniques, and integrating AI-driven, secure, and well-governed solutions, organizations can achieve optimized storage and reliable data integrity. These capabilities ultimately enable faster, more reliable insights that drive better business decisions.

FAQ

What is the difference between data deduplication and data compression?

Data deduplication removes duplicate data chunks across datasets, while data compression reduces file size by encoding redundant patterns within files. Deduplication targets redundancy across a dataset; compression targets redundancy inside a single file. Using both together maximizes storage efficiency.

When should I choose inline versus post-process deduplication?

Use inline deduplication for immediate storage savings, accepting some write-speed impact. Choose post-process deduplication when write performance is critical, using extra temporary storage and deduplicating later. Inline saves instantly; post-process preserves ingestion speed but delays space efficiency.

How does AI improve data deduplication accuracy?

AI improves deduplication by detecting exact and near-duplicates using machine learning and NLP. It recognizes variations in names, addresses, and text, learns from patterns over time, and handles complex relationships, achieving higher accuracy than rule-based systems.

What are the main security considerations for data deduplication?

Key security considerations include using convergent encryption so identical data can be deduplicated securely, maintaining strict access controls, preserving audit trails, and tracking data lineage to ensure compliance and prevent unauthorized access during the deduplication process.

How can I measure the success of my data deduplication implementation?

Measure deduplication success using storage reduction ratios, faster backups, lower bandwidth usage, and improved data quality. Monitor processing time, system performance, compliance, and data integrity to ensure deduplication delivers efficiency without compromising governance or security.
