Businesses across industries are grappling with the challenges of managing, processing, and extracting value from ever-growing volumes of data. ETL tools play a critical role in this data journey, enabling companies to collect data from diverse sources, transform it into a standardized format, and load it into target systems for analysis and decision-making.

Amazon Web Services (AWS) has developed a comprehensive suite of ETL tools designed to meet the diverse needs of businesses operating in the cloud.

In this article, we will explore the landscape of AWS ETL tools in 2026, examining both native AWS services and third-party solutions that seamlessly integrate with the AWS ecosystem.

What is an AWS ETL Tool?

AWS provides a suite of ETL tools that help businesses efficiently process and move data between various data sources, both on-premises and in the cloud. These tools include AWS Glue, AWS Data Pipeline, and Amazon Kinesis. Each service has its own strengths and use cases, but they all aim to simplify and streamline the process of extracting data from sources, transforming it into a usable format, and loading it into target storage systems or analytics tools.


AWS ETL tools that seamlessly integrate with the AWS ecosystem

1. AWS Glue

AWS Glue is a serverless, fully managed ETL platform that simplifies preparing your data for analytics and transferring it between data repositories. You can create and run an ETL job with a few clicks in the AWS Management Console.

AWS Glue comprises an AWS Glue Data Catalog containing central metadata, an ETL engine that automatically generates Python or Scala code, and a flexible scheduler that handles job monitoring, dependency resolution, and retries.

After you configure AWS Glue to point to your data stored in AWS, it automatically discovers your data, infers schemas, and stores the related metadata in the AWS Glue Data Catalog. Once this is done, your data is available for ETL and can also be searched and queried immediately.
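To make schema inference concrete, here is a minimal sketch in plain Python. It only illustrates the idea of scanning sample data and picking a type per column; it is not how AWS Glue is implemented, and the function names and type labels (`bigint`, `double`, `string`) are assumptions chosen for the example.

```python
import csv
import io

def infer_type(values):
    """Return the narrowest type name that fits every non-empty value."""
    def fits(cast):
        for v in values:
            if v == "":
                continue  # treat empty cells as missing, not as a type conflict
            try:
                cast(v)
            except ValueError:
                return False
        return True

    if fits(int):
        return "bigint"
    if fits(float):
        return "double"
    return "string"

def infer_schema(csv_text):
    """Infer a column -> type mapping from CSV text with a header row."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    columns = rows[0].keys() if rows else []
    return {col: infer_type([row[col] for row in rows]) for col in columns}

# Hypothetical sample data with one missing amount.
sample = "order_id,amount,country\n1,19.99,DE\n2,5,US\n3,,FR\n"
schema = infer_schema(sample)
print(schema)
```

A real catalog would also record partitions, formats, and locations; the sketch covers only column types.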

Suitable For: AWS Glue is a suitable choice if your organization already uses the AWS ecosystem and you’re looking for a managed ETL service that simplifies the data preparation and loading process.

Key Features of AWS Glue

- GUI Support: AWS Glue has a GUI that allows you to build no-code data transformation pipelines. This lets those without a working knowledge of Python or PySpark design a basic Glue job from scratch. As you design your job in the GUI, Glue automatically generates PySpark code in the background.

- Integration with other AWS Services: As an AWS-native service, AWS Glue offers better integration with other services within the ecosystem, such as Amazon Redshift, S3, RDS, etc.

- Scalability: With petabyte-scaling capabilities, AWS Glue automatically scales the resources based on the workload. This allows it to manage varying data loads efficiently.

- Glue Data Catalog: This is a centralized metadata repository that stores metadata about your data sources, transformations, and targets, making it easy to find and access data.

- Automated Schema Discovery: Glue automatically discovers and catalogs metadata of your data sources. It creates ETL jobs to transform, normalize, and categorize data.

- AWS Glue Crawlers: These help establish a connection to your source or target data store. AWS Glue crawlers navigate a predefined set of classifiers to identify the data schema and generate metadata in your AWS Glue Data Catalog.
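If you prefer code to the console, a crawler can also be registered through the AWS SDK for Python (boto3). The sketch below builds the request for the Glue `create_crawler` call and keeps the network call in a separate function that is not run here. The crawler name, IAM role ARN, database name, and S3 path are placeholders, and the helper function names are assumptions for illustration.

```python
def crawler_request(name, role_arn, database, s3_path):
    """Build keyword arguments for the Glue CreateCrawler API call."""
    return {
        "Name": name,
        "Role": role_arn,
        "DatabaseName": database,
        "Targets": {"S3Targets": [{"Path": s3_path}]},
    }

def create_and_start_crawler(request):
    """Register the crawler and start one run (requires AWS credentials)."""
    import boto3  # imported lazily so the request builder works without it

    glue = boto3.client("glue")
    glue.create_crawler(**request)
    glue.start_crawler(Name=request["Name"])

# All values below are placeholders, not real resources.
request = crawler_request(
    name="orders-crawler",
    role_arn="arn:aws:iam::123456789012:role/ExampleGlueRole",
    database="analytics_db",
    s3_path="s3://example-bucket/orders/",
)
print(request)
```

Calling `create_and_start_crawler(request)` in an account with suitable permissions would create the crawler and trigger a run that populates the Data Catalog.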

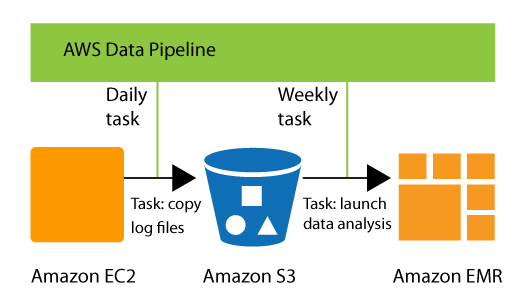

2. AWS Data Pipeline

AWS Data Pipeline is a web service that helps you move data between different AWS storage and compute services and on-premises data sources at specified intervals. It automates the ETL process: you define your ETL jobs, schedule them to run at a specified time and date, and let the service manage their execution across AWS services. Note that AWS has since placed the service in maintenance mode and closed it to new customers, so it is mainly relevant to teams that already run workloads on it.

A simple UI lets you drag and drop source and target nodes onto a canvas and define their connection attributes to build ETL data pipelines. With AWS Data Pipeline, you can regularly access your data where it’s stored, transform and process it as needed, and then efficiently transfer the results to AWS services such as Amazon RDS, Amazon S3, Amazon DynamoDB, and Amazon EMR.

You can use preconditions and activities that AWS provides or write custom ones. This allows you to execute SQL queries directly against databases, run Amazon EMR jobs, or execute custom applications running on Amazon EC2 or in your own data center.

Suitable For: If your business requires regular data movement and processing across various AWS services, AWS Data Pipeline is a suitable choice.

Key Features of AWS Data Pipeline

- Flexible Scheduling: You can schedule your data processing jobs to run at specific intervals. This helps handle dependencies in the data and ensure that tasks are executed in the right order.

- Integration with AWS Services: Seamless integration with other AWS services allows smooth data transfer and processing across different AWS resources.

- Improved Reliability: The distributed, highly-available infrastructure of AWS Data Pipeline is designed for fault-tolerant execution of your activities. If a task fails, it can automatically retry the task. This helps ensure the integrity of your data processing workflows.

- Monitoring and Alerts: If a failure in your activity logic or data sources persists despite retries, AWS Data Pipeline sends you failure notifications via Amazon Simple Notification Service (Amazon SNS). You can configure notifications for successful runs, failures, or delays in planned activities.

- Resource Management: AWS Data Pipeline eliminates the need to manually ensure resource availability. It can automatically manage the underlying resources required for data processing tasks.

- Prebuilt Templates: AWS Data Pipeline provides a library of pipeline templates, making it simpler to create pipelines for more complex use cases. Examples of such use cases include regularly processing your log files, archiving data to Amazon S3, or running periodic SQL queries.
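The scheduling, dependency, and retry behavior described in this list can be sketched in a few lines of plain Python. This is a conceptual illustration under simple assumptions (tasks are zero-argument functions, retries happen immediately), not AWS Data Pipeline's actual engine; the task names and `run_pipeline` helper are hypothetical.

```python
from graphlib import TopologicalSorter

def run_pipeline(tasks, dependencies, max_attempts=3):
    """Run tasks in dependency order, retrying each failed task.

    tasks: name -> zero-argument callable
    dependencies: name -> set of task names that must finish first
    Returns a log of (task, attempt, status) tuples.
    """
    log = []
    for name in TopologicalSorter(dependencies).static_order():
        for attempt in range(1, max_attempts + 1):
            try:
                tasks[name]()
                log.append((name, attempt, "ok"))
                break
            except Exception:
                log.append((name, attempt, "failed"))
        else:
            # Every attempt failed: stop here, which is the point where a
            # managed service would send a failure notification.
            raise RuntimeError(f"{name} failed after {max_attempts} attempts")
    return log

calls = {"transform": 0}

def flaky_transform():
    """Fail on the first call only, to exercise the retry path."""
    calls["transform"] += 1
    if calls["transform"] < 2:
        raise IOError("temporary failure")

tasks = {"extract": lambda: None, "transform": flaky_transform, "load": lambda: None}
dependencies = {"extract": set(), "transform": {"extract"}, "load": {"transform"}}
log = run_pipeline(tasks, dependencies)
print(log)
```

The log shows `transform` failing once and succeeding on its second attempt before `load` is allowed to run.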

3. AWS Kinesis

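AWS Kinesis (officially Amazon Kinesis) is a fully managed AWS service family for real-time processing of large-scale streaming data. It has comprised four services: Kinesis Data Streams, Kinesis Data Firehose (since renamed Amazon Data Firehose), Kinesis Data Analytics (since renamed Amazon Managed Service for Apache Flink), and Kinesis Video Streams.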

Kinesis Data Streams can collect and process huge streams of data records in real-time. You can process and analyze the data as soon as it is available and immediately respond to these events.

AWS Kinesis is widely used for real-time analytics, IoT data processing, and creating real-time applications for app monitoring, fraud detection, and live leaderboards.

Suitable For: AWS Kinesis is a good choice if your business needs to process large streams of real-time data with minimal latency. It’s particularly beneficial for a wide range of real-time data processing applications.

Key Features of AWS Kinesis

- Kinesis Data Firehose: This is an ETL service that reliably captures, transforms, and delivers streaming data to data lakes, data stores, and analytics services. By loading streaming data into Amazon S3 or Amazon Redshift, you can achieve near real-time analytics.

- Kinesis Data Streams: This is a serverless streaming data service that makes it easier to capture, process, and store data streams at any scale. Data Streams synchronously replicates your streaming data across three Availability Zones in the AWS Region. It stores the data for up to 365 days, providing multiple layers of data loss protection.

- Kinesis Data Analytics: This lets you process and analyze streaming data, originally with standard SQL and now primarily with Apache Flink applications, making it easier to feed real-time dashboards and create real-time metrics.

- Scalability: AWS Kinesis is a highly scalable service. It can handle high volumes of streaming data without requiring any infrastructure management.

- Integration with Other AWS Services: Kinesis can seamlessly work with other AWS services like Amazon Redshift, Amazon S3, and Amazon DynamoDB.
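One detail worth understanding when designing for scale is how Kinesis Data Streams distributes records: it takes the MD5 hash of each record's partition key as a 128-bit integer and routes the record to the shard whose hash key range contains that value. The sketch below reproduces that mapping locally. The even split of the hash space across shards and the helper names are assumptions for illustration; a real stream's ranges depend on how its shards were created, split, or merged.

```python
import hashlib

HASH_SPACE = 2 ** 128  # MD5 digests are 128-bit integers

def shard_ranges(shard_count):
    """Split the hash key space into contiguous, evenly sized ranges."""
    size = HASH_SPACE // shard_count
    ranges = []
    for i in range(shard_count):
        start = i * size
        # The last range absorbs any remainder so the space is fully covered.
        end = HASH_SPACE - 1 if i == shard_count - 1 else start + size - 1
        ranges.append((start, end))
    return ranges

def shard_for(partition_key, ranges):
    """Return the index of the shard whose range contains the key's hash."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    hash_key = int.from_bytes(digest, "big")
    for index, (start, end) in enumerate(ranges):
        if start <= hash_key <= end:
            return index
    raise ValueError("hash key outside all shard ranges")

ranges = shard_ranges(4)
keys = ["device-1", "device-2", "device-3"]  # hypothetical partition keys
assignments = {key: shard_for(key, ranges) for key in keys}
print(assignments)
```

Because the mapping is deterministic, all records with the same partition key land on the same shard, which preserves per-key ordering but can create hot shards if a few keys dominate the traffic.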

4. Airbyte

Airbyte is a popular data integration platform and a suitable alternative to the AWS ETL tools. While the AWS ETL tools are highly optimized for moving and transforming data primarily within the AWS ecosystem, Airbyte can help you synchronize data from various sources to data warehouses, lakes, and databases.

You can use the expansive catalog of connectors Airbyte offers to load data to and from AWS effortlessly. There are Airbyte connectors for Amazon Redshift, Amazon S3, Amazon DynamoDB, AWS Data Lake, Kinesis, and S3 Glue, among others. Apart from these, you can also set up ETL pipelines to seamlessly integrate data to or from non-AWS sources, catering to diverse data integration needs.

You can deploy Airbyte in the cloud (on platforms like AWS) or on-premises. This offers your organization flexibility based on your infrastructure preferences.

Suitable For: Airbyte is particularly beneficial if you want a customizable, scalable, and easy-to-navigate data integration solution.

Key Features of Airbyte

- Readily-available Connectors: Airbyte offers more than 600 connectors for different databases, SaaS applications, data warehouses, and other data sources. Among these are several AWS service-based connectors that allow seamless integration of data.

- Custom Connector Development: If the connector you require isn’t available, you can use Airbyte’s no-code Connector Builder to build your own connector in as little as ten minutes. The AI Assistant speeds this up by automatically pre-filling fields based on the API documentation you provide, and it offers suggestions to help you fine-tune your connector’s configuration.

- GenAI Workflows: Airbyte streamlines the process of preparing unstructured data for use with large language models (LLMs) by automatically chunking the data and loading it into vector databases, such as Pinecone or Weaviate. This makes it easier to leverage your data for generative AI use cases.

- Scalability: Airbyte is designed to be highly scalable and can handle large volumes of data. It offers horizontal scalability through its microservices-based architecture, enabling seamless scaling by adding or removing Docker containers or Kubernetes pods.

- Automatic Schema Change Detection: You can configure Airbyte to detect the schema changes occurring at the source and automatically propagate the changes to the destination. This feature helps maintain data consistency and integrity across systems, with checks scheduled every 15 minutes for Cloud users and every 24 hours for Self-Managed users. You can also manually refresh the schema at any time.

- Incremental and Full-Refresh Sync: Airbyte supports both incremental and full-refresh synchronization. This provides flexibility in how to update and maintain data in target systems.

- PyAirbyte: PyAirbyte is an open-source library that enables you to use Airbyte connectors in Python. Using PyAirbyte, you can extract data from various sources and load it into caches like DuckDB, Snowflake, Postgres, and BigQuery. This cached data is compatible with Python libraries, such as Pandas, and robust AI frameworks like LangChain and LlamaIndex, facilitating the development of LLM-powered applications.

- Monitoring and Logging: You can use Airbyte’s detailed monitoring and logging capabilities to track your data integration process. This allows you to identify and troubleshoot issues and ensure data integrity.

- Multitenancy & Role-Based Access: With the Self-Managed Enterprise edition, you can manage multiple teams and projects within a single Airbyte deployment. Furthermore, you can utilize the role-based access control mechanism to ensure data security during this process.
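The difference between the incremental and full-refresh sync modes mentioned in this list is easy to see in code. The following is a conceptual sketch in plain Python, not Airbyte's implementation: a full refresh copies everything, while an incremental sync reads only rows whose cursor value is newer than the one saved from the previous run. The `updated_at` cursor field and the function names are assumptions for the example.

```python
def full_refresh(source_rows):
    """Replace the destination with a complete copy of the source."""
    return list(source_rows)

def incremental_sync(source_rows, destination, cursor, cursor_field="updated_at"):
    """Append only rows whose cursor value is newer than the saved cursor.

    Returns the new cursor to persist for the next run.
    """
    new_rows = [r for r in source_rows if cursor is None or r[cursor_field] > cursor]
    destination.extend(new_rows)
    if new_rows:
        cursor = max(r[cursor_field] for r in new_rows)
    return cursor

# Hypothetical source table; ISO dates compare correctly as strings.
source = [
    {"id": 1, "updated_at": "2024-01-01"},
    {"id": 2, "updated_at": "2024-01-02"},
]
destination = []
cursor = incremental_sync(source, destination, cursor=None)

# A new row arrives; the second run reads only that row.
source.append({"id": 3, "updated_at": "2024-01-03"})
before = len(destination)
cursor = incremental_sync(source, destination, cursor)
print(len(destination) - before, cursor)
```

Incremental syncs move less data per run, but they depend on a reliable cursor column; full refresh is simpler and also picks up deleted rows.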

5. Talend

Talend is an alternative to AWS-native ETL tools like AWS Glue. It makes transferring data between repositories or across cloud environments relatively simple, and it suits organizations that need a robust ETL service compatible with both AWS and other platforms, with extensive connectivity and customization options.

Key Features of Talend

- Metadata Management: Talend provides an enterprise-class metadata repository that stores information about your data sources, transformations, and targets.

- Data Quality Checks: Talend has integrated data quality features that help you identify and fix quality problems in your data, which supports accurate and reliable analytics.

6. Apache NiFi

Apache NiFi is a reliable, open-source tool for data ingestion and processing that enables the transfer of data between multiple systems. Originally built for managing data transfers between software applications, NiFi has an easy-to-use interface for building, viewing, and managing data pipelines, and its drag-and-drop editor lets you create complex processes and tasks.

Key Features of Apache NiFi

- Integrations: NiFi integrates with many databases, cloud services, and IoT devices. Its extensive set of processors and connectors helps keep data flowing smoothly among different systems.

- Flow-Based Programming: NiFi uses a flow-based programming model that enables users to define data flows visually. This technique simplifies the development of complex data pipelines and improves the manageability of data integration tasks.

- Data Provenance: NiFi provides detailed data provenance capabilities, letting you trace where data originated and how it has moved through the data flow. This feature is key to data governance and auditing.
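To illustrate what data provenance means in practice, here is a small plain-Python sketch in which every record carries a list of the steps it has passed through. It is only a conceptual model, not NiFi's provenance repository or API; the `Record` class, step names, and sensor example are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Record:
    payload: dict
    provenance: list = field(default_factory=list)

def apply_step(record, step_name, fn):
    """Apply one processing step and append an event describing it."""
    record.payload = fn(record.payload)
    record.provenance.append(step_name)
    return record

# A reading arrives, is converted, and is routed onward.
record = Record(payload={"temp_f": 212})
record.provenance.append("received:sensor-gateway")
apply_step(record, "convert:f_to_c",
           lambda p: {"temp_c": (p["temp_f"] - 32) * 5 / 9})
apply_step(record, "route:warehouse", lambda p: p)
print(record.payload, record.provenance)
```

Reading the provenance list back answers the auditing questions of where a value came from and which transformations touched it.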

7. Informatica PowerCenter

Informatica PowerCenter is a complete ETL solution that is highly flexible and can easily be scaled up to meet the needs of large organizations. PowerCenter is capable of moving data between different repository platforms and services in AWS, thus making data management and analysis efficient.

Key Features of Informatica PowerCenter

- Data Quality and Governance: PowerCenter has built-in tools for data quality and governance that help ensure data is accurate and meets regulatory standards.

- Real-Time Data Processing: PowerCenter supports real-time data integration so businesses can process and analyze data as it is generated. This feature is very important in cases where applications require the latest information.

- High Availability and Recovery: PowerCenter provides high availability and disaster-recovery options to ensure the continuity of data integration processes. This provides strong fault tolerance and near-zero downtime.

8. Matillion

Matillion is a flexible cloud-based ETL tool that helps organize data before analysis. It is well suited to transferring data between data stores and is highly compatible with AWS and other cloud services, making it a viable alternative to native AWS ETL tools like AWS Glue. Its graphical interface makes creating and running ETL jobs straightforward.

Key Features of Matillion

- Cloud Integration: Matillion is optimized for cloud data warehouses like Amazon Redshift, Google BigQuery, and Snowflake. Its deep integration with these platforms ensures seamless data movement and transformation within the cloud environment.

- Automation and Scheduling: Matillion includes strong automation and scheduling features that let you orchestrate and automate ETL jobs, smoothing out data workflows by reducing manual intervention.

- Data Transformation: Matillion supports a wide array of data transformation tasks, from cleaning and normalization to enrichment. These capabilities help deliver quality data for analytics and reporting.

9. Stitch

Stitch is a cloud-based, fully managed ETL solution for preparing data for analysis. It lets you configure and run ETL operations with ease through a graphical user interface.

Key Features of Stitch

- Integrations: With over 100 different sources supported—databases, SaaS applications, and cloud services—Stitch provides broad connectivity for the smooth integration of data between disparate environments.

- Automated Data Replication: Stitch replicates data from many sources into one central location in a data warehouse. This lets you be sure that your data is current and ready for analysis.

- Data transformations: Stitch also lets you define data transformations using straightforward SQL queries, so you can clean, normalize, and enrich data before loading it to the destination.
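As a rough picture of what a SQL-based cleaning and normalization step looks like, the sketch below runs one against an in-memory SQLite database from Python. SQLite stands in for a real warehouse here, and the table and column names are made up for the example; this is not Stitch's interface.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_customers (email TEXT, country TEXT)")
conn.executemany(
    "INSERT INTO raw_customers VALUES (?, ?)",
    [("  Ana@Example.com ", "de"), ("bob@example.com", "US"), (None, "fr")],
)

# Clean and normalize in SQL: trim and lowercase emails, uppercase country
# codes, and drop rows without an email.
conn.execute(
    """
    CREATE TABLE customers AS
    SELECT LOWER(TRIM(email)) AS email, UPPER(country) AS country
    FROM raw_customers
    WHERE email IS NOT NULL
    """
)
rows = conn.execute("SELECT email, country FROM customers ORDER BY email").fetchall()
print(rows)
```

The same pattern, a `SELECT` that reshapes raw rows into a clean table, carries over to most warehouse SQL dialects with minor syntax changes.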

10. Fivetran

Fivetran is useful for companies that want to transfer data between different platforms like AWS and need a managed ETL tool that automates the process of preparing and loading data.

Key Features of Fivetran

- Pre-built Connectors: Fivetran offers a large library of pre-built connectors to widely used cloud applications, databases, and SaaS tools. This means you don’t have to build and maintain custom code for each of your disparate data sources.

- Schema Management: Fivetran automatically detects schema changes at the source and adjusts the destination accordingly, so the pipeline keeps working efficiently and correctly.

- Secure by default: Fivetran puts data security at the top of its list by implementing strong encryption methodologies and access controls to protect your data throughout the integration procedure.
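The schema management feature above rests on detecting how a source schema has drifted between two syncs. A minimal sketch of that comparison in plain Python follows; it is a conceptual illustration rather than Fivetran's mechanism, and the `diff_schema` helper and column names are hypothetical.

```python
def diff_schema(old, new):
    """Compare two column -> type mappings and report what changed."""
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "retyped": sorted(c for c in set(old) & set(new) if old[c] != new[c]),
    }

# Schemas captured on two consecutive syncs (made-up example).
previous = {"id": "bigint", "email": "string", "age": "bigint"}
current = {"id": "bigint", "email": "string", "age": "string", "plan": "string"}
changes = diff_schema(previous, current)
print(changes)
```

A managed tool would then act on the result, for example by adding the new column to the destination table or widening the type of a changed one.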

11. Pentaho Data Integration

Pentaho Data Integration (PDI) is an open-source ETL tool that provides an efficient way of transferring and transforming data from one data repository to another. Through PDI’s graphical user interface, users can design and execute ETL jobs with little effort.

Key Features of Pentaho Data Integration

- Open-Source and Customizable: Because PDI is open source, developers can customize data transformations to suit specific needs.

- Visual Designer: PDI comes with a drag-and-drop visual designer that enables the construction of data pipelines without requiring advanced technical knowledge.

- Extensive Library of Transformation Steps: PDI provides large numbers of transformation steps for data manipulation, cleaning, and normalization.

- Community-Driven Support: Its open-source nature brings an active community along with extensive documentation, tutorials, and forum support.

- Hybrid and On-Premise Deployments: While not cloud-native, PDI does offer flexibility in on-premise or hybrid environments to customers with special data security or regulatory requirements.

Limitations of Native AWS ETL tools

Limited source connectors: The native AWS ETL tools come with a limited set of pre-built source connectors. This restricts their ability to integrate data from varied sources, so adding more connectors requires extra development.

Customization: Customization options can be limited. This can be a significant drawback for businesses with special data processing needs, as the tools may not support complex transformation logic or specific data requirements.

Cost: The pricing model can become complex, and costs can exceed expectations, especially for large-scale data processing. Costs tend to rise with the volume of data moved and with usage.

Vendor lock-in: Relying on AWS ETL tools ties your pipelines to AWS. Migrating to other platforms or integrating with outside systems can be difficult and costly, which constrains flexibility and future scalability.

Learning Curve and Complexity: AWS Glue, although effective, can be complex. Users need a good knowledge of the AWS ecosystem and ETL processes, and potentially of scripting in Python or Scala.

Factors to Consider for Choosing an AWS ETL Tool

Now that you’ve seen the best AWS ETL tools, here’s a list of factors to consider when choosing the most suitable one for your organizational needs:

- Data Sources and Destinations: Ensure the tool supports the required data sources (databases, file formats, APIs, etc.) and destinations (data warehouses, data lakes, etc.) for seamless integrations.

- Real-Time Processing vs. Batch Processing: Determine whether you need batch data processing or real-time processing (streaming ETL). AWS Kinesis is designed for real-time data streaming, while others may be suitable for batch processing.

- Scalability: The size and scale of your data also affect the choice of tool. Assess how well the tool handles increasing data volumes and complexity, and check its scalability limits and pricing so that it can grow with your data needs without overcharging you for smaller workloads.

- Cost: Evaluate the pricing models of the different tools. While some have fixed pricing models, others may offer pay-as-you-go pricing. Based on your specific data volumes and processing requirements, it’s crucial to understand the cost implications.

- Security and Compliance: If your organization handles sensitive data, ensure the tool meets your security and compliance requirements. Features such as encryption, access control, and audit trails are essential.

- Support: Look into the level of support the provider offers, including comprehensive documentation, customer service, and community forums. This can be valuable for troubleshooting and optimizing your ETL processes.

Conclusion

The range of ETL tools available for AWS caters to a wide variety of data integration and transformation needs. From Airbyte’s extensive connector catalog and AWS Glue’s serverless architecture to AWS Data Pipeline’s flexible scheduling and AWS Kinesis’ real-time data streaming, each of these tools has its own benefits.

When selecting the right tool for your organization, you must consider factors such as supported data sources and destinations, processing needs, scalability, cost, security, and support offered. The choice of an ETL tool—a crucial decision—can significantly impact the effectiveness of your data management and analytics strategies.

Common FAQs about AWS ETL Tools

What are ETL tools, and why are they crucial for data management?

ETL tools are essential for Extracting, Transforming, and Loading data from various sources into a usable format for analysis and decision-making. They ensure data accuracy, consistency, and accessibility, driving effective data management strategies.

What advantages does AWS offer for ETL tasks?

AWS provides scalable, flexible, and reliable cloud-based infrastructure for ETL tasks. Its suite of ETL services, including AWS Glue, AWS Data Pipeline, and Amazon Kinesis, streamlines the data processing workflow. AWS’s pay-as-you-go pricing model keeps costs tied to actual usage, making it an attractive option for organizations of all sizes.

Does AWS have an ETL tool?

Yes, AWS offers several services that facilitate ETL (Extract, Transform, Load) processes, including AWS Glue, AWS Data Pipeline, and others. These services enable users to extract data from various sources, transform it as needed, and load it into AWS data storage or analytics services.

Is Amazon Redshift an ETL tool?

Amazon Redshift is primarily a data warehousing service, though it does offer some ETL capabilities. It lets you transform data as you load it, but it is not considered a standalone ETL tool.

Is S3 an ETL tool?

Amazon S3 (Simple Storage Service) is an object storage service and is not an ETL tool itself. However, S3 is commonly used as a storage destination in ETL pipelines for storing raw or processed data. It provides scalable and durable storage for data used in ETL processes.
