Is your trusty Skyvia data integration solution starting to feel cramped? Whether you’re facing limitations in functionality and scalability or simply seeking a fresh perspective, venturing beyond Skyvia can unlock new possibilities for your data movement processes. In this dynamic data terrain of 2024, many powerful alternatives await, each promising a smooth and effective journey.

But wait, before you jump ship! Choosing the right substitute requires navigating a sea of options, each with strengths and quirks. This guide dives headfirst into top tools, empowering you to identify the perfect match for your unique data integration needs. Let’s explore the top 6 Skyvia alternative options you can use in 2024.

Skyvia Overview

Skyvia, powered by Devart, is a cloud-based data integration platform that provides a range of services to help you manage your data efficiently. You can perform various integration processes such as ETL, ELT, and Reverse ETL to extract your data from diverse sources and load it to a centralized repository. Beyond integration capabilities, this universal SaaS (Software as a Service) data platform also offers data backup, synchronization, and management solutions.

Skyvia offers a variety of features:

- Catalog of Connectors: It has more than 160 pre-built connectors that allow you to integrate data from multiple platforms seamlessly. However, if you can’t find a connector of your choice, you can always create a new connector with its Connectors SDK.

- Data Replication: Skyvia's data replication capabilities let you identify changes in the source and replicate them to the target system.

- Schedule Backups: Skyvia has excellent backup and restore functionality to keep your data safe. It allows you to perform manual or scheduled data backups, ensuring critical information protection against data loss.

Top 6 Skyvia Alternatives 2024

Here are the noteworthy 6 Skyvia alternatives, each catering to unique requirements.

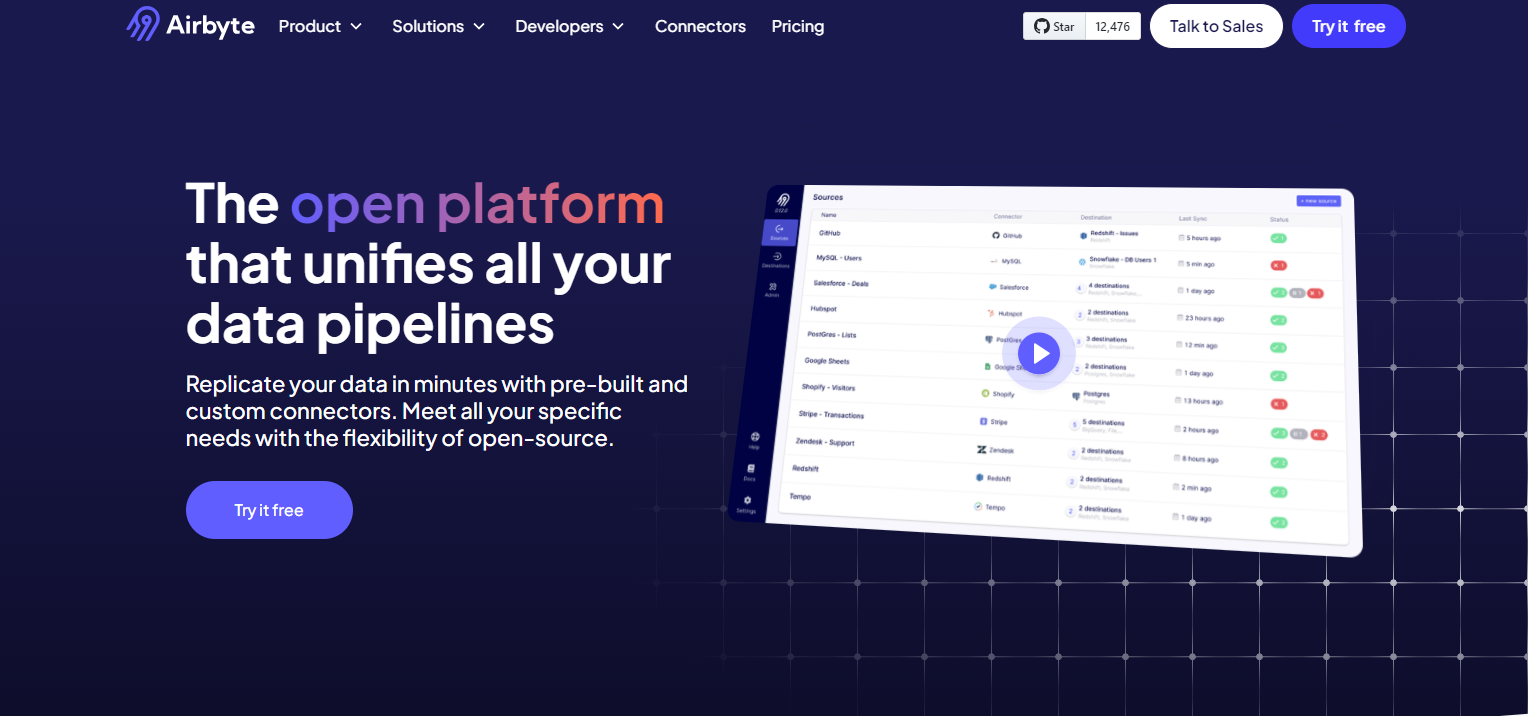

Airbyte

Airbyte is a robust data integration platform designed to simplify and streamline the process of collecting and loading data from multiple sources into a centralized data warehouse or data lake. It supports ingesting different types of structured and unstructured data from various sources. With its user-friendly interface and extensive feature set, Airbyte empowers you to efficiently manage your data integration workflows and derive actionable insights from diverse datasets.

Here’s a list of Airbyte’s key aspects:

- Low-code/No-code Interface: Airbyte’s no-code connectors enable you to connect to various data sources without writing any code. These connectors simplify the process of setting up data pipelines, allowing you to configure data replication processes between different systems through a user-friendly interface.

- Change Data Capture: By capturing only the incremental changes since the last replication, Airbyte's CDC technique removes the need to migrate the entire dataset on every sync. This, in turn, saves network bandwidth and compute resources and makes data synchronization more efficient.

- PyAirbyte: PyAirbyte is an open-source Python library that lets you use Airbyte connectors directly in code for data integration tasks. This helps you quickly build custom data pipelines without heavily relying on Docker and Kubernetes.

- Modern Cloud-Native Design: Airbyte's cloud-native architecture makes it well-suited for modern data environments. It allows you to seamlessly integrate with cloud-based data storage and processing solutions, offering more scalability and agility.

- AI-enabled Data Warehouses: You can power your Gen AI applications by leveraging Airbyte’s Snowflake Cortex destination. This native vector support allows you to store vector embeddings in Snowflake directly.

- RAG Transformation: Airbyte supports retrieval-augmented generation (RAG) workflows that help improve LLM-generated output. You can integrate Airbyte with popular LLM frameworks, like LangChain and LlamaIndex, and run RAG transformations so that your models answer from your own data.

- Security Certifications: Its adherence to security best practices is evidenced by its ISO 27001, GDPR, HIPAA, and SOC 2 certifications. Additionally, data in transit is encrypted with TLS (Transport Layer Security), and AES 256-bit encryption secures the metadata while it is at rest.
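To make the incremental idea behind Change Data Capture concrete, here is a minimal sketch in plain Python. It is not Airbyte's implementation: log-based CDC reads the database's change log, whereas this toy version approximates the same effect with an `updated_at` cursor. The `Destination` class, the `incremental_sync` function, and the sample rows are all hypothetical names made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Destination:
    """Toy destination that stores rows keyed by primary key."""
    rows: dict = field(default_factory=dict)
    cursor: int = 0  # highest `updated_at` value replicated so far


def incremental_sync(source_rows, dest):
    """Copy only rows changed since the last sync; return how many were copied."""
    changed = [r for r in source_rows if r["updated_at"] > dest.cursor]
    for row in changed:
        dest.rows[row["id"]] = dict(row)  # upsert by primary key
    if changed:
        dest.cursor = max(r["updated_at"] for r in changed)
    return len(changed)


# Hypothetical source table with a monotonically increasing `updated_at` column.
source = [
    {"id": 1, "name": "Ada", "updated_at": 10},
    {"id": 2, "name": "Linus", "updated_at": 12},
]
dest = Destination()
first = incremental_sync(source, dest)   # initial load copies both rows

source[0] = {"id": 1, "name": "Ada L.", "updated_at": 15}
second = incremental_sync(source, dest)  # only the updated row is copied
```

The second call moves a single row instead of the whole table, which is where the bandwidth savings come from. A cursor-based approach like this cannot see deleted rows, which is one reason log-based CDC is often preferred.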

Pricing

Airbyte offers four versions (Open Source, Cloud, Team, and Self-Managed Enterprise), with a pricing structure tailored to each. The Open Source version is free and maintained by its active community. The Cloud version operates on a pay-as-you-go model, so you pay only for what you sync. For more information, visit the Airbyte pricing page.

Advantages of Airbyte Over Skyvia

Discover the distinct features that make Airbyte a compelling alternative:

- Massive Connector Library: Airbyte provides you with a platform where you can seamlessly integrate data from various databases, APIs, data warehouses, or SaaS applications. Compared to Skyvia, Airbyte offers an extensive catalog consisting of 400+ pre-built connectors. This makes it flexible for ingesting data from diverse sources.

- Custom Connector Development: Unlike Skyvia, which gives you only limited options for building custom connectors, Airbyte allows you to create custom connectors using its Connector Development Kit (CDK) in just a few hours. This gives you more flexibility to integrate with diverse data sources and destinations.

- AI-powered Connector Builder: Together with Fractional AI, Airbyte has developed an AI-powered assistant for its Connector Builder feature. This feature automates the process of building custom connectors and provides suggestions to fine-tune your data pipeline.

- Developer-friendly Interfaces: With Airbyte, you can build your data pipelines in four convenient ways—UI, API, PyAirbyte, and Terraform Provider. Opt for the UI if you prefer a graphical approach. If you’re inclined towards programming, the API and Terraform Provider are your go-to choices. If you are looking to build LLM applications or integrate with AI frameworks, you can use PyAirbyte.

- Community Collaboration: Airbyte has a vibrant data and AI community comprising 900+ data engineers actively engaged with the platform. This community collaboration leads to rapid improvements, bug fixes, and support for users at all levels of expertise.

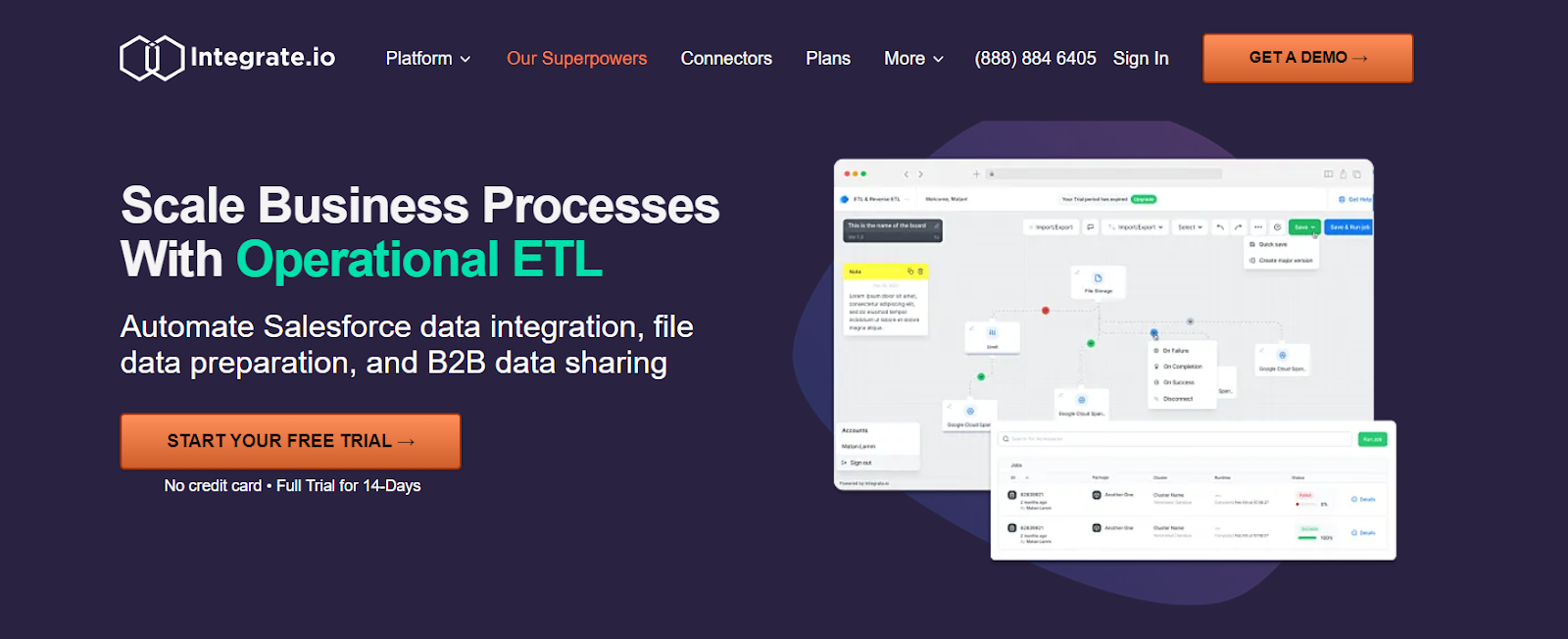

Integrate.io

Integrate.io is a cloud-based data integration platform that helps your business automate data consolidation, processing, and preparation for various purposes, primarily analytics and BI. It offers a no-code/low-code environment, making it easier for you to work with data pipelines. You can also use its data replication feature to identify and capture changes in the source and replicate them in the destination system.

Key aspects of Integrate.io:

- Using Integrate.io, you can manipulate data within the pipeline before it is loaded into the destination database. This capability not only reduces computing expenses but also accelerates processing speed, particularly beneficial when managing extensive datasets.

- Integrate.io provides features for mapping data fields between different systems, ensuring accurate data transformation.

- It offers comprehensive monitoring and logging functionalities for tracking the status and performance of the data integration process.
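As a rough illustration of what mapping fields between systems and transforming data before loading involves, the sketch below renames source fields to destination columns in plain Python. It does not use Integrate.io's API; `FIELD_MAP`, `map_record`, and the sample CRM record are hypothetical names chosen for this example.

```python
# Hypothetical mapping from source field names to destination column names.
FIELD_MAP = {
    "FirstName": "first_name",
    "LastName": "last_name",
    "Email": "email",
}


def map_record(record, field_map):
    """Rename mapped fields, drop unmapped ones, and fail loudly on missing fields."""
    missing = [src for src in field_map if src not in record]
    if missing:
        raise KeyError(f"missing source fields: {missing}")
    return {dst: record[src] for src, dst in field_map.items()}


crm_record = {
    "FirstName": "Ada",
    "LastName": "Lovelace",
    "Email": "ada@example.com",
    "InternalNote": "not loaded",  # unmapped, so it never reaches the destination
}
warehouse_row = map_record(crm_record, FIELD_MAP)
```

Because unmapped fields are dropped inside the pipeline, the destination stores and processes less data, which is the cost and speed benefit described above.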

Pricing

Integrate.io offers three pricing plans, each catering to varying needs and requirements. The Starter plan is free, while the Professional version extends functionality, such as supporting frequently running data pipelines. In addition, the Enterprise version provides a comprehensive and customized solution for growing data needs.

Informatica

Informatica is a data integration platform that provides a wide range of solutions to help you manage and integrate your data. It offers a comprehensive suite of data integration connectors that enable you to extract, transform, and load data from various sources into target systems. In addition to data integration, it offers advanced features for data quality management, data governance, and data security, ensuring your data is accurate and reliable.

Features of Informatica are:

- Informatica allows you to prepare and transform data without heavy reliance on IT, promoting self-service data preparation and analysis.

- Its master data management (MDM) capabilities enable you to create and maintain a single, trusted view of your master data. This improves data governance and ensures consistency across your organization.

- With Informatica, you can protect your sensitive data from external threats using various security measures it provides. These measures include access controls, encryption, data masking, and database credentials management.
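Data masking, one of the measures listed above, can be pictured with a short Python sketch. This is a generic illustration rather than Informatica's masking engine; `mask_email`, `pseudonymize`, and the salt value are hypothetical and exist only for this example.

```python
import hashlib


def mask_email(email):
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    return local[:1] + "***@" + domain


def pseudonymize(value, salt):
    """Replace a value with a stable token so joins still work on masked data."""
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return digest[:16]


masked = mask_email("ada@example.com")
token_a = pseudonymize("ada@example.com", salt="demo-salt")
token_b = pseudonymize("ada@example.com", salt="demo-salt")
```

The masked email stays readable enough for support work, while the pseudonymized token is the same every time for the same input and salt, so masked tables can still be joined on it.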

Pricing

Informatica pricing varies widely depending on factors such as the specific solutions you use. For details on pricing plans, contact the Informatica sales team.

Suggested Read: Informatica Competitors

Stitch

Stitch, a cloud-based data integration tool, simplifies data movement from over 140 sources into various cloud data warehouses or databases. It offers a user-friendly drag-and-drop interface and pre-built connectors to help you quickly set up and manage data pipelines without the need for infrastructure setup.

Features of Stitch are:

- As a part of the Talend ecosystem, Stitch seamlessly integrates with other Talend services, allowing you to leverage additional data management features as needed.

- Stitch Data provides orchestration functionalities, empowering you to govern your data pipelines comprehensively throughout the data replication process.

- It prioritizes data security and compliance, implementing encryption and other measures to protect sensitive information during integration.

Pricing

Stitch offers three pricing versions: Standard, Advanced, and Premium. The Standard version starts at $100 per month with a two-month free trial. The Advanced plan starts at $1,250 per month and offers more control and flexibility over pipelines. The Premium version costs $2,500 per month and adds robust security and compliance features.

Hevo Data

Hevo Data is a cloud-based data integration platform designed to simplify the process of collecting, transforming, and loading data from various sources into a data warehouse or destination of choice. With its extensive connectors, automation capabilities, and user-friendly interface, it allows you to streamline data integration workflows of all sizes.

Features of Hevo Data comprise:

- Hevo’s visual data mapping feature allows you to intuitively map data fields between sources and destinations, making data flow configuration clear and easy to understand.

- Its cloud-based architecture allows for on-demand scaling to accommodate changing data volumes and processing needs. This flexibility ensures smooth performance during data surges.

- Hevo Data supports real-time replication, enabling you to capture and process data in near real-time.

Pricing

Hevo Data provides four pricing plans: Free, Starter, Professional, and Business Critical. The Free plan costs nothing. The Starter plan costs $239 per month, and the Professional plan costs $679 per month. Lastly, the Business Critical plan has customized pricing and is recommended if you have enormous datasets.

Suggested Read: Hevo Alternatives

Apache NiFi

Apache NiFi is an open-source data integration tool that is designed to facilitate the flow of data between disparate systems. It provides a user-friendly interface for designing, managing, and monitoring data flow. NiFi is particularly useful for handling large volumes of data with low latency and high throughput. It supports horizontal scalability and can be deployed in clustered configurations to ensure fault tolerance.

Key features of Apache NiFi include:

- Its ability to process data in real time allows you to respond quickly to changes in your data, making it beneficial for IoT data collection, log processing, stream analytics, etc.

- NiFi provides robust security features such as authentication, authorization, encryption, and data provenance tracking to protect sensitive information.

Pricing

Apache NiFi is a free and open-source data processing software that allows you to easily handle large volumes of data with minimum effort.

Conclusion

Exploring alternative options to Skyvia in 2024 unveils a diverse landscape of data integration solutions. The top 6 competitors discussed showcase varying features, providing your business with ample choices to tailor its data integration strategies. As technology evolves, staying informed about these alternatives ensures that your organization can adapt to changing needs and harness the most effective tools for seamless data management.

Suggested Read: Matillion Competitors
