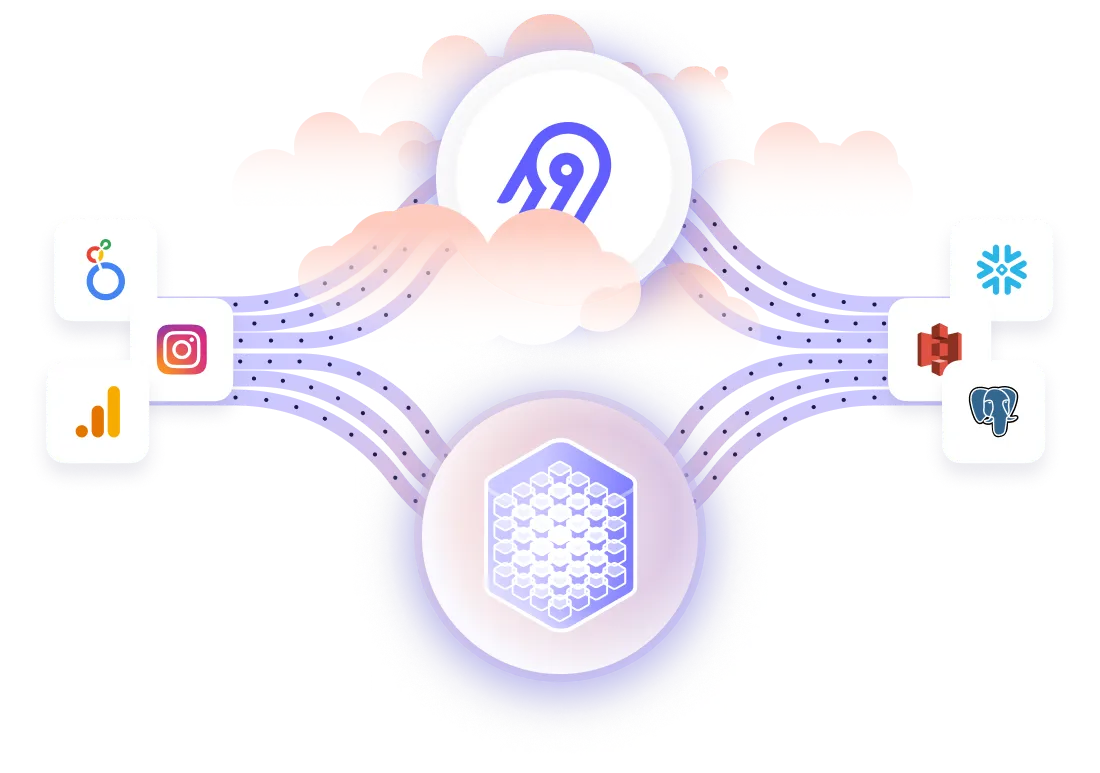

Top companies trust Airbyte to centralize their Data

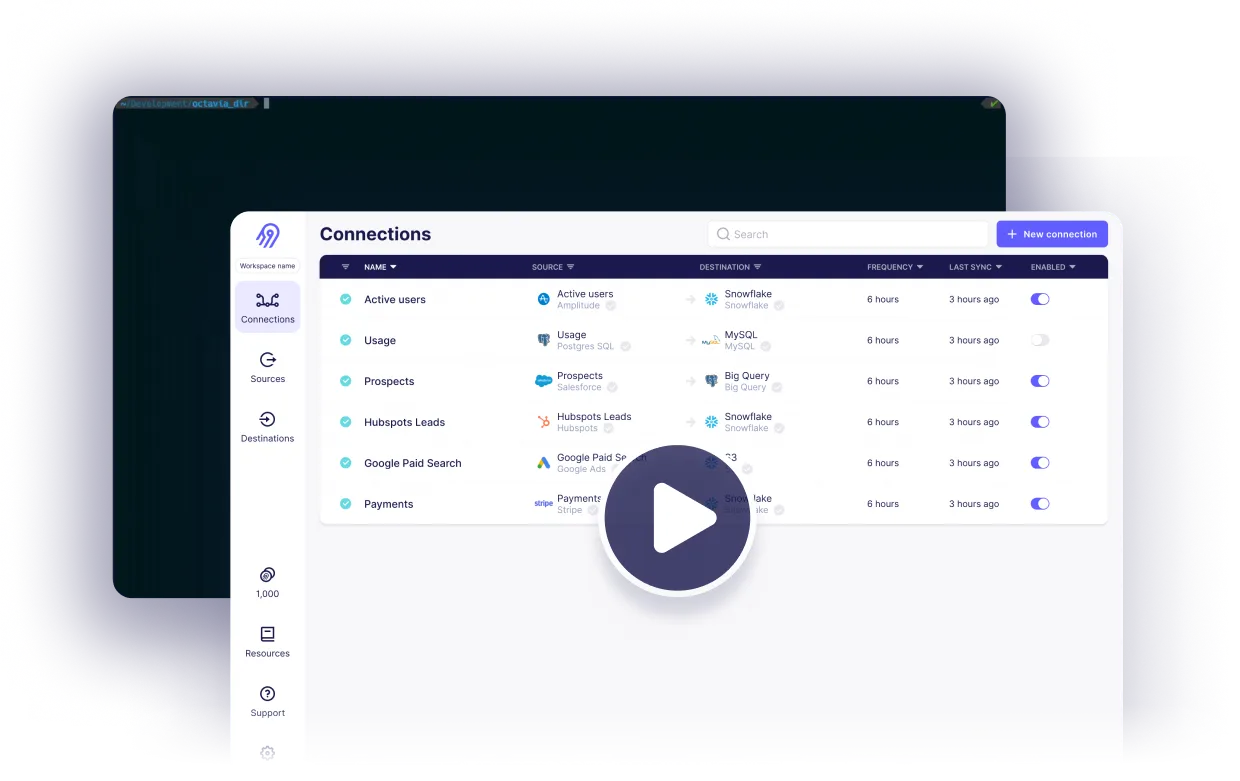

Sync your Data

Ship more quickly with the only solution that fits ALL your needs.

As your tools and edge cases grow, you deserve an extensible and open ELT solution that eliminates the time you spend building and maintaining data pipelines.

Leverage the largest catalog of connectors

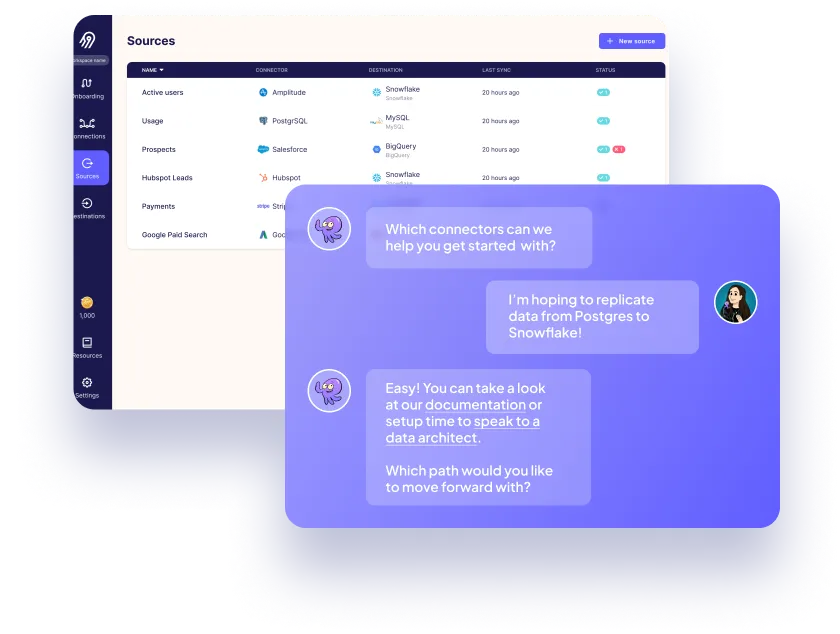

Cover your custom needs with our extensibility

Free your time from maintaining connectors, with automation

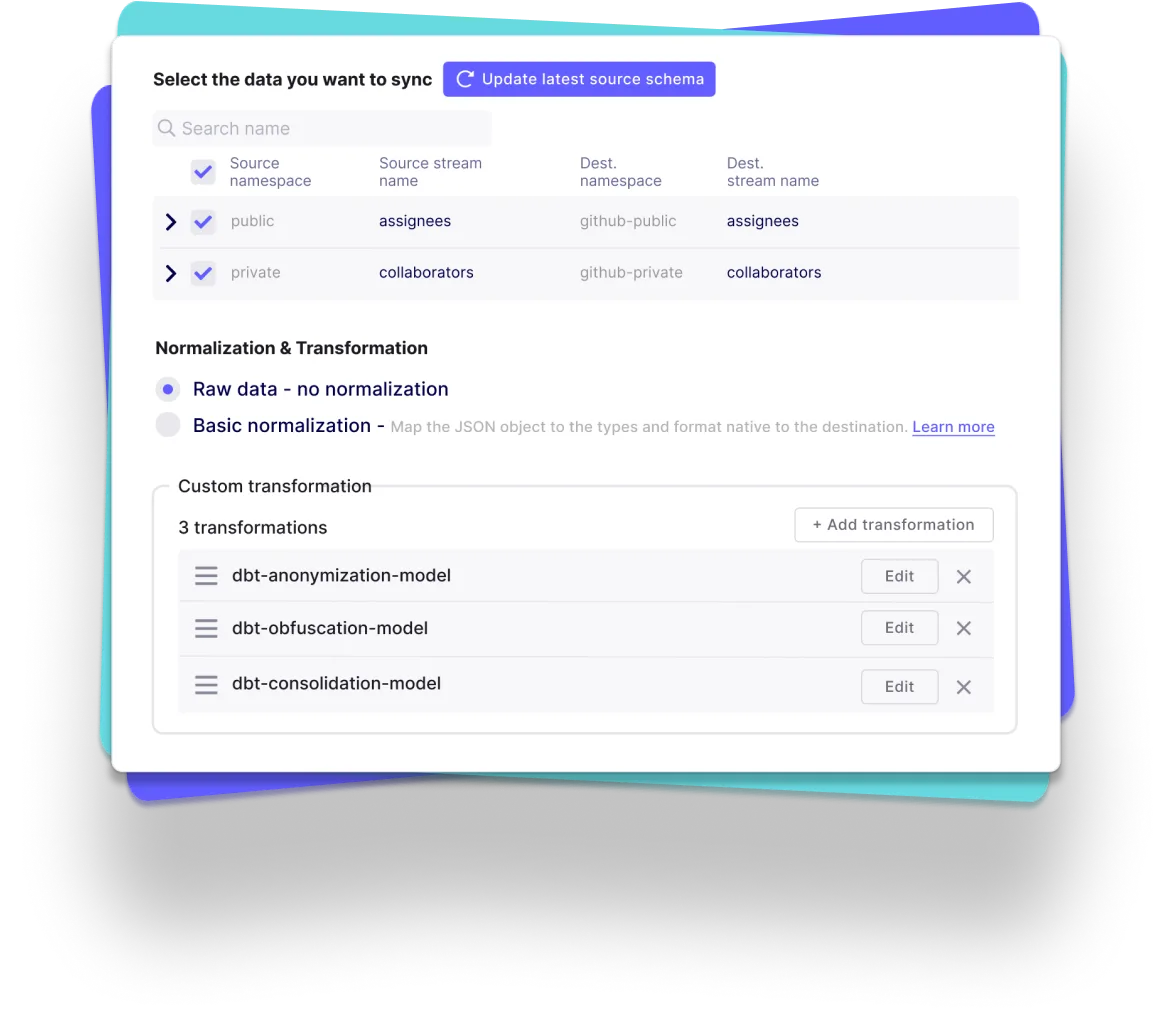

- Automated schema change handling, data normalization and more

- Automated data transformation orchestration with our dbt integration

- Automated workflows with our Airflow, Dagster and Prefect integrations

Reliability at every level

Airbyte Open Source

Airbyte Cloud

Airbyte Enterprise

Why choose Airbyte as the backbone of your data infrastructure?

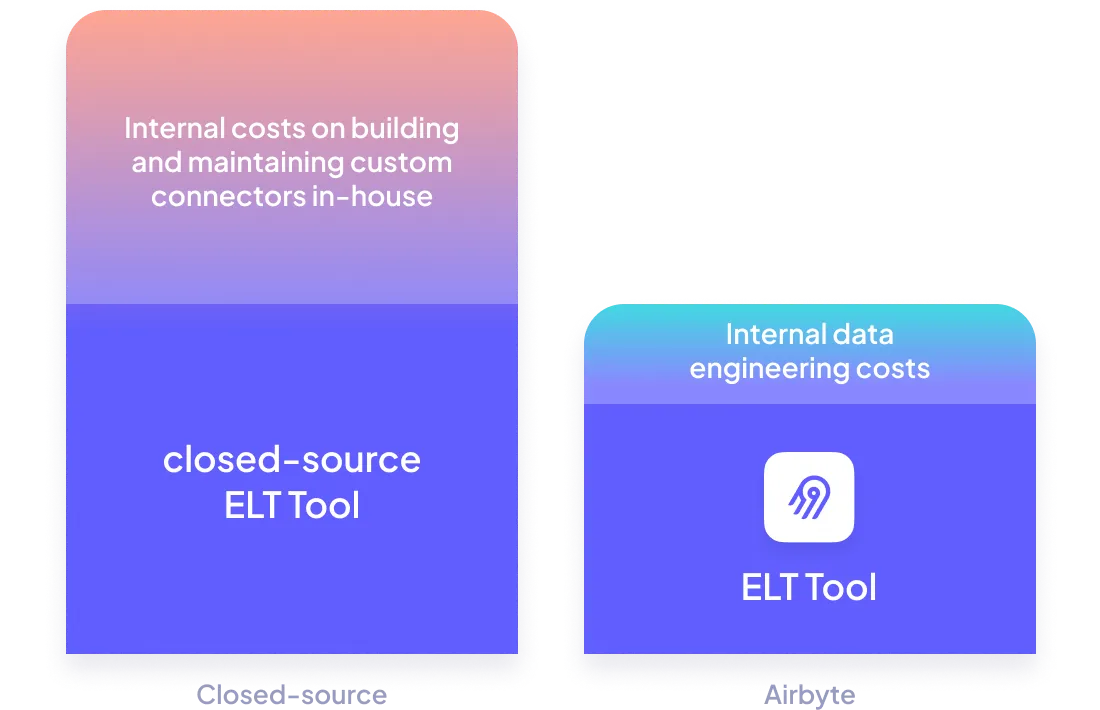

Keep your data engineering costs in check

Get Airbyte hosted where you need it to be

- Airbyte Cloud: Have it hosted by us, with all the security you need (SOC2, ISO, GDPR, HIPAA Conduit).

- Airbyte Enterprise: Have it hosted within your own infrastructure, so your data and secrets never leave it.

White-glove enterprise-level support

Including for your Airbyte Open Source instance with our premium support.

Fnatic, based out of London, is the world's leading esports organization, with a winning legacy of 16 years and counting in over 28 different titles, generating over 13m USD in prize money. Fnatic has an engaged follower base of 14m across their social media platforms and hundreds of millions of people watch their teams compete in League of Legends, CS:GO, Dota 2, Rainbow Six Siege, and many more titles every year.

Ready to get started?

FAQs

What is ETL?

ETL, an acronym for Extract, Transform, Load, is a vital data integration process. It involves extracting data from diverse sources, transforming it into a usable format, and loading it into a database, data warehouse or data lake. This process enables meaningful data analysis, enhancing business intelligence.

The Zenloop connector supports both full refresh and incremental syncs for both answer endpoints. Each time a sync runs, you can choose whether the connector copies only new or updated data, or all rows in the tables and columns you set up for replication. Zenloop is an integrated experience management platform based on the Net Promoter Score, designed to improve customer relationships. The Zenloop API provides programmatic access to, and integration with, this customer feedback platform.
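To make the two sync modes concrete, here is a minimal sketch in plain Python. It does not use the Airbyte or Zenloop APIs; the record layout and the `inserted_at` cursor field are assumptions chosen for illustration only.

```python
# Hypothetical source records. "inserted_at" stands in for whatever
# cursor field a real stream exposes (an assumption for this sketch).
SOURCE = [
    {"id": 1, "score": 9, "inserted_at": "2024-01-01T10:00:00Z"},
    {"id": 2, "score": 4, "inserted_at": "2024-01-02T09:30:00Z"},
    {"id": 3, "score": 10, "inserted_at": "2024-01-03T14:15:00Z"},
]


def full_refresh(source):
    """Return every record, regardless of what was synced before."""
    return list(source)


def incremental(source, cursor):
    """Return only records newer than the saved cursor, plus the new cursor.

    Timestamps share one ISO 8601 format, so string comparison orders them.
    """
    new = [r for r in source if cursor is None or r["inserted_at"] > cursor]
    new_cursor = max((r["inserted_at"] for r in new), default=cursor)
    return new, new_cursor


# First incremental run has no cursor, so it behaves like a full refresh.
first_batch, saved_cursor = incremental(SOURCE, None)

# A second run with the saved cursor copies nothing until new data arrives.
second_batch, saved_cursor = incremental(SOURCE, saved_cursor)
```

A full refresh is simpler but re-copies everything on each run, while an incremental sync depends on a reliable cursor field and on persisting that cursor between runs.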

Zenloop's API provides access to various types of data related to customer feedback and satisfaction. The categories of data that can be accessed through Zenloop's API are:

1. Feedback data: This includes all the feedback received from customers through various channels such as email, web forms, and social media.

2. Customer data: This includes information about customers such as their name, email address, phone number, and other contact details.

3. Survey data: This includes data related to surveys conducted by the company to gather feedback from customers.

4. Net Promoter Score (NPS) data: This includes data related to the NPS score of the company, which is a measure of customer satisfaction and loyalty.

5. Sentiment analysis data: This includes data related to the sentiment of customer feedback, which can help companies understand the overall sentiment of their customers towards their products or services.

6. Analytics data: This includes data related to customer behavior, such as the number of visits to the company's website, the time spent on the website, and the pages visited.

Overall, Zenloop's API provides access to a wide range of data that can help companies gain insights into customer feedback and satisfaction, and make data-driven decisions to improve their products and services.
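As an illustration of what NPS data represents, the sketch below computes a Net Promoter Score using the standard definition: the percentage of promoters (scores 9 to 10) minus the percentage of detractors (scores 0 to 6) on a 0 to 10 scale. The function name and the sample scores are made up for this example and are not part of Zenloop's API.

```python
def net_promoter_score(scores):
    """Compute NPS from a list of 0-10 survey scores.

    Promoters score 9 or 10, detractors score 0 to 6; passives (7 or 8)
    count toward the total but toward neither group.
    """
    if not scores:
        raise ValueError("at least one score is required")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


# Hypothetical survey answers: 3 promoters, 1 passive, 1 detractor.
sample_scores = [10, 9, 9, 8, 5]
nps = net_promoter_score(sample_scores)  # 40.0
```

The result ranges from -100 (all detractors) to 100 (all promoters).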

What is ELT?

ELT, standing for Extract, Load, Transform, is a modern take on the traditional ETL data integration process. In ELT, data is first extracted from various sources, loaded directly into a data warehouse, and then transformed. This approach enhances data processing speed, analytical flexibility and autonomy.

Difference between ETL and ELT?

ETL and ELT are critical data integration strategies with key differences. ETL (Extract, Transform, Load) transforms data before loading, ideal for structured data. In contrast, ELT (Extract, Load, Transform) loads data before transformation, perfect for processing large, diverse data sets in modern data warehouses. ELT is becoming the new standard as it offers a lot more flexibility and autonomy to data analysts.
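The difference in ordering can be sketched with Python's built-in `sqlite3` module standing in for a data warehouse. This is a toy illustration, not how Airbyte implements either approach; the table names and sample rows are assumptions.

```python
import sqlite3

# Hypothetical raw rows as they might arrive from a source: untrimmed
# emails and scores stored as strings.
RAW = [("  Alice@Example.com ", "9"), ("bob@example.com", "4")]


def etl(conn, rows):
    """ETL: transform in application code first, then load cleaned rows."""
    cleaned = [(email.strip().lower(), int(score)) for email, score in rows]
    conn.execute("CREATE TABLE answers (email TEXT, score INTEGER)")
    conn.executemany("INSERT INTO answers VALUES (?, ?)", cleaned)


def elt(conn, rows):
    """ELT: load raw rows untouched, then transform inside the database."""
    conn.execute("CREATE TABLE raw_answers (email TEXT, score TEXT)")
    conn.executemany("INSERT INTO raw_answers VALUES (?, ?)", rows)
    conn.execute(
        "CREATE TABLE answers AS "
        "SELECT lower(trim(email)) AS email, CAST(score AS INTEGER) AS score "
        "FROM raw_answers"
    )


def run(pipeline):
    """Run a pipeline against a fresh in-memory database and read the result."""
    conn = sqlite3.connect(":memory:")
    pipeline(conn, RAW)
    rows = conn.execute(
        "SELECT email, score FROM answers ORDER BY email"
    ).fetchall()
    conn.close()
    return rows


etl_rows = run(etl)
elt_rows = run(elt)
```

Both pipelines end with the same cleaned table, but the ELT version also keeps `raw_answers` in the warehouse, so analysts can rewrite the transformation later without re-extracting the data.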