Set up in 3 easy steps

Start moving your data securely and reliably to any destination.

Choose Destination

Select from 50+ data warehouses, lakes, or databases to load your extracted data.

Configure Connection

Select streams, sync frequency, and sync modes (Full Refresh or Incremental).
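To make the two sync modes concrete, here is a minimal conceptual sketch in plain Python. It is not Airbyte's implementation or API: the `StreamState` class, the `full_refresh` and `incremental` functions, and the `updated_at` cursor field are all hypothetical names chosen for illustration. It assumes each record carries a cursor value that only grows over time.

```python
from dataclasses import dataclass
from typing import Any, Optional

Record = dict[str, Any]


@dataclass
class StreamState:
    """Hypothetical per-stream state: the highest cursor value synced so far."""
    cursor: Optional[str] = None


def full_refresh(source_rows: list[Record]) -> list[Record]:
    """Full Refresh: re-read every record from the source on every sync."""
    return list(source_rows)


def incremental(
    source_rows: list[Record],
    state: StreamState,
    cursor_field: str = "updated_at",  # assumed cursor column, for illustration
) -> list[Record]:
    """Incremental: read only records whose cursor is newer than the saved state."""
    new_rows = [
        row for row in source_rows
        if state.cursor is None or row[cursor_field] > state.cursor
    ]
    if new_rows:
        # Advance the saved cursor so the next sync skips these records.
        state.cursor = max(row[cursor_field] for row in new_rows)
    return new_rows


rows = [
    {"id": 1, "updated_at": "2024-01-01"},
    {"id": 2, "updated_at": "2024-01-02"},
]
state = StreamState()

first_sync = incremental(rows, state)    # nothing synced yet, so both records
rows.append({"id": 3, "updated_at": "2024-01-03"})
second_sync = incremental(rows, state)   # only the record added since the first sync
refresh_sync = full_refresh(rows)        # every record, regardless of state
```

Incremental mode keeps each sync small at the cost of tracking state per stream, while Full Refresh needs no state but re-reads everything each time.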

Why Airbyte?

Airbyte is the only unified data movement platform built on an open standard, uniquely positioned for connector extensibility and AI workflows.

Syncing data from your source is only one of your 1,000 future data pipeline needs.

Leverage the largest Marketplace of 600+ pre-built connectors. Join 2,000+ data engineers who built 7,000+ custom connectors in minutes with the low-code/no-code Connector Builder or AI Assistant.

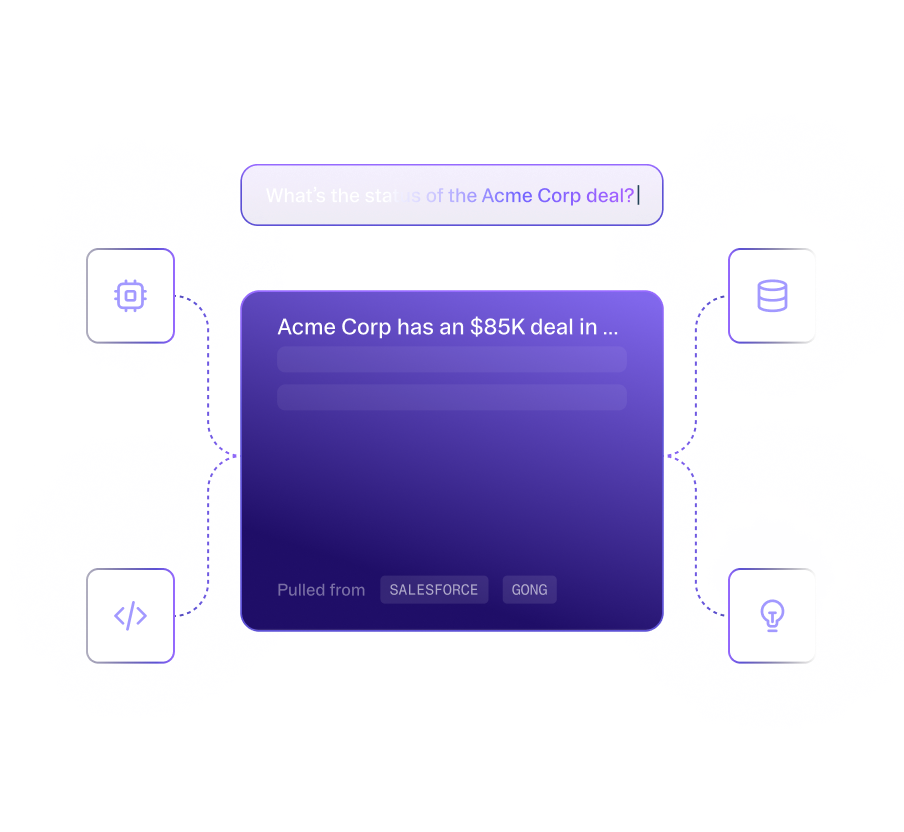

Create context for AI agents

Airbyte's pipelines move structured and unstructured data together, preserving metadata along the way. With support for flexible destinations such as Iceberg, Airbyte is the ideal data movement solution for agentic applications.

Is there a specific way you would like to sync your data? Airbyte has you covered.

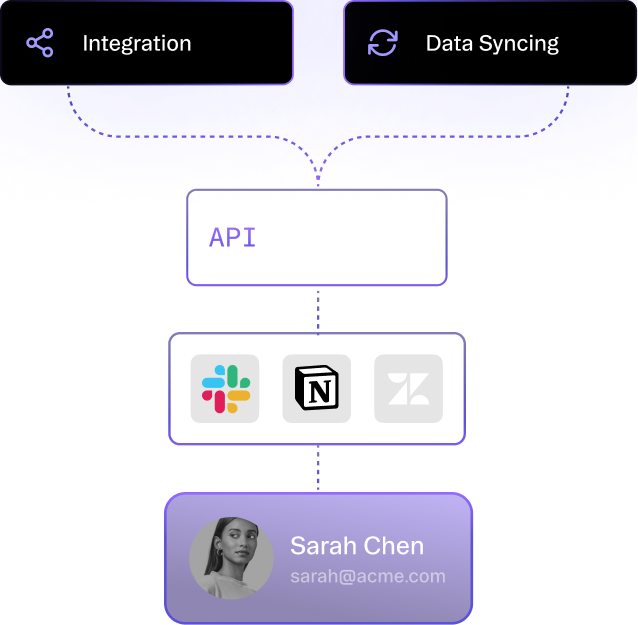

UI: Create connections and custom connectors in minutes.

API: Programmatic interactions, data syncing, and embedded connectors.

Terraform: Integration with CI/CD tools and rapid deployment with Infrastructure as Code.

PyAirbyte: Build LLM applications with Python libraries, SQL tools, and AI frameworks.

Flexible deployment options: self-hosted, cloud, and hybrid

Secure and compliant: ISO 27001, SOC 2, GDPR, HIPAA, data encryption, audit/monitoring, SSO, RBAC, and more. Centralized multi-tenant management with self-serve capabilities.

Trusted by AI and Data leaders

Whether you're building Agents or Data Pipelines.